“Fragments” of Yandex’s codebase leaked on-line final week. Very similar to Google, Yandex is a platform with many facets akin to electronic mail, maps, a taxi service, and so forth. The code leak featured chunks of all of it.

Based on the documentation therein, Yandex’s codebase was folded into one giant repository known as Arcadia in 2013. The leaked codebase is a subset of all initiatives in Arcadia and we discover a number of elements in it associated to the search engine within the “Kernel,” “Library,” “Robotic,” “Search,” and “ExtSearch” archives.

The transfer is wholly unprecedented. Not because the AOL search question information of 2006 has one thing so materials associated to an internet search engine entered the general public area.

Though we’re lacking the information and lots of recordsdata which might be referenced, that is the primary occasion of a tangible have a look at how a contemporary search engine works on the code degree.

Personally, I can’t recover from how incredible the timing is to have the ability to truly see the code as I end my ebook “The Science of Search engine optimisation” the place I’m speaking about Info Retrieval, how fashionable engines like google truly work, and how you can construct a easy one your self.

In any occasion, I’ve been parsing by means of the code since final Thursday and any engineer will inform you that’s not sufficient time to grasp how every part works. So, I believe there will probably be a number of extra posts as I maintain tinkering.

Earlier than we soar in, I need to give a shout-out to Ben Wills at Ontolo for sharing the code with me, pointing me within the preliminary route of the place the great things is, and going forwards and backwards with me as we deciphered issues. Be happy to seize the spreadsheet with all the information we’ve compiled concerning the rating elements right here.

Additionally, shout out to Ryan Jones for digging in and sharing some key findings with me over IM.

OK, let’s get busy!

It’s not Google’s code, so why will we care?

Some imagine that reviewing this codebase is a distraction and that there’s nothing that can affect how they make enterprise selections. I discover that curious contemplating these are folks from the identical Search engine optimisation neighborhood that used the CTR mannequin from the 2006 AOL information because the business customary for modeling throughout any search engine for a few years to observe.

That mentioned, Yandex will not be Google. But the 2 are state-of-the-art net engines like google which have continued to remain on the slicing fringe of know-how.

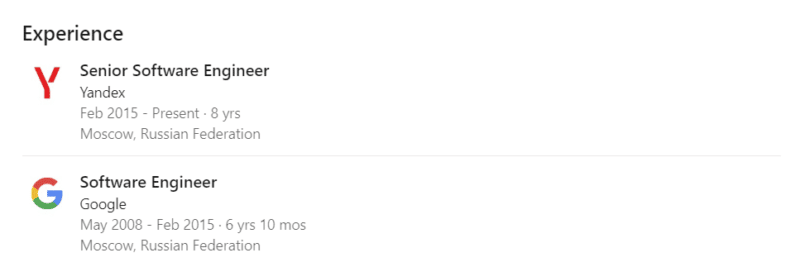

Software program engineers from each corporations go to the identical conferences (SIGIR, ECIR, and so forth) and share findings and improvements in Info Retrieval, Pure Language Processing/Understanding, and Machine Studying. Yandex additionally has a presence in Palo Alto and Google beforehand had a presence in Moscow.

A fast LinkedIn search uncovers just a few hundred engineers which have labored at each corporations, though we don’t know what number of of them have truly labored on Search at each corporations.

In a extra direct overlap, Yandex additionally makes utilization of Google’s open supply applied sciences which were important to improvements in Search like TensorFlow, BERT, MapReduce, and, to a a lot lesser extent, Protocol Buffers.

So, whereas Yandex is definitely not Google, it’s additionally not some random analysis challenge that we’re speaking about right here. There’s a lot we will find out about how a contemporary search engine is constructed from reviewing this codebase.

On the very least, we will disabuse ourselves of some out of date notions that also permeate Search engine optimisation instruments like text-to-code ratios and W3C compliance or the overall perception that Google’s 200 alerts are merely 200 particular person on and off-page options somewhat than lessons of composite elements that doubtlessly use hundreds of particular person measures.

Some context on Yandex’s structure

With out context or the flexibility to efficiently compile, run, and step by means of it, supply code could be very tough to make sense of.

Sometimes, new engineers get documentation, walk-throughs, and have interaction in pair programming to get onboarded to an current codebase. And, there may be some restricted onboarding documentation associated to establishing the construct course of within the docs archive. Nonetheless, Yandex’s code additionally references inner wikis all through, however these haven’t leaked and the commenting within the code can be fairly sparse.

Fortunately, Yandex does give some insights into its structure in its public documentation. There are additionally a few patents they’ve printed within the US that assist shed a bit of sunshine. Particularly:

As I’ve been researching Google for my ebook, I’ve developed a a lot deeper understanding of the construction of its rating methods by means of varied whitepapers, patents, and talks from engineers couched in opposition to my Search engine optimisation expertise. I’ve additionally spent lots of time sharpening my grasp of basic Info Retrieval greatest practices for net engines like google. It comes as no shock that there are certainly some greatest practices and similarities at play with Yandex.

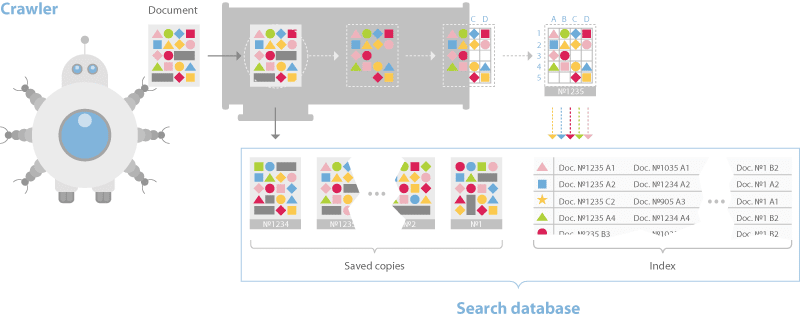

Yandex’s documentation discusses a dual-distributed crawler system. One for real-time crawling known as the “Orange Crawler” and one other for basic crawling.

Traditionally, Google is alleged to have had an index stratified into three buckets, one for housing real-time crawl, one for commonly crawled and one for not often crawled. This method is taken into account a greatest observe in IR.

Yandex and Google differ on this respect, however the basic thought of segmented crawling pushed by an understanding of replace frequency holds.

One factor price calling out is that Yandex has no separate rendering system for JavaScript. They are saying this of their documentation and, though they’ve Webdriver-based system for visible regression testing known as Gemini, they restrict themselves to text-based crawl.

The documentation additionally discusses a sharded database construction that breaks pages down into an inverted index and a doc server.

Similar to most different net engines like google the indexing course of builds a dictionary, caches pages, after which locations information into the inverted index such that bigrams and trigams and their placement within the doc is represented.

This differs from Google in that they moved to phrase-based indexing, that means n-grams that may be for much longer than trigrams a very long time in the past.

Nonetheless, the Yandex system makes use of BERT in its pipeline as properly, so in some unspecified time in the future paperwork and queries are transformed to embeddings and nearest neighbor search methods are employed for rating.

The rating course of is the place issues start to get extra fascinating.

Yandex has a layer known as Metasearch the place cached standard search outcomes are served after they course of the question. If the outcomes aren’t discovered there, then the search question is distributed to a collection of hundreds of various machines within the Primary Search layer concurrently. Every builds a posting listing of related paperwork then returns it to MatrixNet, Yandex’s neural community utility for re-ranking, to construct the SERP.

Primarily based on movies whereby Google engineers have talked about Search’s infrastructure, that rating course of is kind of much like Google Search. They speak about Google’s tech being in shared environments the place varied functions are on each machine and jobs are distributed throughout these machines primarily based on the supply of computing energy.

One of many use circumstances is precisely this, the distribution of queries to an assortment of machines to course of the related index shards shortly. Computing the posting lists is the primary place that we have to think about the rating elements.

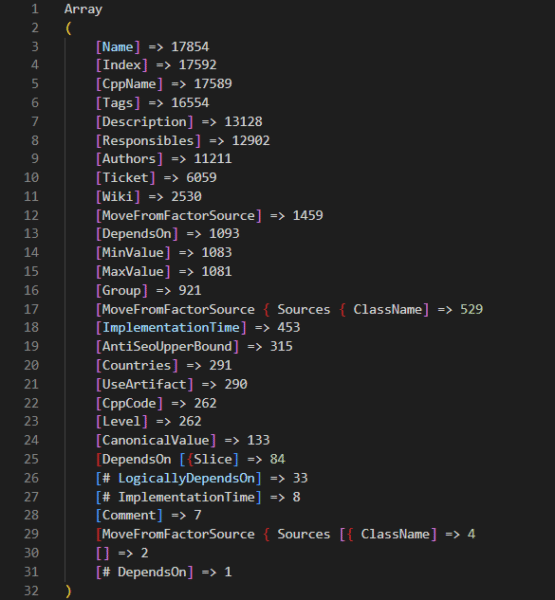

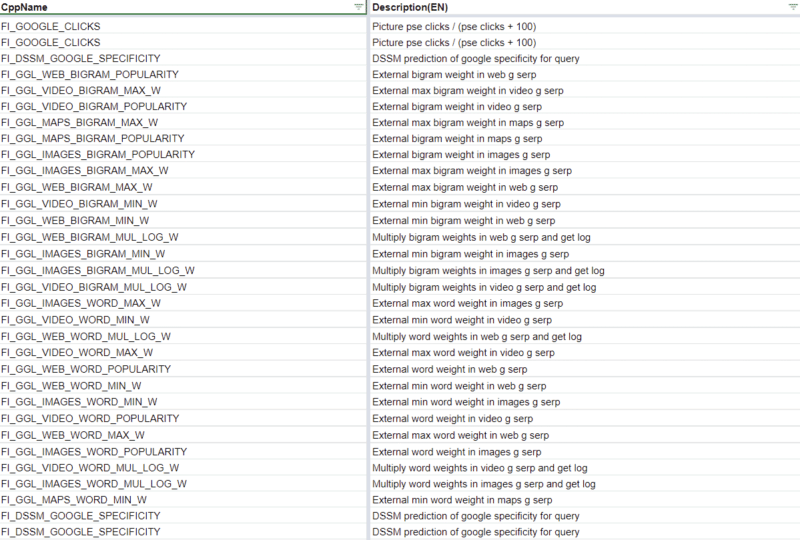

There are 17,854 rating elements within the codebase

On the Friday following the leak, the inimitable Martin MacDonald eagerly shared a file from the codebase known as web_factors_info/factors_gen.in. The file comes from the “Kernel” archive within the codebase leak and options 1,922 rating elements.

Naturally, the Search engine optimisation neighborhood has run with that quantity and that file to eagerly unfold information of the insights therein. Many of us have translated the descriptions and constructed instruments or Google Sheets and ChatGPT to make sense of the information. All of that are nice examples of the facility of the neighborhood. Nonetheless, the 1,922 represents simply one among many units of rating elements within the codebase.

A deeper dive into the codebase reveals that there are quite a few rating issue recordsdata for various subsets of Yandex’s question processing and rating methods.

Combing by means of these, we discover that there are literally 17,854 rating elements in complete. Included in these rating elements are a wide range of metrics associated to:

- Clicks.

- Dwell time.

- Leveraging Yandex’s Google Analytics equal, Metrika.

There may be additionally a collection of Jupyter notebooks which have an extra 2,000 elements exterior of these within the core code. Presumably, these Jupyter notebooks symbolize exams the place engineers are contemplating further elements so as to add to the codebase. Once more, you possibly can assessment all of those options with metadata that we collected from throughout the codebase at this hyperlink.

Yandex’s documentation additional clarifies that they’ve three lessons of rating elements: Static, Dynamic, and people associated particularly to the person’s search and the way it was carried out. In their very own phrases:

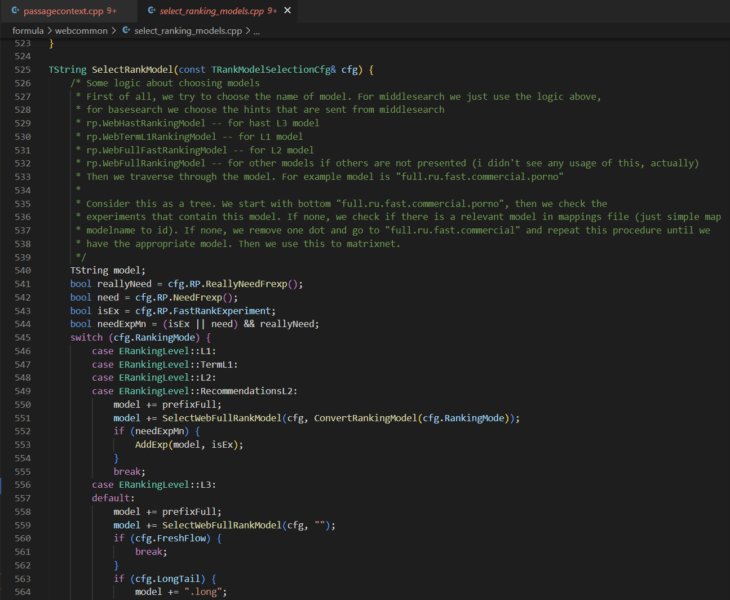

Within the codebase these are indicated within the rank elements recordsdata with the tags TG_STATIC and TG_DYNAMIC. The search associated elements have a number of tags akin to TG_QUERY_ONLY, TG_QUERY, TG_USER_SEARCH, and TG_USER_SEARCH_ONLY.

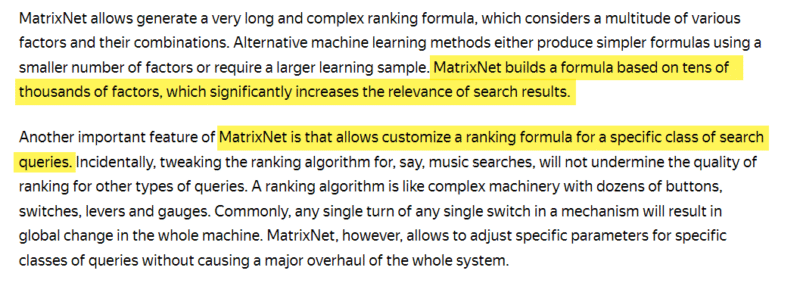

Whereas we now have uncovered a possible 18k rating elements to select from, the documentation associated to MatrixNet signifies that scoring is constructed from tens of hundreds of things and customised primarily based on the search question.

This means that the rating atmosphere is very dynamic, much like that of Google atmosphere. Based on Google’s “Framework for evaluating scoring features” patent, they’ve lengthy had one thing comparable the place a number of features are run and the very best set of outcomes are returned.

Lastly, contemplating that the documentation references tens of hundreds of rating elements, we must also needless to say there are numerous different recordsdata referenced within the code which might be lacking from the archive. So, there may be seemingly extra occurring that we’re unable to see. That is additional illustrated by reviewing the photographs within the onboarding documentation which exhibits different directories that aren’t current within the archive.

As an example, I believe there may be extra associated to the DSSM within the /semantic-search/ listing.

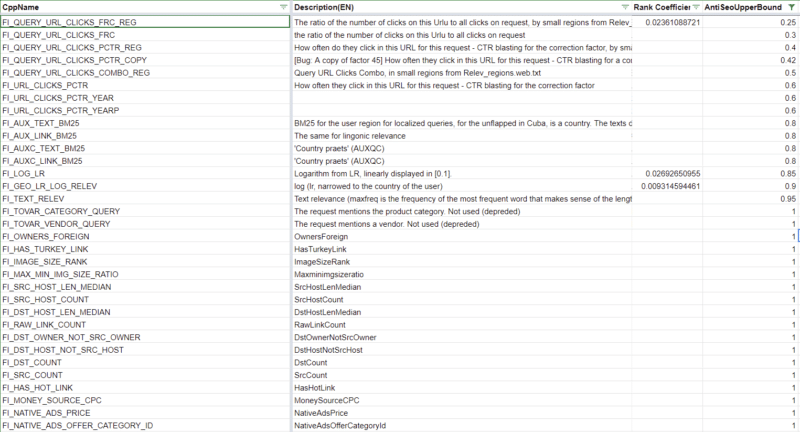

The preliminary weighting of rating elements

I first operated below the idea that the codebase didn’t have any weights for the rating elements. Then I used to be shocked to see that the nav_linear.h file within the /search/relevance/ listing options the preliminary coefficients (or weights) related to rating elements on full show.

This part of the code highlights 257 of the 17,000+ rating elements we’ve recognized. (Hat tip to Ryan Jones for pulling these and lining them up with the rating issue descriptions.)

For readability, whenever you consider a search engine algorithm, you’re most likely considering of an extended and complicated mathematical equation by which each and every web page is scored primarily based on a collection of things. Whereas that’s an oversimplification, the next screenshot is an excerpt of such an equation. The coefficients symbolize how essential every issue is and the ensuing computed rating is what could be used to attain selecter pages for relevance.

These values being hard-coded means that that is definitely not the one place that rating occurs. As a substitute, this operate is probably the place the preliminary relevance scoring is finished to generate a collection of posting lists for every shard being thought-about for rating. Within the first patent listed above, they speak about this as an idea of query-independent relevance (QIR) which then limits paperwork previous to reviewing them for query-specific relevance (QSR).

The ensuing posting lists are then handed off to MatrixNet with question options to match in opposition to. So whereas we don’t know the specifics of the downstream operations (but), these weights are nonetheless useful to grasp as a result of they inform you the necessities for a web page to be eligible for the consideration set.

Nonetheless, that brings up the following query: what will we find out about MatrixNet?

There may be neural rating code within the Kernel archive and there are quite a few references to MatrixNet and “mxnet” in addition to many references to Deep Structured Semantic Fashions (DSSM) all through the codebase.

The outline of one of many FI_MATRIXNET rating issue signifies that MatrixNet is utilized to all elements.

Issue {

Index: 160

CppName: “FI_MATRIXNET”

Identify: “MatrixNet”

Tags: [TG_DOC, TG_DYNAMIC, TG_TRANS, TG_NOT_01, TG_REARR_USE, TG_L3_MODEL_VALUE, TG_FRESHNESS_FROZEN_POOL]

Description: “MatrixNet is utilized to all elements – the method”

}

There’s additionally a bunch of binary recordsdata that could be the pre-trained fashions themselves, nevertheless it’s going to take me extra time to unravel these facets of the code.

What is instantly clear is that there are a number of ranges to rating (L1, L2, L3) and there may be an assortment of rating fashions that may be chosen at every degree.

The selecting_rankings_model.cpp file means that totally different rating fashions could also be thought-about at every layer all through the method. That is principally how neural networks work. Every degree is a side that completes operations and their mixed computations yield the re-ranked listing of paperwork that finally seems as a SERP. I’ll observe up with a deep dive on MatrixNet when I’ve extra time. For those who want a sneak peek, try the Search end result ranker patent.

For now, let’s check out some fascinating rating elements.

High 5 negatively weighted preliminary rating elements

The next is an inventory of the very best negatively weighted preliminary rating elements with their weights and a short clarification primarily based on their descriptions translated from Russian.

- FI_ADV: -0.2509284637 -This issue determines that there’s promoting of any variety on the web page and points the heaviest weighted penalty for a single rating issue.

- FI_DATER_AGE: -0.2074373667 – This issue is the distinction between the present date and the date of the doc decided by a dater operate. The worth is 1 if the doc date is similar as in the present day, 0 if the doc is 10 years or older, or if the date will not be outlined. This means that Yandex has a desire for older content material.

- FI_QURL_STAT_POWER: -0.1943768768 – This issue is the variety of URL impressions because it pertains to the question. It appears as if they need to demote a URL that seems in lots of searches to advertise range of outcomes.

- FI_COMM_LINKS_SEO_HOSTS: -0.1809636391 – This issue is the share of inbound hyperlinks with “business” anchor textual content. The issue reverts to 0.1 if the proportion of such hyperlinks is greater than 50%, in any other case, it’s set to 0.

- FI_GEO_CITY_URL_REGION_COUNTRY: -0.168645758 – This issue is the geographical coincidence of the doc and the nation that the person searched from. This one doesn’t fairly make sense if 1 signifies that the doc and the nation match.

In abstract, these elements point out that, for the very best rating, you must:

- Keep away from adverts.

- Replace older content material somewhat than make new pages.

- Ensure most of your hyperlinks have branded anchor textual content.

Every part else on this listing is past your management.

High 5 positively weighted preliminary rating elements

To observe up, right here’s an inventory of the very best weighted optimistic rating elements.

- FI_URL_DOMAIN_FRACTION: +0.5640952971 – This issue is an odd masking overlap of the question versus the area of the URL. The instance given is Chelyabinsk lottery which abbreviated as chelloto. To compute this worth, Yandex discover three-letters which might be coated (che, hel, lot, olo), see what quantity of all of the three-letter mixtures are within the area title.

- FI_QUERY_DOWNER_CLICKS_COMBO: +0.3690780393 – The outline of this issue is that’s “cleverly mixed of FRC and pseudo-CTR.” There is no such thing as a speedy indication of what FRC is.

- FI_MAX_WORD_HOST_CLICKS: +0.3451158835 – This issue is the clickability of an important phrase within the area. For instance, for all queries in which there’s the phrase “wikipedia” click on on wikipedia pages.

- FI_MAX_WORD_HOST_YABAR: +0.3154394573 – The issue description says “essentially the most attribute question phrase similar to the location, in line with the bar.” I’m assuming this implies the key phrase most looked for in Yandex Toolbar related to the location.

- FI_IS_COM: +0.2762504972 – The issue is that the area is a .COM.

In different phrases:

- Play phrase video games together with your area.

- Ensure it’s a dot com.

- Encourage folks to seek for your goal key phrases within the Yandex Bar.

- Preserve driving clicks.

There are many sudden preliminary rating elements

What’s extra fascinating within the preliminary weighted rating elements are the sudden ones. The next is an inventory of seventeen elements that stood out.

- FI_PAGE_RANK: +0.1828678331 – PageRank is the seventeenth highest weighted think about Yandex. They beforehand eliminated hyperlinks from their rating system completely, so it’s not too surprising how low it’s on the listing.

- FI_SPAM_KARMA: +0.00842682963 – The Spam karma is called after “antispammers” and is the probability that the host is spam; primarily based on Whois info

- FI_SUBQUERY_THEME_MATCH_A: +0.1786465163 – How carefully the question and the doc match thematically. That is the nineteenth highest weighted issue.

- FI_REG_HOST_RANK: +0.1567124399 – Yandex has a bunch (or area) rating issue.

- FI_URL_LINK_PERCENT: +0.08940421124 – Ratio of hyperlinks whose anchor textual content is a URL (somewhat than textual content) to the full variety of hyperlinks.

- FI_PAGE_RANK_UKR: +0.08712279101 – There’s a particular Ukranian PageRank

- FI_IS_NOT_RU: +0.08128946612 – It’s a optimistic factor if the area will not be a .RU. Apparently, the Russian search engine doesn’t belief Russian websites.

- FI_YABAR_HOST_AVG_TIME2: +0.07417219313 – That is the typical dwell time as reported by YandexBar

- FI_LERF_LR_LOG_RELEV: +0.06059448504 – That is hyperlink relevance primarily based on the standard of every hyperlink

- FI_NUM_SLASHES: +0.05057609417 – The variety of slashes within the URL is a rating issue.

- FI_ADV_PRONOUNS_PORTION: -0.001250755075 – The proportion of pronoun nouns on the web page.

- FI_TEXT_HEAD_SYN: -0.01291908335 – The presence of [query] phrases within the header, making an allowance for synonyms

- FI_PERCENT_FREQ_WORDS: -0.02021022114 – The proportion of the variety of phrases, which might be the 200 most frequent phrases of the language, from the variety of all phrases of the textual content.

- FI_YANDEX_ADV: -0.09426121965 – Getting extra particular with the distaste in the direction of adverts, Yandex penalizes pages with Yandex adverts.

- FI_AURA_DOC_LOG_SHARED: -0.09768630485 – The logarithm of the variety of shingles (areas of textual content) within the doc that aren’t distinctive.

- FI_AURA_DOC_LOG_AUTHOR: -0.09727752961 – The logarithm of the variety of shingles on which this proprietor of the doc is acknowledged because the writer.

- FI_CLASSIF_IS_SHOP: -0.1339319854 – Apparently, Yandex goes to present you much less love in case your web page is a retailer.

The first takeaway from reviewing these odd rankings elements and the array of these obtainable throughout the Yandex codebase is that there are numerous issues that might be a rating issue.

I believe that Google’s reported “200 alerts” are literally 200 lessons of sign the place every sign is a composite constructed of many different elements. In a lot the identical method that Google Analytics has dimensions with many metrics related, Google Search seemingly has lessons of rating alerts composed of many options.

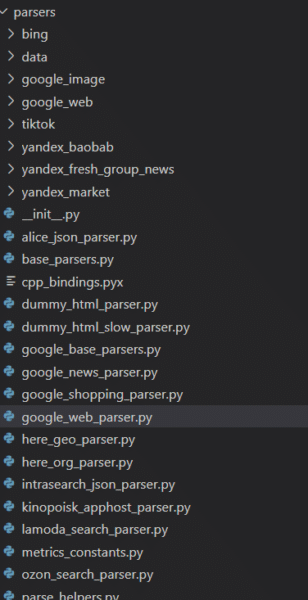

Yandex scrapes Google, Bing, YouTube and TikTok

The codebase additionally reveals that Yandex has many parsers for different web sites and their respective companies. To Westerners, essentially the most notable of these are those I’ve listed within the heading above. Moreover, Yandex has parsers for a wide range of companies that I used to be unfamiliar with in addition to these for its personal companies.

What is instantly evident, is that the parsers are function full. Each significant part of the Google SERP is extracted. Actually, anybody that is likely to be contemplating scraping any of those companies may do properly to assessment this code.

There may be different code that signifies Yandex is utilizing some Google information as a part of the DSSM calculations, however the 83 Google named rating elements themselves make it clear that Yandex has leaned on the Google’s outcomes fairly closely.

Clearly, Google would by no means pull the Bing transfer of copying one other search engine’s outcomes nor be reliant on one for core rating calculations.

Yandex has anti-Search engine optimisation higher bounds for some rating elements

315 rating elements have thresholds at which any computed worth past that signifies to the system that that function of the web page is over-optimized. 39 of those rating elements are a part of the initially weighted elements that will maintain a web page from being included within the preliminary postings listing. Yow will discover these within the spreadsheet I’ve linked to above by filtering for the Rank Coefficient and the Anti-Search engine optimisation column.

It’s not far-fetched conceptually to count on that each one fashionable engines like google set thresholds on sure elements that SEOs have traditionally abused akin to anchor textual content, CTR, or key phrase stuffing. As an example, Bing was mentioned to leverage the abusive utilization of the meta key phrases as a detrimental issue.

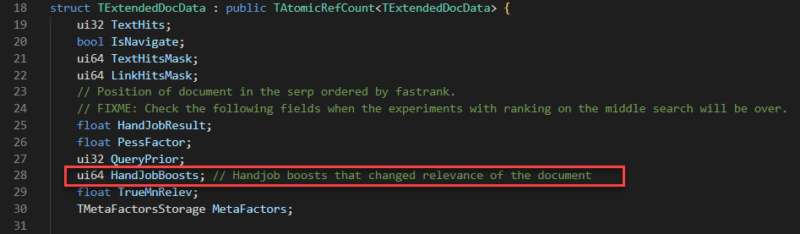

Yandex boosts “Very important Hosts”

Yandex has a collection of boosting mechanisms all through its codebase. These are synthetic enhancements to sure paperwork to make sure they rating greater when being thought-about for rating.

Beneath is a remark from the “boosting wizard” which means that smaller recordsdata profit greatest from the boosting algorithm.

There are a number of kinds of boosts; I’ve seen one increase associated to hyperlinks and I’ve additionally seen a collection of “HandJobBoosts” which I can solely assume is a bizarre translation of “guide” modifications.

One in every of these boosts I discovered significantly fascinating is said to “Very important Hosts.” The place a significant host could be any website specified. Particularly talked about within the variables is NEWS_AGENCY_RATING which leads me to imagine that Yandex provides a lift that biases its outcomes to sure information organizations.

With out entering into geopolitics, that is very totally different from Google in that they’ve been adamant about not introducing biases like this into their rating methods.

The construction of the doc server

The codebase reveals how paperwork are saved in Yandex’s doc server. That is useful in understanding {that a} search engine doesn’t merely make a replica of the web page and put it aside to its cache, it’s capturing varied options as metadata to then use within the downstream rankings course of.

The screenshot beneath highlights a subset of these options which might be significantly fascinating. Different recordsdata with SQL queries counsel that the doc server has nearer to 200 columns together with the DOM tree, sentence lengths, fetch time, a collection of dates, and antispam rating, redirect chain, and whether or not or not the doc is translated. Essentially the most full listing I’ve come throughout is in /robotic/rthub/yql/protos/web_page_item.proto.

What’s most fascinating within the subset right here is the variety of simhashes which might be employed. Simhashes are numeric representations of content material and engines like google use them for lightning quick comparability for the willpower of duplicate content material. There are numerous situations within the robotic archive that point out duplicate content material is explicitly demoted.

Additionally, as a part of the indexing course of, the codebase options TF-IDF, BM25, and BERT in its textual content processing pipeline. It’s not clear why all of those mechanisms exist within the code as a result of there may be some redundancy in utilizing all of them.

Hyperlink elements and prioritization

How Yandex handles hyperlink elements is especially fascinating as a result of they beforehand disabled their affect altogether. The codebase additionally reveals lots of details about hyperlink elements and the way hyperlinks are prioritized.

Yandex’s hyperlink spam calculator has 89 elements that it seems at. Something marked as SF_RESERVED is deprecated. The place offered, you’ll find the descriptions of those elements within the Google Sheet linked above.

Notably, Yandex has a bunch rank and a few scores that seem to dwell on long run after a website or web page develops a repute for spam.

One other factor Yandex does is assessment copy throughout a website and decide if there may be duplicate content material with these hyperlinks. This may be sitewide hyperlink placements, hyperlinks on duplicate pages, or just hyperlinks with the identical anchor textual content coming from the identical website.

This illustrates how trivial it’s to low cost a number of hyperlinks from the identical supply and clarifies how essential it’s to focus on extra distinctive hyperlinks from extra various sources.

What can we apply from Yandex to what we find out about Google?

Naturally, that is nonetheless the query on everybody’s thoughts. Whereas there are definitely many analogs between Yandex and Google, honestly, solely a Google Software program Engineer engaged on Search may definitively reply that query.

But, that’s the flawed query.

Actually, this code ought to assist us develop our eager about fashionable search. A lot of the collective understanding of search is constructed from what the Search engine optimisation neighborhood realized within the early 2000s by means of testing and from the mouths of search engineers when search was far much less opaque. That sadly has not stored up with the fast tempo of innovation.

Insights from the various options and elements of the Yandex leak ought to yield extra hypotheses of issues to check and think about for rating in Google. They need to additionally introduce extra issues that may be parsed and measured by Search engine optimisation crawling, hyperlink evaluation, and rating instruments.

As an example, a measure of the cosine similarity between queries and paperwork utilizing BERT embeddings might be useful to grasp versus competitor pages because it’s one thing that fashionable engines like google are themselves doing.

A lot in the way in which the AOL Search logs moved us from guessing the distribution of clicks on SERP, the Yandex codebase strikes us away from the summary to the concrete and our “it relies upon” statements could be higher certified.

To that finish, this codebase is a present that can carry on giving. It’s solely been a weekend and we’ve already gleaned some very compelling insights from this code.

I anticipate some formidable Search engine optimisation engineers with way more time on their palms will maintain digging and possibly even fill in sufficient of what’s lacking to compile this factor and get it working. I additionally imagine engineers on the totally different engines like google are additionally going by means of and parsing out improvements that they’ll study from and add to their methods.

Concurrently, Google legal professionals are most likely drafting aggressive stop and desist letters associated to all of the scraping.

I’m desirous to see the evolution of our area that’s pushed by the curious individuals who will maximize this chance.

However, hey, if getting insights from precise code will not be useful to you, you’re welcome to return to doing one thing extra essential like arguing about subdomains versus subdirectories.

Opinions expressed on this article are these of the visitor writer and never essentially Search Engine Land. Employees authors are listed right here.