JavaScript (JS) is extremely popular in the ecommerce world because it helps create a seamless and user-friendly experience for shoppers.

Take, for instance, loading items on category pages, or dynamically updating products on the site using JS.

While this is great news for ecommerce sites, JavaScript poses several challenges for SEO professionals.

Google is continuously working on improving its search engine, and a big part of its effort is dedicated to making sure its crawlers can access JavaScript content.

But ensuring that Google seamlessly crawls JS sites isn't easy.

In this post, I'll share everything you need to know about JS SEO for ecommerce and how you can improve your organic performance.

Let’s start!

How JavaScript Works For Ecommerce Sites

When building an ecommerce site, developers use HTML for content and organization, CSS for design, and JavaScript for interactivity and communication with backend servers.

JavaScript plays three prominent roles within ecommerce websites.

1. Adding Interactivity To A Web Page

The objective of adding interactivity is to allow users to see changes based on their actions, like scrolling or filling out forms.

For instance, a product image changes when the shopper hovers the mouse over it. Or hovering the mouse makes the image rotate 360 degrees, allowing the shopper to get a better view of the product.

All of this enhances user experience (UX) and helps shoppers decide on their purchases.

JavaScript adds such interactivity to sites, allowing marketers to engage visitors and drive sales.

2. Connecting To Backend Servers

JavaScript enables better backend integration using AJAX (Asynchronous JavaScript and XML).

It allows web applications to send and retrieve data from the server asynchronously while upholding UX.

In other words, the process doesn't interfere with the display or behavior of the page.

Without it, visitors would have to wait for the server to respond with a whole new page every time they wanted to load new content. This is annoying and can cause shoppers to leave the site.

So, JavaScript enables dynamic, backend-supported interactions, like updating an item and seeing the cart update right away.

Similarly, it powers the ability to drag and drop elements on a web page.
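To make the AJAX idea concrete, here is a minimal sketch of an "add to cart" call that talks to the server in the background with the standard `fetch()` API, so the page never reloads. The `/api/cart` endpoint, the JSON body, and the `{ itemCount }` response shape are hypothetical; your backend will differ. The `fetch` implementation is passed in as a parameter only so the function can also be exercised outside a browser.

```javascript
// Minimal sketch of an AJAX-style cart update (hypothetical endpoint and payload).
async function addToCart(productId, quantity, fetchImpl = globalThis.fetch) {
  // Send the update in the background; the current page stays on screen.
  const response = await fetchImpl("/api/cart", {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ productId, quantity }),
  });
  if (!response.ok) {
    throw new Error(`Cart update failed with status ${response.status}`);
  }
  // Assumed response shape: { itemCount: number }
  const cart = await response.json();
  return cart.itemCount;
}
```

In the browser, you might call `addToCart("sku-123", 1).then((count) => { badge.textContent = String(count); })`, where `badge` is a hypothetical cart-counter element, to refresh the cart icon in place.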

3. Web Tracking And Analytics

JavaScript offers real-time tracking of page views and heatmaps that tell you how far down people are reading your content.

For instance, it can tell you where their mouse is or what they clicked (click tracking).

This is how JS powers the tracking of user behavior and interaction on webpages.

How Do Search Bots Process JS?

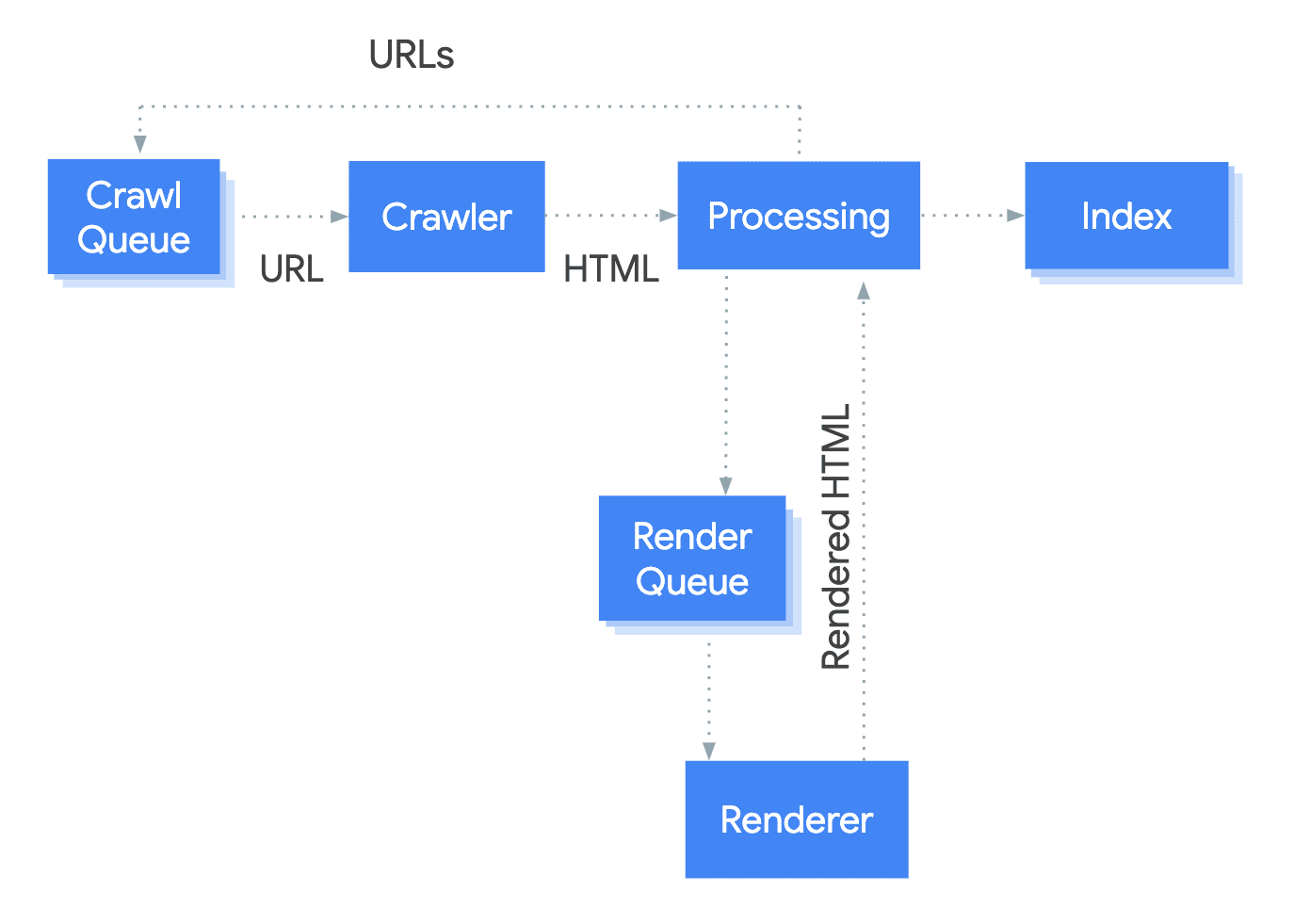

Google processes JS in three phases: crawling, rendering, and indexing.

Image from Google Search Central, September 2022

As you can see in this image, Google's bots put pages in the queue for crawling and rendering. During this phase, the bots scan the pages to assess new content.

When Googlebot takes a URL from the crawl queue, it first checks your robots.txt file to see whether you've allowed Google to crawl the page.

If the URL is disallowed, the bots skip it and don't send an HTTP request for it.

In the second stage, rendering, the HTML, CSS, and JavaScript files are processed and transformed into a format that Google can easily index.

In the final stage, indexing, the rendered content is added to Google's index, allowing it to appear in the SERPs.

Common JavaScript SEO Challenges With Ecommerce Sites

Crawling JavaScript sites is far more complex than crawling traditional HTML sites.

The process is much quicker for the latter.

Check out this quick comparison.

| Step | Traditional HTML Site Crawling | JavaScript Crawling |
|---|---|---|
| 1 | Bots download the HTML file | Bots download the HTML file |
| 2 | They extract the links to add them to their crawl queue | They find no links in the source code because the links are only injected after JS execution |
| 3 | They download the CSS files | Bots download CSS and JS files |
| 4 | They send the downloaded resources to Caffeine, Google's indexer | Bots use the Google Web Rendering Service (WRS) to parse and execute JS |
| 5 | Voila! The pages are indexed | WRS fetches data from the database and external APIs |
| 6 | | Content is indexed |
| 7 | | Bots can finally discover new links and add them to the crawl queue |

Thus, with JS-rich ecommerce sites, Google finds it tough to index content or discover links before the page is rendered.

In fact, in a webinar on how to migrate a website to JavaScript, Sofiia Vatulyak, a renowned JS SEO expert, shared:

"Though JavaScript offers several useful features and saves resources for the web server, not all search engines can process it. Google needs time to render and index JS pages. Thus, implementing JS while upholding SEO is tricky."

Here are the top JS SEO challenges ecommerce marketers should be aware of.

Limited Crawl Budget

Ecommerce websites often have a massive (and growing!) number of pages that are poorly organized.

These sites have extensive crawl budget requirements, and in the case of JS websites, the crawling process is lengthy.

Also, outdated content, such as orphan and zombie pages, can waste a large share of the crawl budget.

Limited Render Budget

As mentioned earlier, to see the content loaded by JS in the browser, search bots have to render it. But rendering at scale demands time and computational resources.

In other words, like a crawl budget, every website has a render budget. If that budget is spent, the bot will leave, delaying the discovery of content and consuming extra resources.

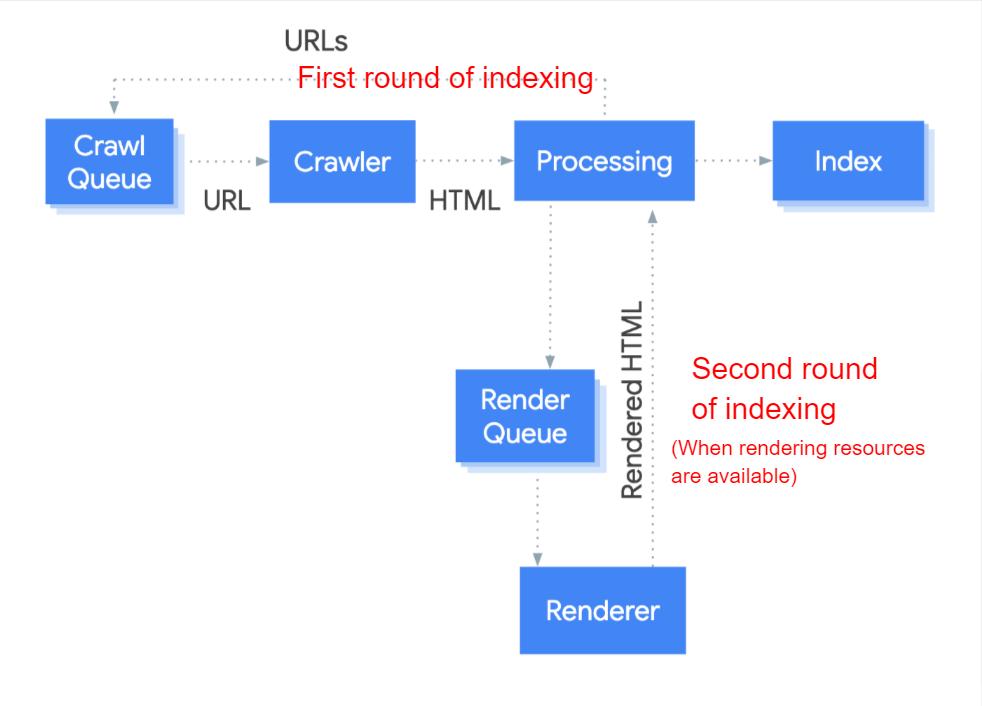

Google renders JS content in the second round of indexing.

That's why it's important to include your content in the HTML itself, so Google can access it.

Image from Google Search Central, September 2022

Use Inspect element on your page and search for some of the content. If you can't find it there, search engines may have trouble accessing it.
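Inspect element shows the DOM after JavaScript has run, so a complementary check is to look at the raw HTML the server sends (what View Page Source shows). The sketch below, using hypothetical sample markup, tests whether a phrase is present in that raw HTML. It strips tags with regular expressions, which is a rough approximation rather than a real HTML parser (entities such as `&amp;` aren't decoded, for example). In Node.js 18+ you could obtain the raw HTML with `await (await fetch(url)).text()`.

```javascript
// Sketch: is a phrase present in the raw HTML (before any JavaScript runs)?
// Regex tag stripping is a rough approximation, not a real HTML parser.
function textFromRawHtml(html) {
  return html
    .replace(/<script[\s\S]*?<\/script>/gi, " ") // script code isn't visible content
    .replace(/<style[\s\S]*?<\/style>/gi, " ")
    .replace(/<[^>]+>/g, " ") // drop the remaining tags
    .replace(/\s+/g, " ")
    .trim();
}

function phraseInRawHtml(html, phrase) {
  const normalize = (s) => s.replace(/\s+/g, " ").trim().toLowerCase();
  return normalize(textFromRawHtml(html)).includes(normalize(phrase));
}

// Hypothetical markup: a server-rendered page vs. a client-side rendered shell.
const ssrHtml = "<main><h1>Alfani Essential Capri</h1><p>Pull-on with tummy control</p></main>";
const csrHtml = '<div id="root"></div><script src="/bundle.js"></script>';

console.log(phraseInRawHtml(ssrHtml, "pull-on with tummy control")); // true
console.log(phraseInRawHtml(csrHtml, "pull-on with tummy control")); // false
```

If a phrase shows up in Inspect element but not in the raw HTML, Google can only see it after rendering, which is exactly where render budget limits come into play.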

Troubleshooting Issues For JavaScript Websites Is Tough

Most JS websites face crawlability and obtainability issues.

For instance, JS content limits a bot's ability to navigate pages. This affects its indexability.

Similarly, bots can't figure out the context of the content on a JS page, thus limiting their ability to rank the page for specific keywords.

Such issues make it tough for ecommerce marketers to determine the rendering status of their web pages.

In such a case, using an advanced crawler or log analyzer can help.

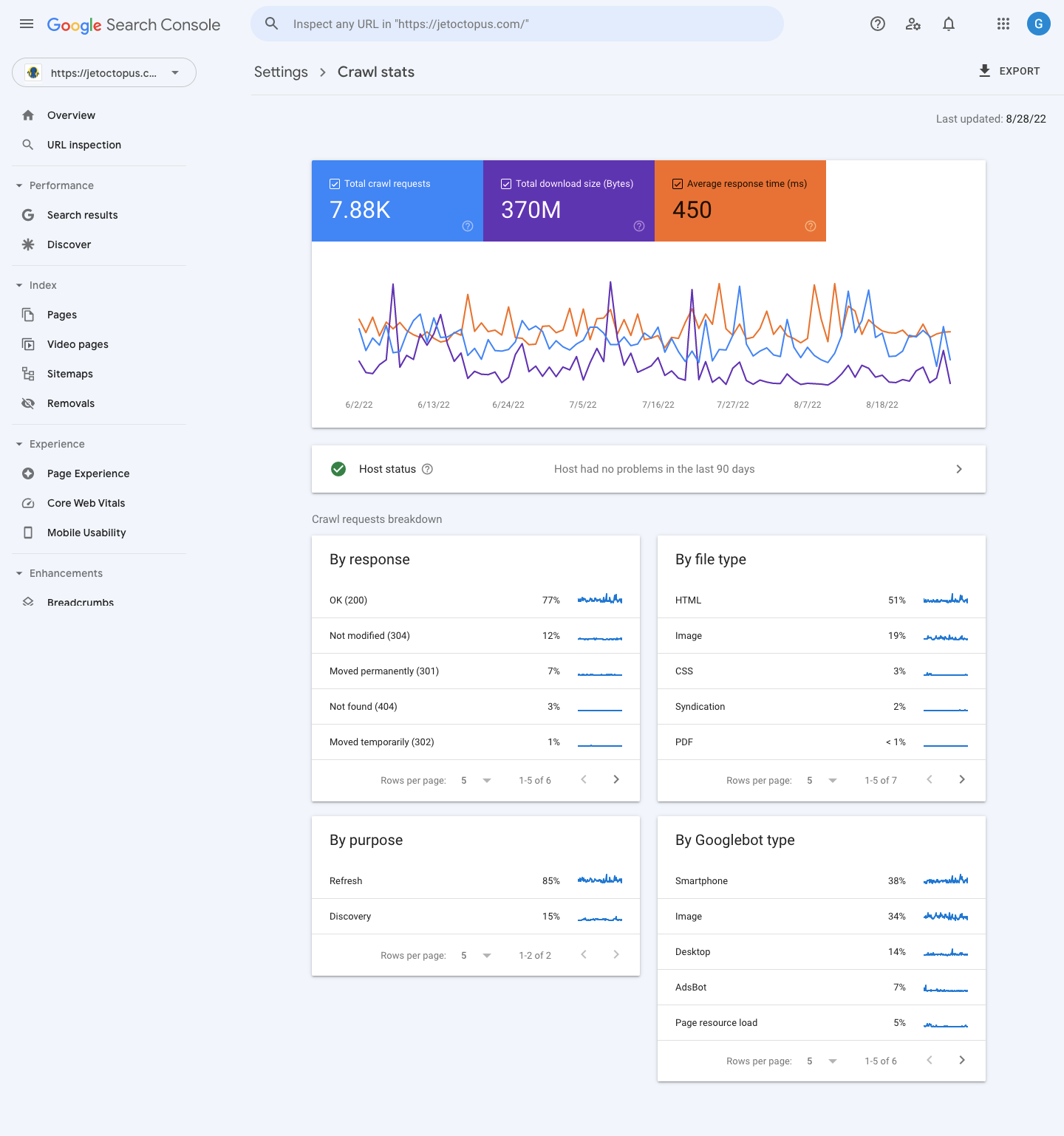

Tools like Semrush Log File Analyzer, Google Search Console Crawl Stats, and JetOctopus, among others, offer a full-suite log management solution, allowing webmasters to better understand how search bots interact with web pages.

JetOctopus, for instance, has JS rendering functionality.

Check out this GIF that shows how the tool views JS pages as a Google bot.

Screenshot from JetOctopus, September 2022

Similarly, Google Search Console Crawl Stats shares a useful overview of your site's crawl performance.

Screenshot from Google Search Console Crawl Stats, September 2022

The crawl stats are sorted into:

- Kilobytes downloaded per day shows the number of kilobytes bots download when they visit the website each day.

- Pages crawled per day shows the number of pages the bots crawl per day (low, average, or high).

- Time spent downloading a page tells you the amount of time bots take to make an HTTP request for the crawl. Less time taken means faster crawling and indexing.
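If you have access to your server logs, you can approximate similar per-day numbers yourself. This is a minimal sketch, assuming logs in the common Apache/Nginx "combined" format: it counts requests whose user agent mentions Googlebot, sums the bytes sent, and tallies JS file requests and 4xx/5xx responses. User-agent strings can be spoofed, so a real audit should also verify Googlebot by reverse DNS lookup, and Search Console's own Crawl Stats remain the authoritative view.

```javascript
// Sketch: approximate Crawl Stats-style numbers from an access log in the
// "combined" format. Assumes one request per line; unmatched lines are skipped.
const COMBINED_LOG_LINE =
  /^(\S+) \S+ \S+ \[([^:\]]+):[^\]]*\] "(\S+) (\S+) [^"]*" (\d{3}) (\d+|-) "[^"]*" "([^"]*)"/;

function crawlStatsByDay(logLines) {
  const stats = {};
  for (const line of logLines) {
    const match = COMBINED_LOG_LINE.exec(line);
    if (!match) continue;
    const [, , day, , path, status, bytes, userAgent] = match;
    // User agents can be faked; verify real Googlebot IPs separately.
    if (!/googlebot/i.test(userAgent)) continue;
    if (!stats[day]) {
      stats[day] = { requests: 0, kilobytes: 0, jsRequests: 0, errors: 0 };
    }
    const entry = stats[day];
    entry.requests += 1;
    entry.kilobytes += bytes === "-" ? 0 : Number(bytes) / 1024;
    if (/\.js(\?|$)/i.test(path)) entry.jsRequests += 1; // rendering resources
    if (Number(status) >= 400) entry.errors += 1; // failed fetches waste crawl budget
  }
  return stats;
}
```

Pass it the lines of a log file (for example, `fs.readFileSync("access.log", "utf8").split("\n")` in Node.js) and compare the per-day totals with what Search Console reports.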

Client-Side Rendering By Default

Ecommerce sites built with JS frameworks like React, Angular, or Vue are, by default, set to client-side rendering (CSR).

With this setting, the HTML the server sends is mostly an empty shell, so bots can't see what's on the page until they render it, which causes rendering and indexing issues.

Large And Unoptimized JS Files

Large, unoptimized JS files can prevent critical website resources from loading quickly. This negatively affects UX and SEO.

Top Optimization Tactics For JavaScript Ecommerce Sites

1. Check If Your JavaScript Has SEO Issues

Here are three quick tests to run on different page templates of your site, namely the homepage, category or product listing pages, product pages, blog pages, and supplementary pages.

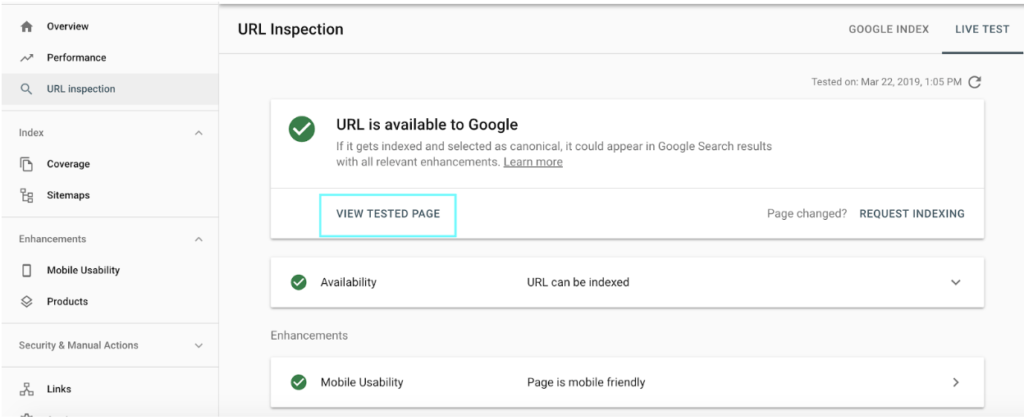

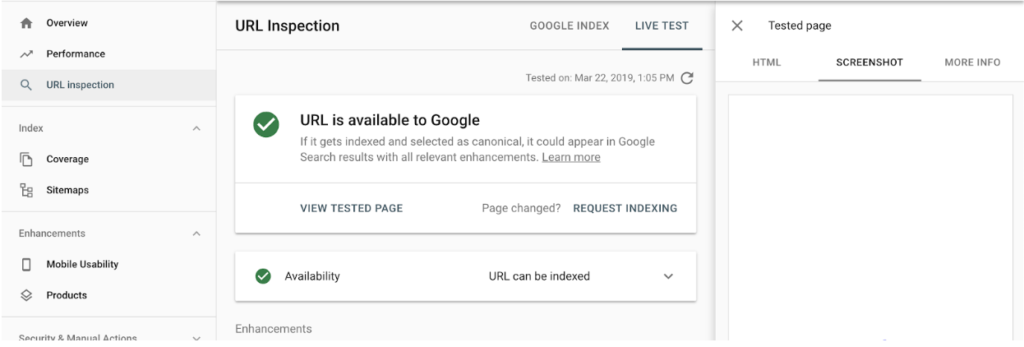

URL Inspection Tool

Open the URL Inspection tool in Google Search Console.

Screenshot from Google Search Console, September 2022

Enter the URL you want to test.

Screenshot from Google Search Console, September 2022

Next, press View Tested Page and move to the screenshot of the page. If this section is blank (like in this screenshot), Google has issues rendering this page.

Screenshot from Google Search Console, September 2022

Repeat these steps for all the relevant ecommerce page templates shared earlier.

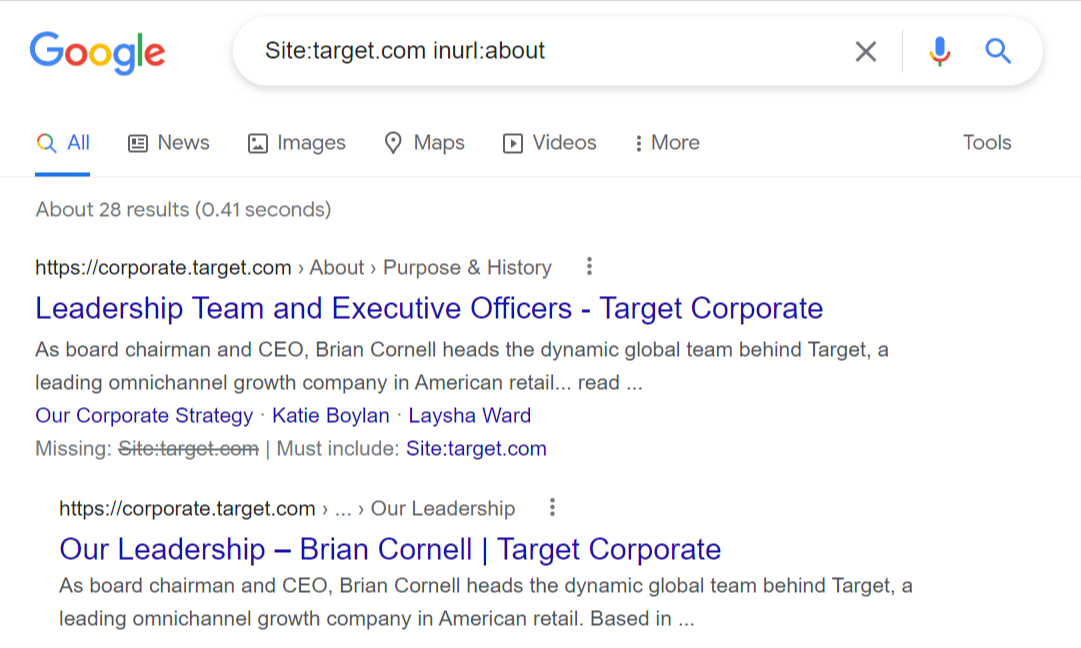

Run A Google Search

Running a site search will help you determine if the URL is in Google's index.

First, check the noindex and canonical tags. You want to make sure that your canonicals are self-referencing and there's no noindex tag on the page.
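You can run a first pass of this check in code. The sketch below reads the robots meta tag and the canonical link from a page's HTML and reports whether the page is noindexed and whether its canonical points back to itself. It is regex-based and assumes attributes appear in the usual order (`name` before `content`, `rel` before `href`); it doesn't look at the `X-Robots-Tag` HTTP header, and for JS sites you'd want to run it on both the raw and the rendered HTML, since scripts can change these tags.

```javascript
// Sketch: inspect robots meta and canonical tags in a page's HTML.
function checkIndexingTags(html, pageUrl) {
  const robotsMeta = /<meta[^>]*\sname=["']robots["'][^>]*\scontent=["']([^"']*)["']/i.exec(html);
  const canonicalLink = /<link[^>]*\srel=["']canonical["'][^>]*\shref=["']([^"']*)["']/i.exec(html);
  const noindex = robotsMeta ? /\bnoindex\b/i.test(robotsMeta[1]) : false;
  // Resolve relative canonicals against the page URL before comparing.
  const canonicalUrl = canonicalLink ? new URL(canonicalLink[1], pageUrl).href : null;
  return {
    noindex,
    canonicalUrl,
    selfReferencing: canonicalUrl === new URL(pageUrl).href,
  };
}
```

A healthy product page should come back with `noindex: false` and `selfReferencing: true`.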

Next, go to Google search and enter `site:yourdomain.com inurl:your-url`

Screenshot from search for [Site:target.com inurl:], Google, September 2022

This screenshot shows that Target's "About Us" page is indexed by Google.

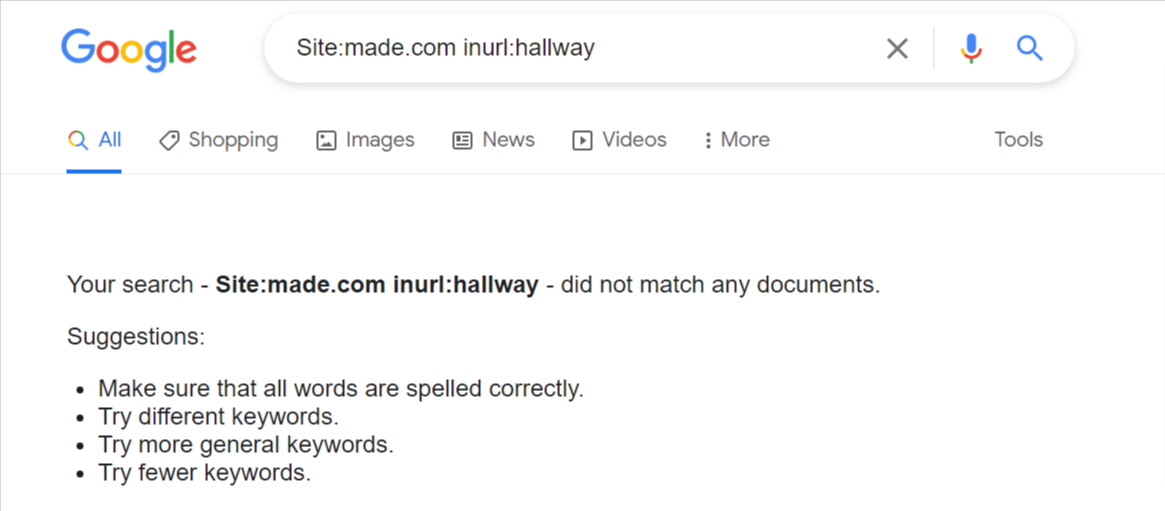

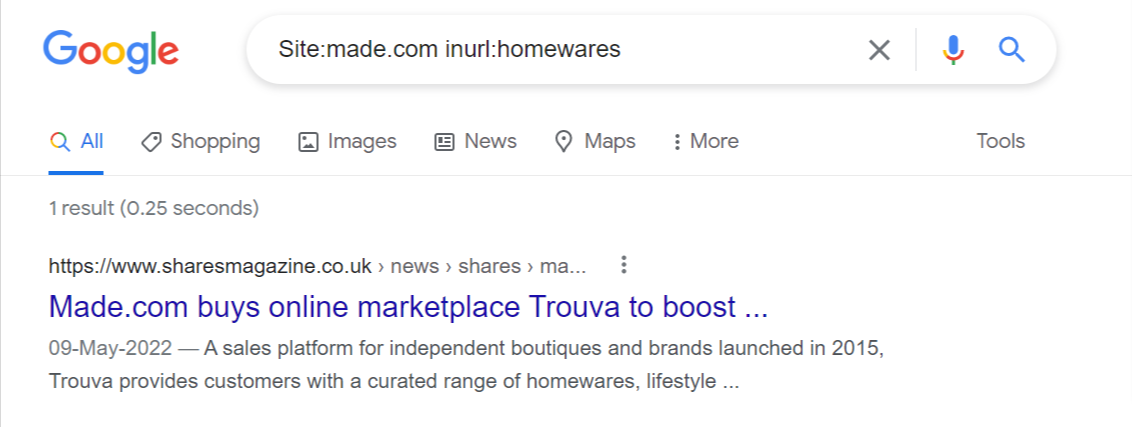

If there's an issue with your site's JS, you'll either not see this result, or you'll get a result like the ones below, where Google doesn't show any meta information or anything readable.

Screenshot from search for [Site:made.com inurl:hallway], Google, September 2022

Screenshot from search for [Site:made.com inurl:homewares], Google, September 2022

Go For Content Search

At times, Google may index pages, but the content is unreadable. This final test will help you assess if Google can read your content.

Gather a bunch of content from your page templates and enter it on Google to see the results.

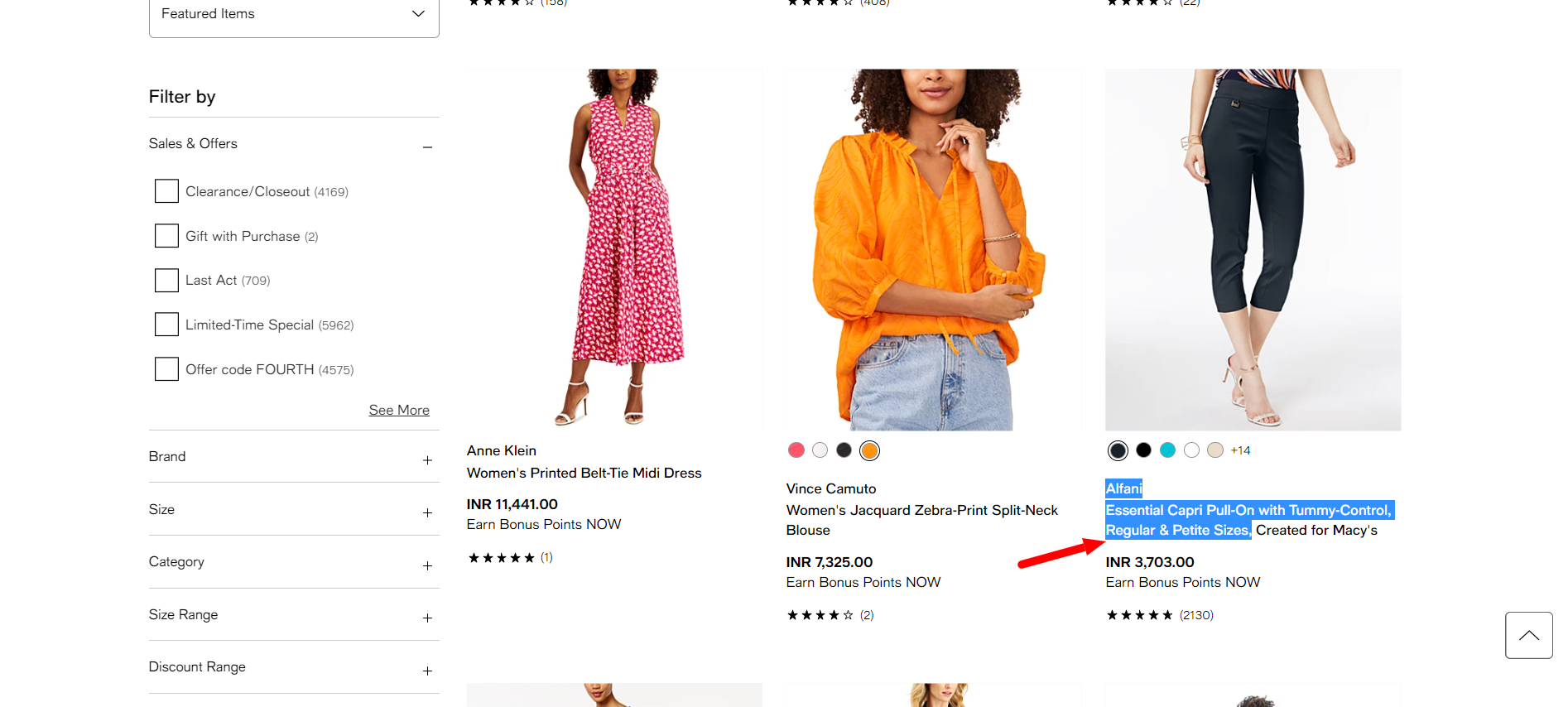

Let’s take some content material from Macy’s.

Screenshot from Macy’s, September 2022

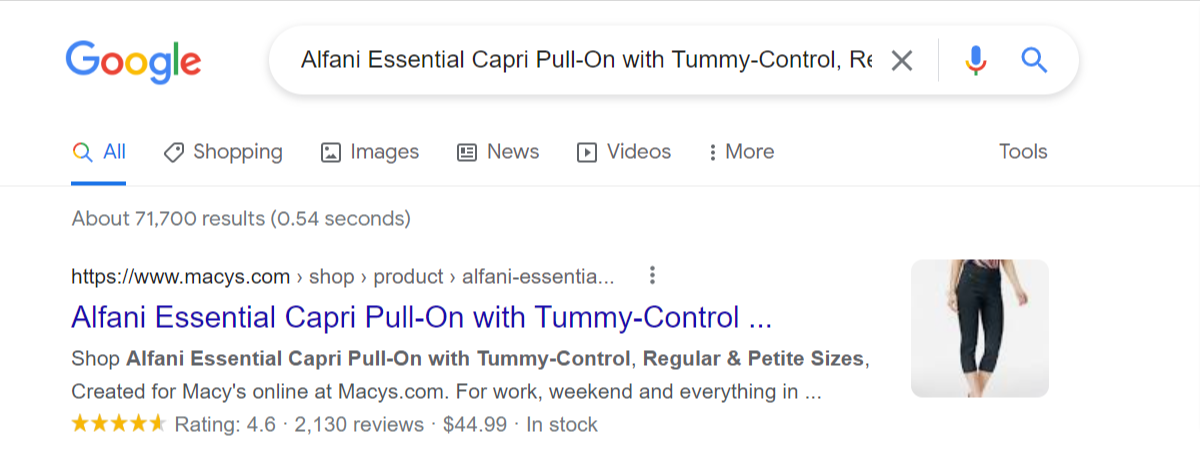

Screenshot from search for [alfani essential capri pull-on with tummy control], Google, September 2022

No problems here!

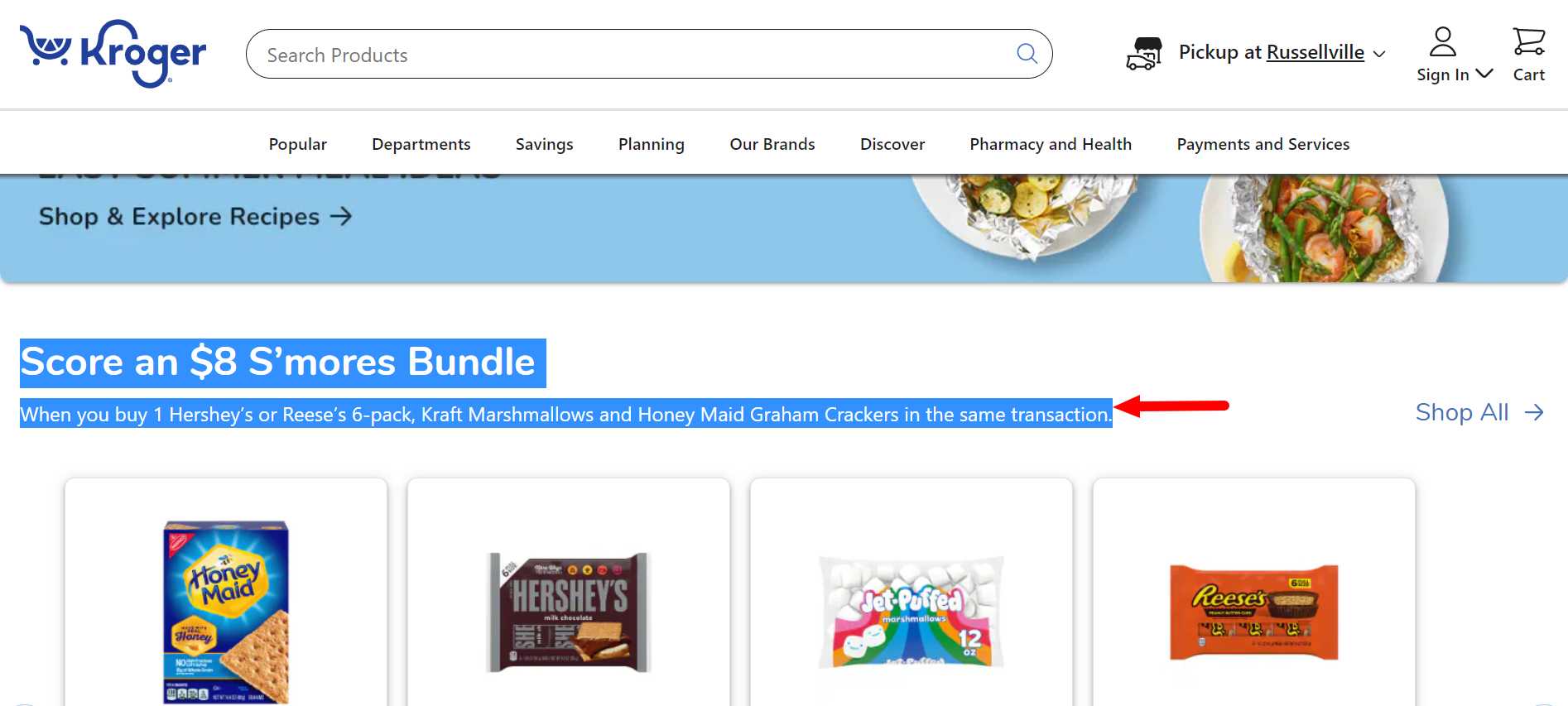

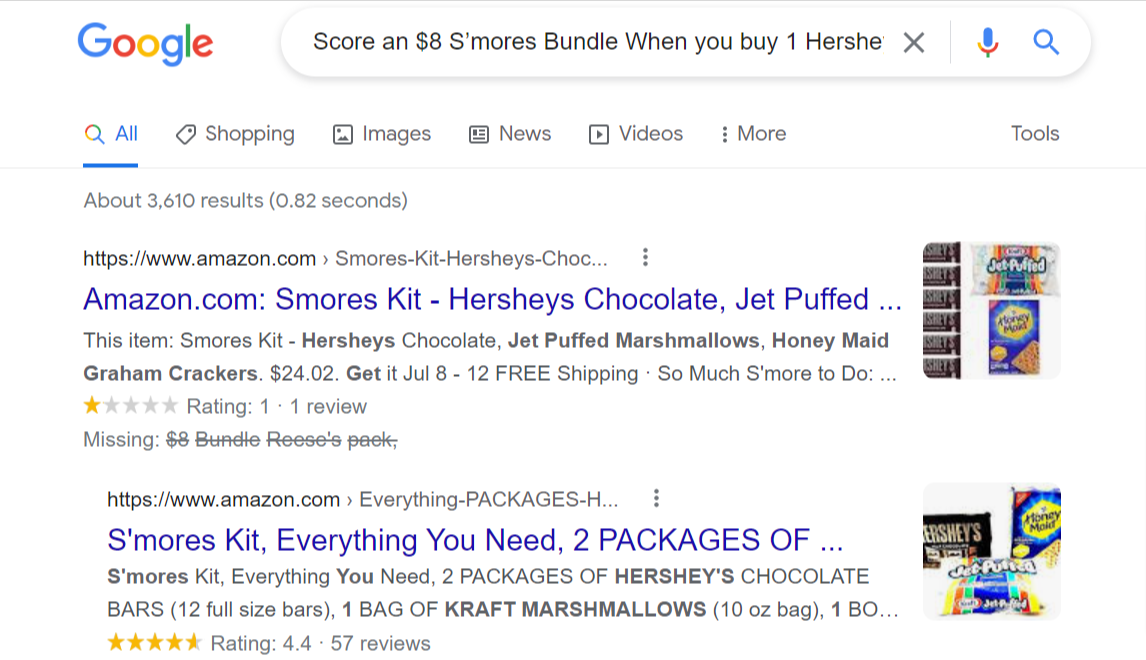

But check out what happens with this content on Kroger. It's a nightmare!

Screenshot from Kroger, September 2022

Screenshot from search for [score an $8 s'mores bunder when you buy 1 Hershey], Google, September 2022

Though spotting JavaScript SEO problems is more complex than this, these three tests will help you quickly assess if your ecommerce JavaScript has SEO issues.

Follow these tests with a detailed JS website audit using an SEO crawler that can help identify whether your website failed when executing JS and whether some code isn't working properly.

For instance, a few SEO crawlers have a set of features that can help you understand this in detail:

- The "JavaScript performance" report offers a list of all the errors.

- The "browser performance events" chart shows the time of lifecycle events when loading JS pages. It helps you identify the page elements that are the slowest to load.

- The "load time distribution" report shows which pages are fast or slow. If you click on these data columns, you can further analyze the slow pages in detail.

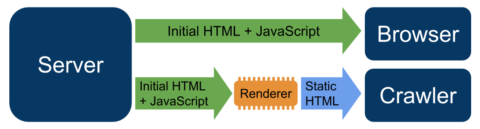

2. Implement Dynamic Rendering

How your website renders code affects how Google will index your JS content. Hence, you need to know how JavaScript rendering occurs.

Server-Side Rendering

With this approach, pages are rendered on the server, and the fully rendered page is sent to the crawler or the browser (client). Crawling and indexing work much as they do for plain HTML pages.

But implementing server-side rendering (SSR) is often challenging for developers and can increase server load.

Further, the Time to First Byte (TTFB) can be slower because the server renders pages on the fly.

One thing developers should keep in mind when implementing SSR is to avoid using functions that operate directly on the DOM, since there is no browser DOM on the server.

Client-Side Rendering

Here, the page is rendered by the client (the browser), with JavaScript building the DOM. This causes several issues when search bots attempt to crawl, render, and index content, because they have to execute the JavaScript before they can see it.
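The difference between the two approaches shows up in what a crawler receives before any JavaScript runs. In this sketch, with hypothetical product data, the server-side function builds the complete category markup as a string (and deliberately touches no browser-only objects such as `document` or `window`, which don't exist on the server), while the client-side version returns the kind of empty shell a default CSR app sends. Real frameworks ship their own SSR tooling, such as React's `renderToString`; this only illustrates the output.

```javascript
// Hypothetical product data for a category page.
const products = [
  { name: "Trail Runner Sneaker", price: 89.99, url: "/products/trail-runner" },
  { name: "Canvas Slip-On", price: 49.5, url: "/products/canvas-slip-on" },
];

// Escape text before placing it inside HTML.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Server-side rendering: the full markup is built as a string on the server,
// using only plain data (no document/window, which don't exist there).
function renderCategoryPageSSR(items) {
  const listItems = items
    .map(
      (p) =>
        `<li><a href="${escapeHtml(p.url)}">${escapeHtml(p.name)}</a> $${p.price.toFixed(2)}</li>`
    )
    .join("");
  return `<!doctype html><html><body><ul id="products">${listItems}</ul></body></html>`;
}

// Client-side rendering: the server sends an empty shell; products only appear
// after /bundle.js runs in a browser (or in Google's renderer).
function renderCategoryPageCSR() {
  return '<!doctype html><html><body><div id="root"></div><script src="/bundle.js"></script></body></html>';
}

console.log(renderCategoryPageSSR(products).includes("Trail Runner Sneaker")); // true
console.log(renderCategoryPageCSR().includes("Trail Runner Sneaker")); // false
```

Note that the SSR output also contains real `<a href>` links to each product, so crawlers can discover them without rendering anything.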

A viable alternative to SSR and CSR is dynamic rendering, which switches between client-side and server-side rendered content for specific user agents.

It lets developers serve users the regular version of the site, with content generated by JS in the browser.

However, it serves only a static, prerendered version to bots. Google officially supports dynamic rendering, although its documentation describes it as a workaround rather than a long-term solution.

Image from Google Search Central, September 2022

To deploy dynamic rendering, you can use tools like Prerender.io or Puppeteer.

These can help you serve a static HTML version of your JavaScript website to crawlers without any negative impact on CX.

Dynamic rendering is a great solution for ecommerce websites that hold lots of frequently changing content or rely on social media sharing (for example, pages with embeddable social media walls or widgets).
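At its core, dynamic rendering is a routing decision based on the user agent. The sketch below shows only that decision; the list of crawler patterns is illustrative rather than exhaustive, and where the prerendered snapshot comes from (Puppeteer, Prerender.io, or a cache) is left out. Whatever you serve bots should contain the same content users see; otherwise the setup risks being treated as cloaking.

```javascript
// Illustrative (not exhaustive) crawler patterns, including social preview bots.
const BOT_USER_AGENT_PATTERNS = [
  /googlebot/i,
  /bingbot/i,
  /yandex/i,
  /baiduspider/i,
  /duckduckbot/i,
  /facebookexternalhit/i,
  /twitterbot/i,
  /linkedinbot/i,
];

function isKnownBot(userAgent = "") {
  return BOT_USER_AGENT_PATTERNS.some((pattern) => pattern.test(userAgent));
}

// Decide which version of a page to serve. In a real server this would run in
// middleware: "prerendered" means returning a static HTML snapshot, while
// "client-side" means returning the normal JavaScript app.
function chooseRendering(userAgent) {
  return isKnownBot(userAgent) ? "prerendered" : "client-side";
}

console.log(chooseRendering("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)")); // prerendered
console.log(chooseRendering("Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/105.0 Safari/537.36")); // client-side
```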

3. Route Your URLs Properly

JavaScript frameworks use a router to map clean URLs. Hence, it's important to update page URLs when updating content.

For instance, JS frameworks like Angular and Vue can generate URLs with a hash (#), like www.example.com/#/about-us

Google generally ignores everything after the #, so such routes aren't indexed as separate pages. That's why it isn't advisable to use # in your URLs.

Instead, use static-looking URLs like http://www.example.com/about-us
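The standard `URL` API, available in both browsers and Node.js, makes the problem with hash routes easy to see: the fragment isn't part of the path sent to the server, so once it's dropped, every hash route collapses into the homepage. The `crawlableKey` helper below is a hypothetical name used only for this illustration.

```javascript
// Hypothetical helper: the URL a crawler effectively sees once the fragment
// (everything after "#") is ignored.
function crawlableKey(href) {
  const url = new URL(href);
  url.hash = "";
  return url.href;
}

const hashRoutes = ["https://www.example.com/#/about-us", "https://www.example.com/#/shoes"];
const historyRoutes = ["https://www.example.com/about-us", "https://www.example.com/shoes"];

console.log(new Set(hashRoutes.map(crawlableKey)).size); // 1 (both collapse to the homepage)
console.log(new Set(historyRoutes.map(crawlableKey)).size); // 2 (two distinct URLs)
```

In the frameworks mentioned above, the usual fix is switching the router to history-based URLs: in Vue Router 4, for example, `createWebHistory()` instead of `createWebHashHistory()`, and in Angular, keeping the default `PathLocationStrategy` rather than `HashLocationStrategy`. The server then needs to return the app for those paths.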

4. Adhere To The Internal Linking Protocol

Internal links help Google crawl the site efficiently and highlight the important pages.

A poor linking structure can be harmful to SEO, especially for JS-heavy sites.

One common issue we've encountered is when ecommerce sites use JS for links that Google can't crawl, such as onclick or button-type links.

By contrast, a link like this one can still be crawled, because it keeps a real href alongside the onclick handler:

`<a href="/important-link" onclick="changePage('important-link')">Crawl this</a>`

If you want Google bots to discover and follow your links, make sure they're plain HTML.

Google recommends interlinking pages using HTML anchor tags with href attributes and asks webmasters to avoid relying on JS event handlers for navigation.
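A quick way to audit this is to list the anchors on a page and flag the ones a crawler can't follow. The sketch below applies a rule of thumb based on Google's link guidance: an `<a>` element with an `href` that resolves to a real URL is crawlable, while a missing or empty `href`, a `javascript:` URL, or a link to a `#fragment` is not. Elements such as `<span onclick=...>` aren't anchors at all, so they never show up as links. It's regex-based, so treat the output as a rough aid.

```javascript
// Sketch: list <a> tags in an HTML string and flag which ones crawlers can follow.
function auditLinks(html, baseUrl) {
  const anchorTags = html.match(/<a\b[^>]*>/gi) || [];
  return anchorTags.map((tag) => {
    // Accept href="..." or href='...' (preceded by whitespace, so data-href is ignored).
    const hrefMatch = /\shref\s*=\s*(?:"([^"]*)"|'([^']*)')/i.exec(tag);
    const href = hrefMatch ? (hrefMatch[1] ?? hrefMatch[2]).trim() : null;
    const crawlable =
      href !== null &&
      href !== "" &&
      !/^javascript:/i.test(href) &&
      !href.startsWith("#");
    return { tag, href, crawlable, resolved: crawlable ? new URL(href, baseUrl).href : null };
  });
}

const sample =
  '<a href="/important-link" onclick="changePage(\'important-link\')">Crawl this</a>' +
  '<a onclick="changePage(\'sale\')">Sale</a>';
for (const link of auditLinks(sample, "https://www.example.com/")) {
  console.log(link.crawlable, link.resolved);
}
// true https://www.example.com/important-link
// false null
```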

5. Use Pagination

Pagination is important for JS-rich ecommerce websites with thousands of products, which retailers often spread across several pages for better UX.

Allowing users to scroll infinitely may be good for UX, but it isn't necessarily SEO-friendly. This is because bots don't interact with such pages and can't trigger events to load more content.

Eventually, Google will reach a limit (stop scrolling) and leave. So, most of your content gets ignored, resulting in a poor ranking.

Make sure you use `<a href>` links to allow Google to see the second page of pagination.

For instance, use this:

`<a href="https://example.com/sneakers/">`
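If your listing pages are generated from templates, the pagination links can be produced server-side as ordinary anchors. This sketch assumes a hypothetical `?page=N` query parameter, with page 1 served at the bare category path; use whatever URL pattern your site already has. You can still offer infinite scroll to shoppers on top of this, as long as each chunk of products is also reachable at its own paginated URL.

```javascript
// Sketch: render pagination as plain <a href> links so crawlers can reach
// page 2, 3, ... without scrolling or clicking. The "?page=N" pattern is hypothetical.
function paginationLinks(basePath, currentPage, totalPages) {
  const hrefFor = (page) => (page === 1 ? basePath : `${basePath}?page=${page}`);
  const links = [];
  if (currentPage > 1) {
    links.push(`<a href="${hrefFor(currentPage - 1)}">Previous</a>`);
  }
  for (let page = 1; page <= totalPages; page += 1) {
    links.push(
      page === currentPage
        ? `<span aria-current="page">${page}</span>` // current page needs no link
        : `<a href="${hrefFor(page)}">${page}</a>`
    );
  }
  if (currentPage < totalPages) {
    links.push(`<a href="${hrefFor(currentPage + 1)}">Next</a>`);
  }
  return links.join(" ");
}

console.log(paginationLinks("/sneakers/", 1, 3));
// <span aria-current="page">1</span> <a href="/sneakers/?page=2">2</a> <a href="/sneakers/?page=3">3</a> <a href="/sneakers/?page=2">Next</a>
```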

6. Lazy Load Images

Though Google supports lazy loading, it doesn't scroll through content when visiting a page.

Instead, it resizes the page's virtual viewport, making it longer during the crawling process. And because the "scroll" event listener isn't triggered, content that depends on it isn't rendered.

Thus, if you have images below the fold, like most ecommerce websites, it's important to lazy load them in a way that doesn't depend on scroll events (for example, with the native loading="lazy" attribute or the IntersectionObserver API), allowing Google to see all your content.
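For images that are already in your HTML, the simplest scroll-independent option is the browser's native `loading="lazy"` attribute. The sketch below adds it to `<img>` tags in server-generated markup, leaving the first few images (assumed to be above the fold) to load eagerly and skipping any tag that already sets `loading`. The `eagerCount` default of 2 is an arbitrary assumption, and the regex approach is simplified; in a real template you'd set the attribute where the tag is rendered.

```javascript
// Sketch: add native lazy loading to <img> tags in server-generated HTML.
// The first `eagerCount` images (assumed above the fold) stay eager, and tags
// that already set a loading attribute are left untouched.
function addNativeLazyLoading(html, eagerCount = 2) {
  let seen = 0;
  return html.replace(/<img\b([^>]*?)(\s*\/?)>/gi, (tag, attrs, selfClose) => {
    seen += 1;
    if (seen <= eagerCount || /\sloading\s*=/i.test(attrs)) {
      return tag;
    }
    return `<img${attrs} loading="lazy"${selfClose}>`;
  });
}

console.log(addNativeLazyLoading('<img src="/hero.jpg"><img src="/shoe-2.jpg" alt="Shoe">', 1));
// <img src="/hero.jpg"><img src="/shoe-2.jpg" alt="Shoe" loading="lazy">
```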

7. Allow Bots To Crawl JS

This may seem obvious, but on several occasions, we've seen ecommerce sites accidentally block JavaScript (.js) files from being crawled.

This causes JS SEO issues, as the bots won't be able to render and index that code.

Check your robots.txt file to see if the JS files are open and accessible for crawling.
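To spot-check whether a given JS file is blocked, you can evaluate your robots.txt rules against its path. The sketch below is a simplified matcher loosely following Google's documented rules: it picks the group for the most specific matching user agent (falling back to `*`), supports `*` and `$` in paths, and lets the longest matching rule win, with ties going to Allow. It doesn't merge multiple groups that name the same agent or handle other edge cases, so for a definitive answer use Search Console or Google's open-source robots.txt parser.

```javascript
// Simplified robots.txt matcher for spot checks (not a complete parser).
function parseRobotsTxt(text) {
  const groups = [];
  let current = null;
  let lastWasAgent = false;
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.replace(/#.*$/, "").trim(); // drop comments
    const sep = line.indexOf(":");
    if (sep === -1) continue;
    const field = line.slice(0, sep).trim().toLowerCase();
    const value = line.slice(sep + 1).trim();
    if (field === "user-agent") {
      // Consecutive User-agent lines share one group of rules.
      if (!lastWasAgent) {
        current = { agents: [], rules: [] };
        groups.push(current);
      }
      current.agents.push(value.toLowerCase());
      lastWasAgent = true;
      continue;
    }
    lastWasAgent = false;
    if ((field === "allow" || field === "disallow") && current && value !== "") {
      current.rules.push({ allow: field === "allow", path: value });
    }
  }
  return groups;
}

// Translate a robots.txt path pattern ("*" wildcard, "$" end anchor) to a RegExp.
function patternToRegExp(pattern) {
  const anchored = pattern.endsWith("$");
  const body = (anchored ? pattern.slice(0, -1) : pattern)
    .split("*")
    .map((part) => part.replace(/[.+?^${}()|[\]\\]/g, "\\$&"))
    .join(".*");
  return new RegExp("^" + body + (anchored ? "$" : ""));
}

function isAllowed(robotsTxt, crawlerName, path) {
  const groups = parseRobotsTxt(robotsTxt);
  const name = crawlerName.toLowerCase();
  // Prefer the group whose agent token is the longest match for this crawler.
  let group = null;
  let bestLength = -1;
  for (const candidate of groups) {
    for (const agent of candidate.agents) {
      if (agent && agent !== "*" && name.includes(agent) && agent.length > bestLength) {
        group = candidate;
        bestLength = agent.length;
      }
    }
  }
  if (!group) group = groups.find((g) => g.agents.includes("*")) || null;
  if (!group) return true; // no rules apply to this crawler

  // Longest matching rule wins; on a tie, Allow beats Disallow.
  let verdict = { allow: true, length: -1 };
  for (const rule of group.rules) {
    if (!patternToRegExp(rule.path).test(path)) continue;
    const length = rule.path.length;
    if (length > verdict.length || (length === verdict.length && rule.allow)) {
      verdict = { allow: rule.allow, length };
    }
  }
  return verdict.allow;
}

// Example: a blanket Disallow on an assets folder also blocks the JS bundle.
const sampleRobots = "User-agent: *\nDisallow: /assets/\n";
console.log(isAllowed(sampleRobots, "Googlebot", "/assets/app.js")); // false
```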

8. Audit Your JS Code

Finally, make sure you audit your JavaScript code to optimize it for search engines.

Use tools like Google Search Console, Chrome DevTools, and Ahrefs, plus an SEO crawler like JetOctopus, to run a successful JS SEO audit.

Google Search Console

This platform can help you optimize your site and monitor your organic performance. Use GSC to monitor Googlebot and WRS activity.

For JS websites, GSC lets you see rendering problems. It reports crawl errors and issues notifications for missing JS elements that have been blocked from crawling.

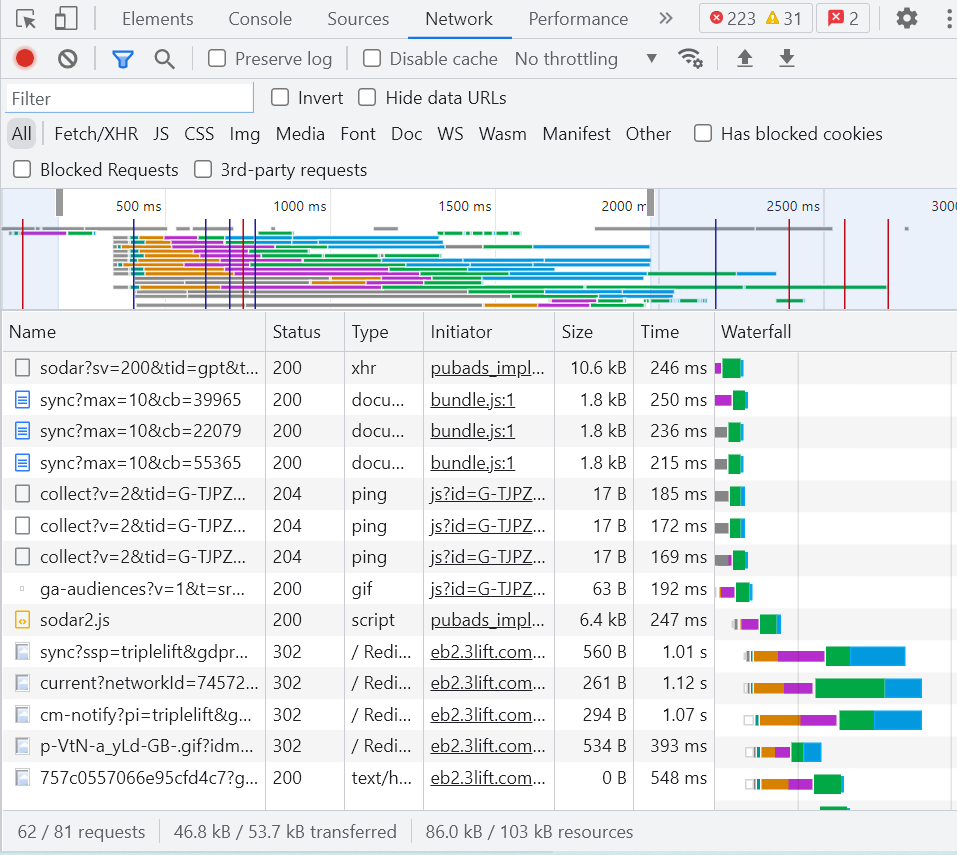

Chrome DevTools

These web developer tools are built into Chrome for ease of use.

The platform lets you inspect the rendered HTML (or DOM) and the network activity of your web pages.

From its Network tab, you can easily identify the JS and CSS resources loaded before the DOM.

Screenshot from Chrome Dev Instruments, September 2022

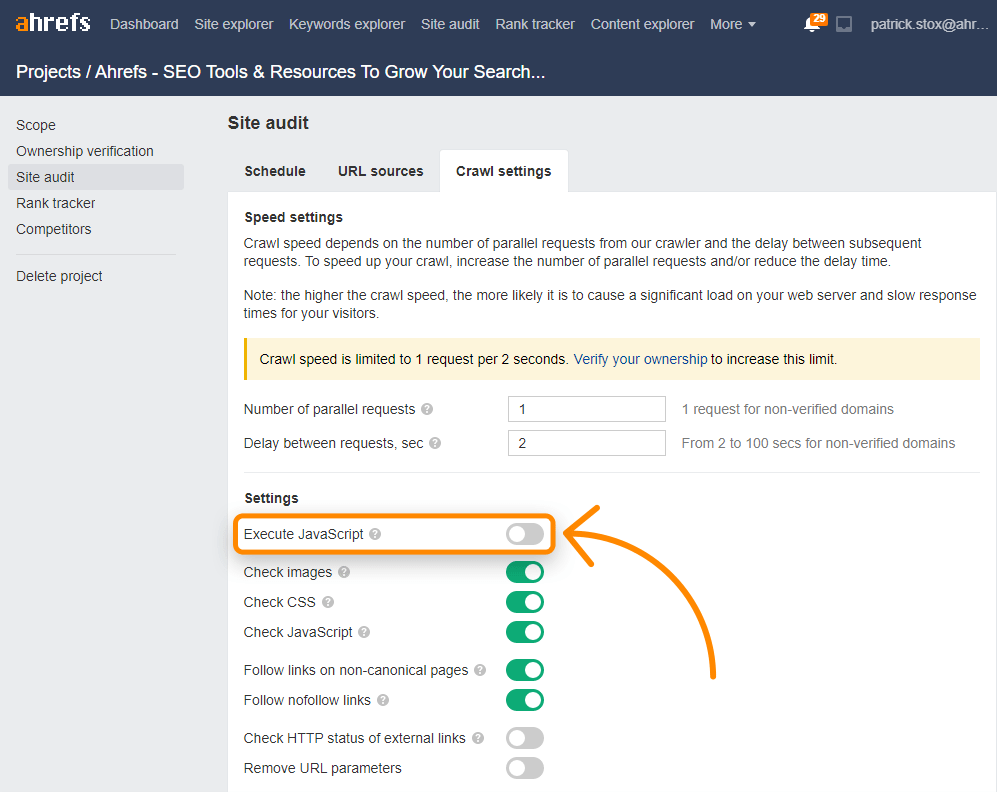

Screenshot from Chrome Dev Instruments, September 2022Ahrefs

Ahrefs lets you successfully handle backlink-building, content material audits, key phrase analysis, and extra. It may well render net pages at scale and lets you verify for JavaScript redirects.

You may as well allow JS in Website Audit crawls to unlock extra insights.

Screenshot from Ahrefs, September 2022

Screenshot from Ahrefs, September 2022The Ahrefs Toolbar helps JavaScript and reveals a comparability of HTML to rendered variations of tags.

JetOctopus SEO Crawler And Log Analyzer

JetOctopus is an SEO crawler and log analyzer that lets you effortlessly audit common ecommerce SEO issues.

Since it can view and render JS as a Google bot, ecommerce marketers can solve JavaScript SEO issues at scale.

Its JS Performance tab offers comprehensive insights into JavaScript execution: First Paint, First Contentful Paint, and page load.

It also shows the time needed to complete all JavaScript requests, along with the JS errors that need immediate attention.

GSC integration with JetOctopus can help you see the complete dynamics of your site performance.

Ryte UX Tool

Ryte is another tool that's capable of crawling and checking your JavaScript pages. It will render the pages and check for errors, helping you troubleshoot issues and check the usability of your dynamic pages.

seoClarity

seoClarity is an enterprise platform with many features. Like the other tools, it offers dynamic rendering, letting you check how the JavaScript on your website performs.

Summing Up

Ecommerce sites are real-world examples of dynamic content injected using JS.

Hence, ecommerce developers rave about how JS lets them create highly interactive ecommerce pages.

On the other hand, many SEO pros dread JS because they've experienced declining organic traffic after their site started relying on client-side rendering.

Though both are right, the fact is that JS-reliant websites can also perform well in the SERP.

Follow the tips shared in this guide to get one step closer to leveraging JavaScript in the most effective way possible while upholding your site's ranking in the SERP.


Featured Image: Visual Generation/Shutterstock