Companies today are incorporating artificial intelligence into every corner of their business. The trend is expected to continue until machine-learning models are incorporated into most of the products and services we interact with every day.

As those models become a bigger part of our lives, ensuring their integrity becomes more important. That's the mission of Verta, a startup that spun out of MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL).

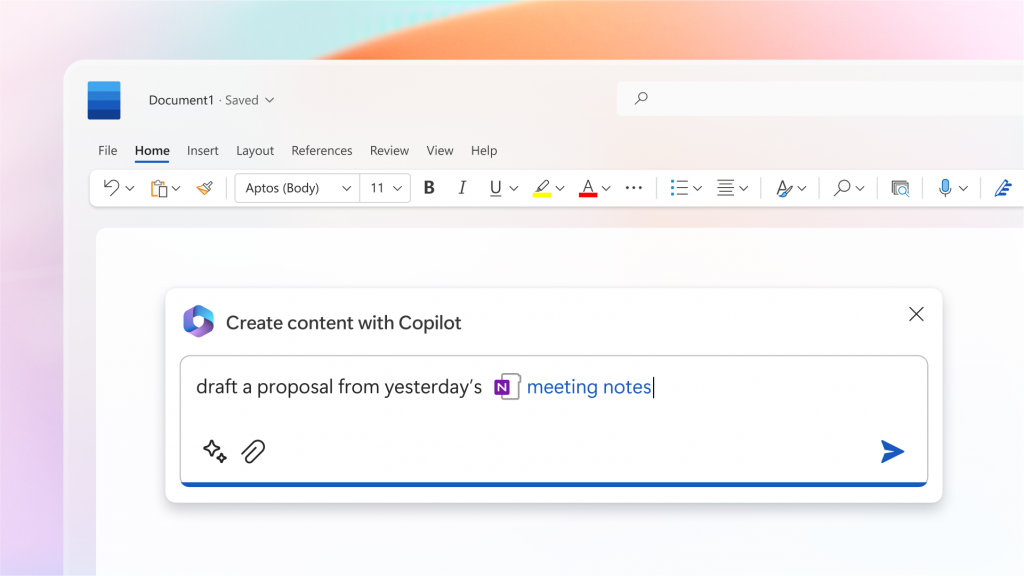

Verta's platform helps companies deploy, monitor, and manage machine-learning models safely and at scale. Data scientists and engineers can use Verta's tools to track different versions of models, audit them for bias, test them before deployment, and monitor their performance in the real world.

"Everything we do is to enable more products to be built with AI, and to do that safely," Verta founder and CEO Manasi Vartak SM '14, PhD '18 says. "We're already seeing with ChatGPT how AI can be used to generate data and artefacts, you name it, that look correct but aren't correct. There needs to be more governance and control in how AI is being used, particularly for enterprises providing AI solutions."

Verta is currently working with large companies in health care, finance, and insurance to help them understand and audit their models' recommendations and predictions. It's also working with a number of high-growth tech companies looking to speed up deployment of new, AI-enabled solutions while ensuring those solutions are used appropriately.

Vartak says the company has been able to decrease the time it takes customers to deploy AI models by orders of magnitude while ensuring those models are explainable and fair, an especially important factor for companies in highly regulated industries.

Health care companies, for example, can use Verta to improve AI-powered patient monitoring and treatment recommendations. Such systems need to be thoroughly vetted for errors and biases before they're used on patients.

"Whether it's bias or fairness or explainability, it goes back to our philosophy on model governance and management," Vartak says. "We think of it like a preflight checklist: Before an airplane takes off, there's a set of checks you need to do before you get your airplane off the ground. It's similar with AI models. You need to make sure you've done your bias checks, you need to make sure there's some level of explainability, you need to make sure your model is reproducible. We help with all of that."

From project to product

Before coming to MIT, Vartak worked as a data scientist for a social media company. In one project, after spending weeks tuning machine-learning models that curated content to show in people's feeds, she learned an ex-employee had already done the same thing. Unfortunately, there was no record of what they did or how it affected the models.

For her PhD at MIT, Vartak decided to build tools to help data scientists develop, test, and iterate on machine-learning models. Working in CSAIL's Database Group, Vartak recruited a team of graduate students and participants in MIT's Undergraduate Research Opportunities Program (UROP).

"Verta would not exist without my work at MIT and MIT's ecosystem," Vartak says. "MIT brings together people on the cutting edge of tech and helps us build the next generation of tools."

The team worked with data scientists in the CSAIL Alliances program to decide what features to build and iterated based on feedback from those early adopters. Vartak says the resulting project, named ModelDB, was the first open-source model management system.

Vartak also took several business classes at the MIT Sloan School of Management during her PhD and worked with classmates on startups that recommended clothing and tracked health, spending countless hours in the Martin Trust Center for MIT Entrepreneurship and participating in the center's delta v summer accelerator.

"What MIT lets you do is take risks and fail in a safe environment," Vartak says. "MIT afforded me those forays into entrepreneurship and showed me how to go about building products and finding first customers, so by the time Verta came around I had done it on a smaller scale."

ModelDB helped data scientists train and track models, but Vartak quickly saw the stakes were higher once models were deployed at scale. At that point, trying to improve (or accidentally breaking) models can have major implications for companies and society. That insight led Vartak to begin building Verta.

"At Verta, we help manage models, help run models, and make sure they're working as expected, which we call model monitoring," Vartak explains. "All of those pieces have their roots back to MIT and my thesis work. Verta really evolved from my PhD project at MIT."

Verta's platform helps companies deploy models more quickly, ensure they continue working as intended over time, and manage the models for compliance and governance. Data scientists can use Verta to track different versions of models and understand how they were built, answering questions like how data were used and which explainability or bias checks were run. They can also vet models by running them through deployment checklists and security scans.
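To make the idea of a versioned model record gated by a "preflight" checklist more concrete, here is a minimal Python sketch. It is a hypothetical illustration, not Verta's actual API: the `ModelVersion` record, the check names in `REQUIRED_CHECKS`, and the `missing_checks` and `ready_to_deploy` helpers are all assumptions made for this example.

```python
from dataclasses import dataclass, field

@dataclass
class ModelVersion:
    """Hypothetical record of one model version and the checks it has passed."""
    name: str
    version: int
    training_data: str  # where the training data came from (illustrative)
    checks_passed: set[str] = field(default_factory=set)

# Hypothetical preflight checklist; these check names are illustrative only.
REQUIRED_CHECKS = {"bias", "explainability", "reproducibility", "security_scan"}

def missing_checks(model: ModelVersion) -> set[str]:
    """Return the required checks this version has not yet passed."""
    return REQUIRED_CHECKS - model.checks_passed

def ready_to_deploy(model: ModelVersion) -> bool:
    """A version may ship only when every required check has passed."""
    return not missing_checks(model)

candidate = ModelVersion("claims-triage", 3, "claims_2023.parquet", {"bias", "explainability"})
print(sorted(missing_checks(candidate)))  # ['reproducibility', 'security_scan']
print(ready_to_deploy(candidate))  # False
```

Keeping each version's record and its passed checks together means the questions raised later in the article (which data was used, which checks were run) can be answered from the record itself rather than from someone's memory.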

"Verta's platform takes the data science model and adds half a dozen layers to it to transform it into something you can use to power, say, an entire recommendation system on your website," Vartak says. "That includes performance optimizations, scaling, and cycle time, which is how quickly you can take a model and turn it into a valuable product, as well as governance."

Supporting the AI wave

Vartak says large companies often use thousands of different models that influence nearly every part of their operations.

"An insurance company, for example, will use models for everything from underwriting to claims, back-office processing, marketing, and sales," Vartak says. "So, the variety of models is really high, there's a large volume of them, and the level of scrutiny and compliance companies need around these models is very high. They need to know things like: Did you use the data you were supposed to use? Who were the people who vetted it? Did you run explainability checks? Did you run bias checks?"

Vartak says companies that don't adopt AI will be left behind. The companies that ride AI to success, meanwhile, will need well-defined processes in place to manage their ever-growing list of models.

"In the next 10 years, every system we interact with is going to have intelligence built in, whether it's a toaster or your email programs, and it's going to make your life much, much easier," Vartak says. "What's going to enable that intelligence are better models and software, like Verta, that help you integrate AI into all of these applications very quickly."