Globalized technology has the potential to create large-scale societal impact, and a grounded research approach rooted in existing international human and civil rights standards is a critical component of ensuring responsible and ethical AI development and deployment. The Impact Lab team, part of Google’s Responsible AI Team, employs a range of interdisciplinary methodologies to ensure critical and rich analysis of the potential implications of technology development. The team’s mission is to examine the socioeconomic and human rights impacts of AI, publish foundational research, and incubate novel mitigations that enable machine learning (ML) practitioners to advance global equity. We study and develop scalable, rigorous, and evidence-based solutions using data analysis, human rights, and participatory frameworks.

What sets the Impact Lab apart is its multidisciplinary approach and the diversity of its team’s experience, spanning both applied and academic research. Our aim is to expand the epistemic lens of Responsible AI to center the voices of historically marginalized communities, and to overcome the practice of ungrounded analysis of impacts by offering a research-based approach to understanding how differing perspectives and experiences should shape the development of technology.

What we do

In response to the accelerating complexity of ML and the increased coupling between large-scale ML and people, our team critically examines traditional assumptions about how technology impacts society to deepen our understanding of this interplay. We collaborate with academic scholars in the social sciences and the philosophy of technology, and publish foundational research focusing on how ML can be helpful and useful. We also offer research support to some of our organization’s most challenging efforts, including the 1,000 Languages Initiative and ongoing work in the testing and evaluation of language and generative models. Our work gives weight to Google’s AI Principles.

To that end, we:

- Conduct foundational and exploratory research toward the goal of creating scalable socio-technical solutions

- Create datasets and research-based frameworks to evaluate ML systems

- Define, identify, and assess negative societal impacts of AI

- Create responsible solutions for the data collection used to build large models

- Develop novel methodologies and approaches that support the responsible deployment of ML models and systems to ensure safety, fairness, robustness, and user accountability

- Translate external community and expert feedback into empirical insights to better understand user needs and impacts

- Seek equitable collaboration and strive for mutually beneficial partnerships

We strive not only to reimagine existing frameworks for assessing the adverse impact of AI in order to answer ambitious research questions, but also to promote the importance of this work.

Current research efforts

Understanding social issues

Our motivation for providing rigorous analytical tools and approaches is to ensure that socio-technical impact and fairness are well understood in relation to cultural and historical nuances. This is important because it builds both the incentive and the ability to better understand the communities who experience the greatest burden, and it demonstrates the value of rigorous and focused analysis. Our goals are to proactively partner with external thought leaders in this problem space, reframe our existing mental models when assessing potential harms and impacts, and avoid relying on unfounded assumptions and stereotypes in ML technologies. We collaborate with researchers at Stanford, University of California Berkeley, University of Edinburgh, Mozilla Foundation, University of Michigan, Naval Postgraduate School, Data & Society, EPFL, Australian National University, and McGill University.

We examine systemic social issues and generate useful artifacts for responsible AI development.

Centering underrepresented voices

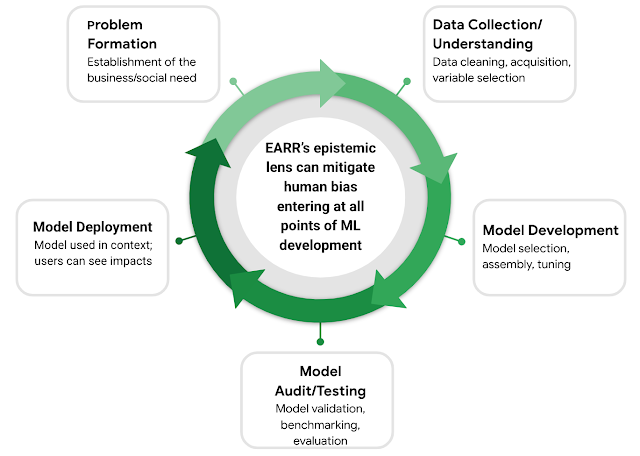

We also developed the Equitable AI Research Roundtable (EARR), a novel community-based research coalition created to establish ongoing partnerships with external nonprofit and research organization leaders who are equity experts in the fields of education, law, social justice, AI ethics, and economic development. These partnerships offer the opportunity to engage with multidisciplinary experts on complex research questions related to how we center and understand equity using lessons from other domains. Our partners include PolicyLink; The Education Trust – West; Notley; Partnership on AI; Othering and Belonging Institute at UC Berkeley; The Michelson Institute for Intellectual Property, HBCU IP Futures Collaborative at Emory University; Center for Information Technology Research in the Interest of Society (CITRIS) at the Banatao Institute; and the Charles A. Dana Center at the University of Texas, Austin. The goals of the EARR program are to: (1) center knowledge about the experiences of historically marginalized or underrepresented groups, (2) qualitatively understand and identify potential approaches for studying social harms and their analogies within the context of technology, and (3) expand the lens of expertise and relevant knowledge as it relates to our work on responsible and safe approaches to AI development.

Through semi-structured workshops and discussions, EARR has provided critical perspectives and feedback on how to conceptualize equity and vulnerability as they relate to AI technology. We have partnered with EARR contributors on a range of topics, from generative AI and algorithmic decision making to transparency and explainability, with outputs ranging from adversarial queries to frameworks and case studies. Translating research insights across disciplines into technical solutions is not always easy, but this research has been a rewarding partnership. We present our initial evaluation of this engagement in this paper.

EARR: Components of the ML development life cycle in which multidisciplinary knowledge is essential for mitigating human biases.

Grounding in civil and human rights values

In partnership with our Civil and Human Rights Program, our research and analysis process is grounded in internationally recognized human rights frameworks and standards, including the Universal Declaration of Human Rights and the UN Guiding Principles on Business and Human Rights. Using civil and human rights frameworks as a starting point allows for a context-specific approach to research that takes into account how a technology will be deployed and its community impacts. Most importantly, a rights-based approach to research enables us to prioritize conceptual and applied methods that emphasize understanding the most vulnerable users and the most salient harms, in order to better inform day-to-day decision making, product design, and long-term strategies.

Ongoing work

Social context to aid in dataset development and evaluation

We seek to employ an approach to dataset curation, model development, and evaluation that is rooted in equity and that avoids expeditious but potentially risky shortcuts, such as using incomplete data or not considering the historical and socio-cultural factors related to a dataset. Responsible data collection and analysis require an additional level of careful consideration of the context in which the data are created. For example, one may see differences in outcomes across demographic variables that will be used to build models, and should question the structural and system-level factors at play, as some variables may ultimately be a reflection of historical, social, and political factors. By using proxy data, such as race or ethnicity, gender, or zip code, we systematically merge together the lived experiences of an entire group of diverse people and use them to train models that can recreate and maintain harmful and inaccurate character profiles of entire populations. Critical data analysis also requires a careful understanding that correlations or relationships between variables do not imply causation; the association we observe is often driven by one or more additional variables.
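To make the last point concrete, here is a minimal sketch using entirely synthetic, hypothetical counts (not data from any of our studies). It assumes a made-up proxy variable `group`, a made-up structural factor `access` (for example, historical access to some resource), and a binary `outcome`; the helpers `make_records` and `outcome_rate` are likewise illustrative. By construction the outcome depends only on `access`, yet comparing groups directly shows a large gap, which disappears once the comparison is stratified by `access`.

```python
from collections import defaultdict
from collections.abc import Callable, Hashable
from typing import NamedTuple


class Record(NamedTuple):
    group: str    # hypothetical proxy variable, e.g. a coarse region code
    access: int   # hypothetical structural factor: 1 = historical access to a resource
    outcome: int  # observed binary outcome (1 = positive)


def make_records() -> list[Record]:
    """Build a small synthetic dataset; the counts are illustrative only.

    By construction the outcome rate depends only on `access` (0.8 with access,
    0.4 without). The groups differ only in how many members had access:
    80% of group A versus 20% of group B.
    """
    counts = {
        ("A", 1, 1): 64, ("A", 1, 0): 16,
        ("A", 0, 1): 8,  ("A", 0, 0): 12,
        ("B", 1, 1): 16, ("B", 1, 0): 4,
        ("B", 0, 1): 32, ("B", 0, 0): 48,
    }
    return [Record(g, a, o) for (g, a, o), n in counts.items() for _ in range(n)]


def outcome_rate(records: list[Record], key: Callable[[Record], Hashable]) -> dict:
    """Return the share of positive outcomes for each distinct value of key(record)."""
    totals: dict = defaultdict(int)
    positives: dict = defaultdict(int)
    for r in records:
        k = key(r)
        totals[k] += 1
        positives[k] += r.outcome
    return {k: positives[k] / totals[k] for k in totals}


records = make_records()

# Marginal comparison: the proxy variable appears strongly associated with the outcome.
by_group = outcome_rate(records, key=lambda r: r.group)

# Stratified comparison: within each access level, the two groups are identical.
by_group_and_access = outcome_rate(records, key=lambda r: (r.group, r.access))

print(by_group)             # {'A': 0.72, 'B': 0.48}
print(by_group_and_access)  # {('A', 1): 0.8, ('A', 0): 0.4, ('B', 1): 0.8, ('B', 0): 0.4}
```

A stratified comparison like this is only a first check, not a causal analysis: it helps only when the relevant structural factor has actually been measured, which is one reason the historical and social context of a dataset matters.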

Relationship between social context and mannequin outcomes

Building on this expanded and nuanced social understanding of data and dataset construction, we also approach the problem of anticipating or ameliorating the impact of ML models once they have been deployed for use in the real world. There are myriad ways in which the use of ML in various contexts, from education to health care, has exacerbated existing inequity because the developers and decision-making users of these systems lacked the relevant social understanding and historical context, and did not involve relevant stakeholders. This is a research challenge for the field of ML in general and one that is central to our team.

Globally responsible AI centering community experts

Our team also recognizes the importance of understanding socio-technical context globally. In line with Google’s mission to “organize the world’s information and make it universally accessible and useful”, our team is engaging in research partnerships around the world. For example, we are collaborating with the Natural Language Processing team and the Human Centered team in the Makerere Artificial Intelligence Lab in Uganda to research cultural and language nuances as they relate to language model development.

Conclusion

We continue to address the impacts of ML models deployed in the real world by conducting further socio-technical research and engaging external experts who are also part of communities that are historically and globally disenfranchised. The Impact Lab is excited to offer an approach that contributes to the development of solutions for applied problems through the use of social science, evaluation, and human rights epistemologies.

Acknowledgements

We would like to thank each member of the Impact Lab team (Jamila Smith-Loud, Andrew Smart, Jalon Hall, Darlene Neal, Amber Ebinama, and Qazi Mamunur Rashid) for all the hard work they do to ensure that ML is more responsible to its users and to society across communities and around the world.