Large neural networks are at the core of many recent advances in AI, but training them is a difficult engineering and research challenge that requires orchestrating a cluster of GPUs to perform a single synchronized calculation. As cluster and model sizes have grown, machine learning practitioners have developed an increasing variety of techniques to parallelize model training over many GPUs. At first glance, understanding these parallelism techniques may seem daunting, but with only a few assumptions about the structure of the computation they become much clearer: at that point, you're just shuttling opaque bits from A to B, much as a network switch shuttles packets.

Figure panels: Data Parallelism, Pipeline Parallelism, Tensor Parallelism, Expert Parallelism

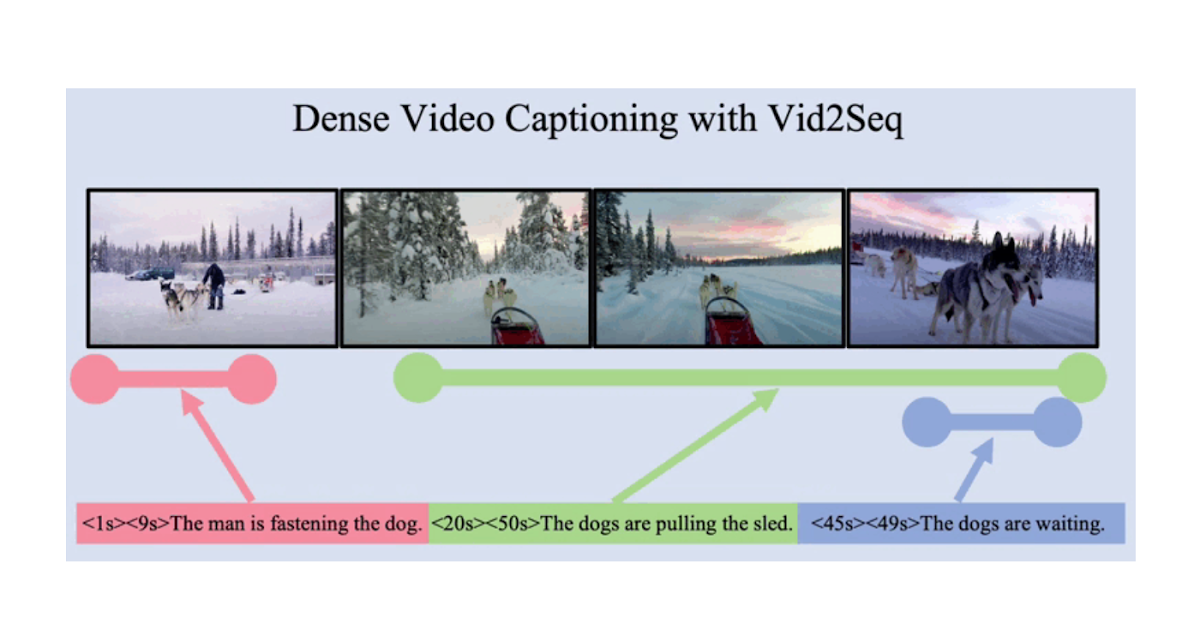

An illustration of various parallelism strategies on a three-layer model. Each color refers to one layer and dashed lines separate different GPUs.

No Parallelism

Training a neural network is an iterative process. In every iteration, we do a pass forward through a model's layers to compute an output for each training example in a batch of data. Then another pass proceeds backward through the layers, propagating how much each parameter affects the final output by computing a gradient with respect to each parameter. The average gradient for the batch, the parameters, and some per-parameter optimization state are passed to an optimization algorithm, such as Adam, which computes the next iteration's parameters (which should have slightly better performance on your data) and new per-parameter optimization state. As the training iterates over batches of data, the model evolves to produce increasingly accurate outputs.
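To make the loop above concrete, here is a minimal sketch of it in plain Python. The toy model (`y = w * x + b`), the squared-error loss, the data, and every function name are assumptions chosen for illustration; only the overall shape (forward pass, backward pass, Adam update with per-parameter state) comes from the description above.

```python
import math

def batch_gradient(params, batch):
    """Forward and backward pass for y_hat = w * x + b, averaged over the batch."""
    w, b = params
    grad_w = grad_b = 0.0
    for x, y in batch:
        error = (w * x + b) - y   # forward pass: prediction minus target
        grad_w += 2 * error * x   # backward pass: d(error^2)/dw
        grad_b += 2 * error       # backward pass: d(error^2)/db
    return [grad_w / len(batch), grad_b / len(batch)]

def mean_loss(params, batch):
    w, b = params
    return sum(((w * x + b) - y) ** 2 for x, y in batch) / len(batch)

def adam_step(params, grads, state, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update: returns the next parameters and the next optimizer state."""
    step = state["step"] + 1
    new_params, new_m, new_v = [], [], []
    for p, g, m, v in zip(params, grads, state["m"], state["v"]):
        m = beta1 * m + (1 - beta1) * g        # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g    # running mean of squared gradients
        m_hat = m / (1 - beta1 ** step)        # bias correction
        v_hat = v / (1 - beta2 ** step)
        new_params.append(p - lr * m_hat / (math.sqrt(v_hat) + eps))
        new_m.append(m)
        new_v.append(v)
    return new_params, {"step": step, "m": new_m, "v": new_v}

# Toy data drawn from y = 3x + 1, split into two batches (illustrative only).
batches = [
    [(-1.0, -2.0), (0.0, 1.0), (1.0, 4.0)],
    [(-0.5, -0.5), (0.5, 2.5), (2.0, 7.0)],
]
all_data = batches[0] + batches[1]

params = [0.0, 0.0]
state = {"step": 0, "m": [0.0, 0.0], "v": [0.0, 0.0]}
initial_loss = mean_loss(params, all_data)

for iteration in range(200):
    batch = batches[iteration % len(batches)]
    grads = batch_gradient(params, batch)
    params, state = adam_step(params, grads, state)

final_loss = mean_loss(params, all_data)
```

Note that the optimizer state holds two extra numbers per parameter, which is one reason optimizer memory matters later in this post.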

Various parallelism techniques slice this training process across different dimensions, including:

- Data parallelism: run different subsets of the batch on different GPUs;

- Pipeline parallelism: run different layers of the model on different GPUs;

- Tensor parallelism: break up the math for a single operation, such as a matrix multiplication, so that it is split across GPUs;

- Mixture-of-Experts: process each example by only a fraction of each layer.

(In this post, we'll assume that you are using GPUs to train your neural networks, but the same ideas apply to those using any other neural network accelerator.)

Data Parallelism

Data Parallel training means copying the same parameters to multiple GPUs (often called "workers") and assigning different examples to each to be processed simultaneously. Data parallelism alone still requires that your model fits into a single GPU's memory, but it lets you utilize the compute of many GPUs at the cost of storing many duplicate copies of your parameters. That being said, there are strategies to increase the effective RAM available to your GPU, such as temporarily offloading parameters to CPU memory between usages.

As each data parallel worker updates its copy of the parameters, the workers need to coordinate to ensure that each one continues to have similar parameters. The simplest approach is to introduce blocking communication between workers: (1) independently compute the gradient on each worker; (2) average the gradients across workers; and (3) independently compute the same new parameters on each worker. Step (2) is a blocking average which requires transferring quite a lot of data (proportional to the number of workers times the size of your parameters), which can hurt your training throughput. There are various asynchronous synchronization schemes to remove this overhead, but they hurt learning efficiency; in practice, people generally stick with the synchronous approach.
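The three synchronous steps can be sketched in a few lines of plain Python. This is a single-process simulation, not a real multi-GPU implementation: the workers are list entries, the blocking average stands in for an all-reduce, and the one-parameter model and all names are assumptions made for illustration. With equal-sized shards, the averaged gradient matches the gradient of the full batch.

```python
def local_gradient(w, shard):
    """Average gradient of squared error for y_hat = w * x on one worker's examples."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)

def data_parallel_step(worker_params, shards, lr=0.01):
    # (1) Each worker independently computes the gradient on its own shard.
    grads = [local_gradient(w, shard) for w, shard in zip(worker_params, shards)]
    # (2) Blocking average across workers (a stand-in for an all-reduce).
    avg_grad = sum(grads) / len(grads)
    # (3) Each worker independently applies the same update to its own copy.
    return [w - lr * avg_grad for w in worker_params]

batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
shards = [batch[:2], batch[2:]]   # equal-sized shards, one per worker
replicas = [0.0, 0.0]             # every worker starts from the same parameters

replicas = data_parallel_step(replicas, shards)

# Reference: one worker processing the whole batch.
single_worker = 0.0 - 0.01 * local_gradient(0.0, batch)
```

Because every worker applies the same averaged gradient to the same starting parameters, the replicas stay identical without ever exchanging the parameters themselves.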

Pipeline Parallelism

With Pipeline Parallel training, we partition sequential chunks of the model across GPUs. Each GPU holds only a fraction of the parameters, and thus the same model consumes proportionally less memory per GPU.

It's straightforward to split a large model into chunks of consecutive layers. However, there's a sequential dependency between the inputs and outputs of layers, so a naive implementation can lead to a large amount of idle time while a worker waits for outputs from the previous machine to be used as its inputs. These chunks of waiting time are known as "bubbles," and they waste computation that could be done by the idling machines.

Figure legend: Forward, Backward, Gradient update, Idle

Illustration of a naive pipeline parallelism setup where the model is vertically split into 4 partitions by layer. Worker 1 hosts the model parameters of the first layer of the network (closest to the input), while worker 4 hosts layer 4 (closest to the output). "F", "B", and "U" represent forward, backward, and update operations, respectively. The subscripts indicate on which worker an operation runs. Data is processed by one worker at a time due to the sequential dependency, leading to large "bubbles" of idle time.

We can reuse the ideas from data parallelism to reduce the cost of the bubble by having each worker process only a subset of data elements at one time, allowing us to cleverly overlap new computation with wait time. The core idea is to split one batch into multiple microbatches; each microbatch should be proportionally faster to process, and each worker begins working on the next microbatch as soon as it's available, thus expediting the pipeline execution. With enough microbatches, the workers can be utilized most of the time, with a minimal bubble at the beginning and end of the step. Gradients are averaged across microbatches, and updates to the parameters happen only once all microbatches have been completed.

The number of workers that the model is split over is commonly known as the pipeline depth.
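A rough way to see how microbatches shrink the bubble is to simulate the forward phase under simplifying assumptions: every worker takes one time unit per microbatch, and communication is free. The function name and this cost model are illustrative assumptions rather than measurements of any real system; under the same assumptions the backward phase behaves symmetrically.

```python
def forward_phase_utilization(pipeline_depth, num_microbatches):
    """Fraction of the forward phase each worker spends computing.

    finish[w][mb] is the time at which worker w finishes microbatch mb,
    assuming one time unit of work per (worker, microbatch) pair.
    """
    finish = [[0] * num_microbatches for _ in range(pipeline_depth)]
    for w in range(pipeline_depth):
        for mb in range(num_microbatches):
            # Activations must have arrived from the previous worker...
            input_ready = finish[w - 1][mb] if w > 0 else 0
            # ...and this worker must have finished its previous microbatch.
            worker_free = finish[w][mb - 1] if mb > 0 else 0
            finish[w][mb] = max(input_ready, worker_free) + 1
    total_time = finish[-1][-1]
    return num_microbatches / total_time

naive = forward_phase_utilization(pipeline_depth=4, num_microbatches=1)
some = forward_phase_utilization(pipeline_depth=4, num_microbatches=4)
many = forward_phase_utilization(pipeline_depth=4, num_microbatches=32)
```

In this model the phase lasts `num_microbatches + pipeline_depth - 1` time units, so a depth-4 pipeline is busy a quarter of the time with a single batch and more than 90% of the time with 32 microbatches.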

During the forward pass, each worker only needs to send the output (called activations) of its chunk of layers to the next worker; during the backward pass, it only sends the gradients on those activations to the previous worker. There's a big design space of how to schedule these passes and how to aggregate the gradients across microbatches. GPipe has each worker process forward and backward passes consecutively and then aggregates gradients from multiple microbatches synchronously at the end. PipeDream instead schedules each worker to alternately process forward and backward passes.

Figure legend: Forward, Backward, Update, Idle. Panels: GPipe, PipeDream

Comparison of GPipe and PipeDream pipelining schemes, using 4 microbatches per batch. Microbatches 1-8 correspond to two consecutive data batches. In the image, "(number)" indicates on which microbatch an operation is performed and the subscript marks the worker ID. Note that PipeDream gets more efficiency by performing some computations with stale parameters.

Tensor Parallelism

Pipeline parallelism splits a model "vertically" by layer. It's also possible to "horizontally" split certain operations within a layer, which is usually called Tensor Parallel training. For many modern models (such as the Transformer), the computation bottleneck is multiplying an activation batch matrix with a large weight matrix. Matrix multiplication can be thought of as dot products between pairs of rows and columns; it's possible to compute independent dot products on different GPUs, or to compute parts of each dot product on different GPUs and sum up the results. With either strategy, we can slice the weight matrix into even-sized "shards", host each shard on a different GPU, and use that shard to compute the relevant part of the overall matrix product before later communicating to combine the results.
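Both slicing strategies can be checked on a small example. The sketch below runs in one process, with each "GPU" represented by a loop iteration; the function names and the tiny integer matrices are assumptions for illustration. Splitting the weight matrix by columns gives independent dot products whose outputs are concatenated, while splitting it by rows gives partial dot products that are summed.

```python
def matmul(a, b):
    """Plain matrix product of two lists-of-rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def column_parallel_matmul(x, w, num_gpus):
    """Each GPU owns a slice of W's columns and computes independent dot products."""
    width = len(w[0]) // num_gpus
    partial_outputs = []
    for gpu in range(num_gpus):
        w_shard = [row[gpu * width:(gpu + 1) * width] for row in w]
        partial_outputs.append(matmul(x, w_shard))
    # Combine step: concatenate the output columns from every GPU.
    return [sum((part[i] for part in partial_outputs), []) for i in range(len(x))]

def row_parallel_matmul(x, w, num_gpus):
    """Each GPU owns a slice of W's rows and computes part of every dot product."""
    depth = len(w) // num_gpus
    result = [[0] * len(w[0]) for _ in range(len(x))]
    for gpu in range(num_gpus):
        x_shard = [row[gpu * depth:(gpu + 1) * depth] for row in x]
        w_shard = w[gpu * depth:(gpu + 1) * depth]
        partial = matmul(x_shard, w_shard)
        # Combine step: sum the partial products from every GPU.
        result = [[r + p for r, p in zip(r_row, p_row)]
                  for r_row, p_row in zip(result, partial)]
    return result

activations = [[1, 2, 3, 4],
               [5, 6, 7, 8]]          # batch of 2 examples, 4 features
weights = [[1, 0], [0, 1], [2, 1], [1, 3]]  # 4 inputs, 2 outputs

reference = matmul(activations, weights)
by_columns = column_parallel_matmul(activations, weights, num_gpus=2)
by_rows = row_parallel_matmul(activations, weights, num_gpus=2)
```

The two variants differ in what has to be communicated: the column split needs a gather of output slices, whereas the row split needs a sum across GPUs.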

One example is Megatron-LM, which parallelizes matrix multiplications within the Transformer's self-attention and MLP layers. PTD-P uses tensor, data, and pipeline parallelism; its pipeline schedule assigns multiple non-consecutive layers to each device, reducing bubble overhead at the cost of more network communication.

Sometimes the input to the network can be parallelized across a dimension with a high degree of parallel computation relative to cross-communication. Sequence parallelism is one such idea, where an input sequence is split across time into multiple sub-examples, proportionally decreasing peak memory consumption by allowing the computation to proceed with more granularly sized examples.

Mixture-of-Experts (MoE)

With the Mixture-of-Experts (MoE) approach, only a fraction of the network is used to compute the output for any one input. One example approach is to have many sets of weights and let the network choose which set to use via a gating mechanism at inference time. This enables many more parameters without increased computation cost. Each set of weights is referred to as an "expert," in the hope that the network will learn to assign specialized computation and skills to each expert. Different experts can be hosted on different GPUs, providing a clear way to scale up the number of GPUs used for a model.
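The gating idea can be sketched as follows. Everything concrete here is an assumption for illustration: the gate is a linear score per expert, the top `k` experts are kept, their scores are normalized with a softmax, and the toy experts simply scale their input. Real MoE layers differ in many details; the point is only that unselected experts never run.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def moe_forward(x, gate_weights, experts, k=2):
    """Route input x to the k highest-scoring experts and mix their outputs."""
    scores = [dot(g, x) for g in gate_weights]
    top = sorted(range(len(experts)), key=lambda e: scores[e], reverse=True)[:k]
    # Softmax over the selected experts only (subtract the max for stability).
    peak = max(scores[e] for e in top)
    exp_scores = [math.exp(scores[e] - peak) for e in top]
    gates = [s / sum(exp_scores) for s in exp_scores]
    output = [0.0] * len(x)
    for gate, e in zip(gates, top):
        expert_out = experts[e](x)   # only the chosen experts are evaluated
        output = [o + gate * y for o, y in zip(output, expert_out)]
    return output, top, gates

calls = []  # records which experts actually ran

def make_expert(index, scale):
    """Hypothetical toy expert: scales its input and logs that it was called."""
    def expert(x):
        calls.append(index)
        return [scale * value for value in x]
    return expert

experts = [make_expert(i, scale) for i, scale in enumerate([1.0, 2.0, 3.0, 4.0])]
gate_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.5, 0.5]]

output, chosen, gates = moe_forward([1.0, 2.0], gate_weights, experts, k=2)
```

With four experts and `k=2`, the layer holds four sets of weights but pays the compute cost of only two for this input.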

Illustration of a mixture-of-experts (MoE) layer. Only 2 out of the n experts are selected by the gating network. (Image adapted from: Shazeer et al., 2017)

GShard scales an MoE Transformer up to 600 billion parameters with a scheme where only the MoE layers are split across multiple TPU devices and other layers are fully duplicated. Switch Transformer scales model size to trillions of parameters with even higher sparsity by routing one input to a single expert.

Other Memory Saving Designs

There are many other computational strategies to make training increasingly large neural networks more tractable. For example:

- To compute the gradient, you need to have saved the original activations, which can consume a lot of device RAM. Checkpointing (also known as activation recomputation) stores any subset of activations and recomputes the intermediate ones just-in-time during the backward pass. This saves a lot of memory at the computational cost of at most one additional full forward pass. One can also continually trade off between compute and memory cost by selective activation recomputation, which is checkpointing subsets of the activations that are relatively more expensive to store but cheaper to compute.

- Mixed Precision Training is to train models using lower-precision numbers (most commonly FP16). Modern accelerators can reach much higher FLOP counts with lower-precision numbers, and you also save on device RAM. With proper care, the resulting model can lose almost no accuracy.

- Offloading is to temporarily offload unused data to the CPU or amongst different devices and later read it back when needed. Naive implementations will slow down training a lot, but sophisticated implementations will pre-fetch data so that the device never needs to wait on it. One implementation of this idea is ZeRO, which splits the parameters, gradients, and optimizer states across all available hardware and materializes them as needed.

- Memory Efficient Optimizers, such as Adafactor, have been proposed to reduce the memory footprint of the running state maintained by the optimizer.

- Compression can also be used for storing intermediate results in the network. For example, Gist compresses activations that are saved for the backward pass; DALL·E compresses the gradients before synchronizing them.
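As a sketch of the checkpointing item in the list above, the code below backpropagates through a chain of scalar layers twice: once storing every layer input, and once storing only the input to each segment and recomputing the rest during the backward pass. The `tanh` layers, the choice to differentiate with respect to the network input rather than the weights, and all names are simplifying assumptions for illustration.

```python
import math

def layer(w, x):
    return math.tanh(w * x)

def layer_grad_input(w, x):
    """Derivative of layer(w, x) with respect to its input x."""
    return w * (1 - math.tanh(w * x) ** 2)

def backward_full(weights, x0):
    """Store the input of every layer, then backpropagate d(output)/d(x0)."""
    inputs = [x0]
    for w in weights[:-1]:
        inputs.append(layer(w, inputs[-1]))
    grad = 1.0
    for w, x in zip(reversed(weights), reversed(inputs)):
        grad *= layer_grad_input(w, x)
    return grad, len(inputs)

def backward_checkpointed(weights, x0, segment):
    """Store only the input to each segment; recompute the others when needed."""
    checkpoints = {}
    x = x0
    for i, w in enumerate(weights):
        if i % segment == 0:
            checkpoints[i] = x          # keep this activation
        x = layer(w, x)                 # everything else is discarded
    grad = 1.0
    recomputed = 0
    for start in sorted(checkpoints, reverse=True):
        seg_weights = weights[start:start + segment]
        seg_inputs = [checkpoints[start]]
        for w in seg_weights[:-1]:      # recompute this segment's layer inputs
            seg_inputs.append(layer(w, seg_inputs[-1]))
            recomputed += 1
        for w, x in zip(reversed(seg_weights), reversed(seg_inputs)):
            grad *= layer_grad_input(w, x)
    return grad, len(checkpoints), recomputed

weights = [0.9, -1.1, 0.7, 1.3, -0.8, 1.2, 0.6, -1.0]
full_grad, full_stored = backward_full(weights, x0=0.5)
ckpt_grad, ckpt_stored, ckpt_recomputed = backward_checkpointed(weights, x0=0.5, segment=4)
```

In this toy setup the checkpointed version stores 2 activations instead of 8 and recomputes 6 layer outputs, which stays below one extra forward pass through all 8 layers.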

At OpenAI, we are training and improving large models from the underlying infrastructure all the way to deploying them for real-world problems. If you'd like to put the ideas from this post into practice (especially relevant for our Scaling and Applied Research teams), we're hiring!