SEO, in its most basic sense, relies upon one thing above all others: search engine spiders crawling and indexing your site.

But nearly every website is going to have pages that you don’t want to include in this exploration.

For example, do you really want your privacy policy or internal search pages showing up in Google results?

In a best-case scenario, these pages are doing nothing to actively drive traffic to your site, and in a worst-case scenario, they could be diverting traffic from more important pages.

Luckily, Google allows webmasters to tell search engine bots what pages and content to crawl and what to ignore. There are several ways to do this, the most common being a robots.txt file or the meta robots tag.

We have an excellent and detailed explanation of the ins and outs of robots.txt, which you should definitely read.

But in high-level terms, it’s a plain text file that lives in your website’s root and follows the Robots Exclusion Protocol (REP).

Robots.txt provides crawlers with instructions about the site as a whole, while meta robots tags include directions for specific pages.

Some meta robots tags you might employ include index, which tells search engines to add the page to their index; noindex, which tells them not to add a page to the index or include it in search results; follow, which instructs a search engine to follow the links on a page; nofollow, which tells it not to follow links; and a whole host of others.

Both robots.txt and meta robots tags are useful tools to keep in your toolbox, but there’s also another way to instruct search engine bots to noindex or nofollow: the X-Robots-Tag.

What Is The X-Robots-Tag?

The X-Robots-Tag is another way for you to control how your webpages are crawled and indexed by spiders. As part of the HTTP header response to a URL, it controls indexing for an entire page, as well as the specific elements on that page.

And while using meta robots tags is fairly straightforward, the X-Robots-Tag is a bit more complicated.

But this, of course, raises the question:

When Should You Use The X-Robots-Tag?

According to Google, “Any directive that can be used in a robots meta tag can also be specified as an X-Robots-Tag.”

While you can set the same robots directives with both the meta robots tag (in a page’s HTML) and the X-Robots-Tag (in the headers of an HTTP response), there are certain situations where you would want to use the X-Robots-Tag, the two most common being when:

- You want to control how your non-HTML files are being crawled and indexed.

- You want to serve directives site-wide instead of on a page level.

For example, if you want to block a specific image or video from being indexed, the HTTP response method makes this easy.

The X-Robots-Tag header is also useful because it allows you to combine multiple tags within an HTTP response, or to specify several directives in a single comma-separated list.

Maybe you don’t want a certain page to be cached and want it to be unavailable after a certain date. You can use a combination of “noarchive” and “unavailable_after” tags to instruct search engine bots to follow these instructions.
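As a rough sketch of how those two directives combine into one comma-separated header value, the Python snippet below builds the string a server would send. The helper name `build_x_robots_tag` and the example date are assumptions made for illustration; the ISO 8601 date format is one of the formats Google documents as accepted for `unavailable_after`.

```python
from datetime import date


def build_x_robots_tag(directives, unavailable_after=None):
    """Join directives into a single comma-separated X-Robots-Tag value.

    `directives` is a list such as ["noarchive"]; `unavailable_after`
    is an optional date rendered in ISO 8601 form (YYYY-MM-DD).
    """
    parts = list(directives)
    if unavailable_after is not None:
        parts.append("unavailable_after: " + unavailable_after.isoformat())
    return ", ".join(parts)


# Hypothetical example: no cached copy, and drop the page after 31 Jan 2030.
header_value = build_x_robots_tag(["noarchive"], date(2030, 1, 31))
print("X-Robots-Tag: " + header_value)
# X-Robots-Tag: noarchive, unavailable_after: 2030-01-31
```

The resulting string is what you would place in the server configuration or application code that sets the response header.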

Essentially, the power of the X-Robots-Tag is that it is much more flexible than the meta robots tag.

The advantage of using an X-Robots-Tag with HTTP responses is that it allows you to use regular expressions to execute crawl directives on non-HTML files, as well as apply parameters on a larger, global level.

To help you understand the difference between these directives, it’s helpful to categorize them by type. That is, are they crawler directives or indexer directives?

Here’s a handy cheat sheet to explain:

| Crawler Directives | Indexer Directives |
| --- | --- |
| Robots.txt: uses the user agent, allow, disallow, and sitemap directives to specify where on-site search engine bots are allowed to crawl and not allowed to crawl. | Meta Robots tag: allows you to specify and prevent search engines from showing particular pages on a site in search results. |
| | Nofollow: allows you to specify links that should not pass on authority or PageRank. |
| | X-Robots-Tag: allows you to control how specified file types are indexed. |

Where Do You Put The X-Robots-Tag?

Let’s say you want to block specific file types. An ideal approach would be to add the X-Robots-Tag to an Apache configuration or a .htaccess file.

The X-Robots-Tag can be added to a site’s HTTP responses in an Apache server configuration via the .htaccess file.

Real-World Examples And Uses Of The X-Robots-Tag

So that sounds great in theory, but what does it look like in the real world? Let’s take a look.

Let’s say we wanted search engines not to index .pdf file types. This configuration on Apache servers would look something like the below:

<Files ~ "\.pdf$">
  Header set X-Robots-Tag "noindex, nofollow"
</Files>

In Nginx, it would look like the below:

location ~* \.pdf$ {
  add_header X-Robots-Tag "noindex, nofollow";
}

Now, let’s look at a different scenario. Let’s say we want to use the X-Robots-Tag to block image files, such as .jpg, .gif, .png, etc., from being indexed. You could do this with an X-Robots-Tag that would look like the below:

<Files ~ "\.(png|jpe?g|gif)$">
  Header set X-Robots-Tag "noindex"
</Files>
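Before deploying file-type rules like these, it can help to sanity-check the regular expressions against a handful of paths. The Python sketch below mirrors the PDF and image patterns; the `RULES` list, the function name `x_robots_tag_for`, and the sample paths are all hypothetical, and the case-insensitive matching is an assumption of this sketch (similar to Nginx’s `~*`), not a statement about how your server is configured.

```python
import re

# (pattern, header value) pairs mirroring the PDF and image rules.
# IGNORECASE is an assumption of this sketch.
RULES = [
    (re.compile(r"\.pdf$", re.IGNORECASE), "noindex, nofollow"),
    (re.compile(r"\.(png|jpe?g|gif)$", re.IGNORECASE), "noindex"),
]


def x_robots_tag_for(path):
    """Return the header value the first matching rule would set, or None."""
    for pattern, value in RULES:
        if pattern.search(path):
            return value
    return None


# Hypothetical paths used only to exercise the patterns.
for sample in ["/files/report.pdf", "/img/photo.jpeg", "/pdf-guide.html"]:
    print(sample, "->", x_robots_tag_for(sample))
```

Note the escaped dot: in a regular expression, an unescaped `.` matches any character, so a pattern such as `.pdf$` would also match a path ending in `/whitepaperpdf`.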

Please note that understanding how these directives work and the impact they have on one another is crucial.

For example, what happens if both the X-Robots-Tag and a meta robots tag are located when crawler bots discover a URL?

If that URL is blocked from robots.txt, then certain indexing and serving directives cannot be discovered and will not be followed.

If directives are to be followed, then the URLs containing those cannot be disallowed from crawling.
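To make that interaction concrete, here is a minimal sketch using Python’s standard `urllib.robotparser` module. It only answers one question: would a crawler that obeys robots.txt be allowed to request the URL at all? If not, it never sees the response headers, so an X-Robots-Tag on that URL goes unread. The robots.txt content, the example.com URLs, and the function name are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def directive_is_discoverable(robots_txt, url, user_agent="*"):
    """Return True if robots.txt lets `user_agent` fetch `url`.

    A crawler that may not fetch the URL cannot read its HTTP headers,
    so any X-Robots-Tag served there will not be discovered.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks everything under /private/.
robots_txt = """User-agent: *
Disallow: /private/
"""

blocked = directive_is_discoverable(robots_txt, "https://example.com/private/report.pdf")
allowed = directive_is_discoverable(robots_txt, "https://example.com/docs/report.pdf")
print(blocked, allowed)
# False True
```

In other words, a noindex header on `/private/report.pdf` would not be read in this setup; the URL has to be crawlable for the directive to take effect.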

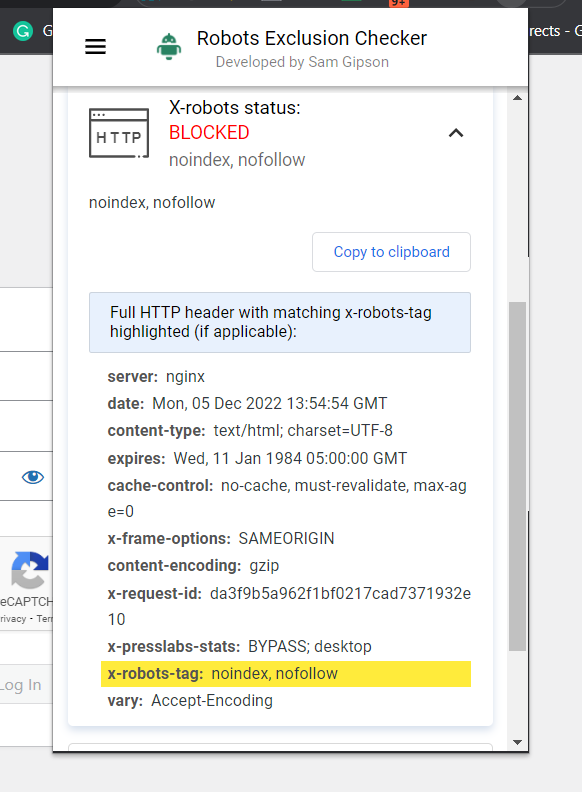

Check For An X-Robots-Tag

There are a few different methods that can be used to check for an X-Robots-Tag on the site.

The easiest way to check is to install a browser extension that will tell you X-Robots-Tag information about the URL.

Screenshot of Robots Exclusion Checker, December 2022

Another plugin you can use to determine whether an X-Robots-Tag is being used is the Web Developer plugin.

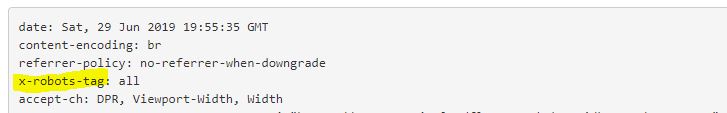

By clicking on the plugin in your browser and navigating to “View Response Headers,” you can see the various HTTP headers being used.

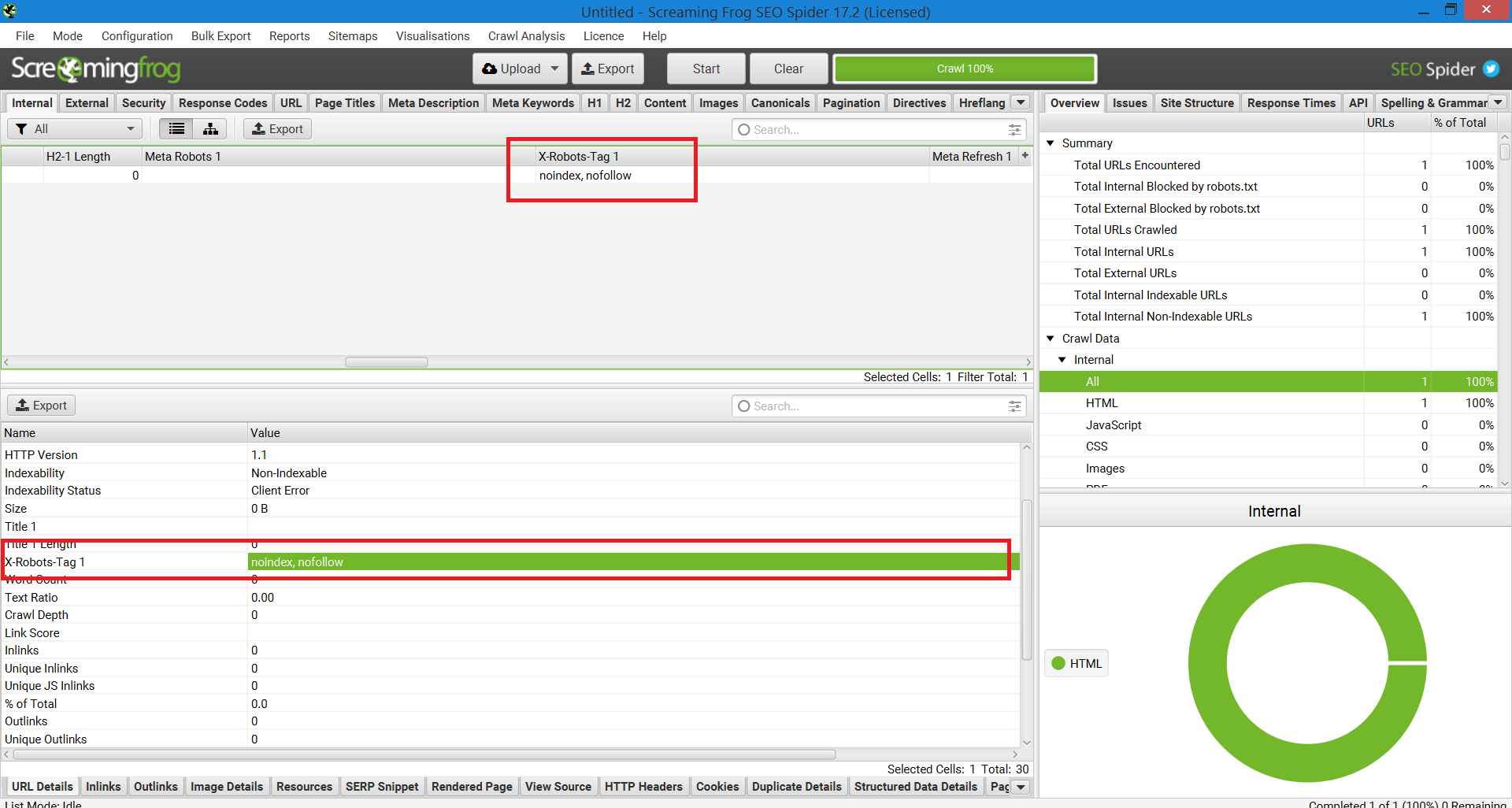
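If you would rather check from a script than from a browser plugin, the same response headers can be read with Python’s standard library. This is a minimal sketch, not a full crawler: the function names are made up for illustration, it sends a HEAD request (which some servers handle differently from GET), and the simple comma split does not attempt to parse `unavailable_after` dates that themselves contain commas.

```python
import urllib.request


def fetch_x_robots_tags(url, timeout=10):
    """Return every X-Robots-Tag header value sent for `url` (may be empty)."""
    request = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.headers.get_all("X-Robots-Tag") or []


def directives_in(header_values):
    """Split header values on commas into a set of lowercase directives."""
    found = set()
    for value in header_values:
        for token in value.split(","):
            token = token.strip().lower()
            if token:
                found.add(token)
    return found


# Offline example using header values as a server might send them.
print(sorted(directives_in(["noindex, nofollow", "NOARCHIVE"])))
# ['noarchive', 'nofollow', 'noindex']

# With network access, you could instead run something like:
# print(directives_in(fetch_x_robots_tags("https://example.com/")))
```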

Another method, which scales well enough to pinpoint issues on websites with a million pages, is Screaming Frog.

After running a site through Screaming Frog, you can navigate to the “X-Robots-Tag” column.

This will show you which sections of the site are using the tag, along with which specific directives.

Screenshot of Screaming Frog Report. X-Robots-Tag, December 2022
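The same kind of per-section overview can be approximated in a few lines of Python once you have a list of URLs and their X-Robots-Tag values from any crawl. The sketch below assumes the data is already available as plain `(url, header_value)` pairs; it does not rely on any particular tool’s export format, and the function name and sample rows are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse


def directives_by_section(rows):
    """Group X-Robots-Tag values by the first path segment of each URL.

    `rows` is an iterable of (url, header_value) pairs; URLs with an
    empty header value are skipped.
    """
    sections = defaultdict(set)
    for url, header_value in rows:
        if not header_value:
            continue
        first_segment = urlparse(url).path.strip("/").split("/")[0]
        sections["/" + first_segment].add(header_value)
    return dict(sections)


# Hypothetical crawl results.
rows = [
    ("https://example.com/docs/a.pdf", "noindex, nofollow"),
    ("https://example.com/docs/b.pdf", "noindex, nofollow"),
    ("https://example.com/images/x.png", "noindex"),
    ("https://example.com/about", ""),
]
summary = directives_by_section(rows)
print(summary)
# {'/docs': {'noindex, nofollow'}, '/images': {'noindex'}}
```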

Using X-Robots-Tags On Your Website

Understanding and controlling how search engines interact with your website is the cornerstone of search engine optimization. And the X-Robots-Tag is a powerful tool you can use to do just that.

Just be aware: It’s not without its dangers. It is very easy to make a mistake and deindex your entire site.

That said, if you’re reading this piece, you’re probably not an SEO beginner. So long as you use it wisely, take your time, and check your work, you’ll find the X-Robots-Tag to be a useful addition to your arsenal.


Featured Image: Song_about_summer/Shutterstock