There are a number of infrastructure as code (IaC) frameworks out there at the moment, that will help you outline your infrastructure, such because the AWS Cloud Growth Equipment (AWS CDK) or Terraform by HashiCorp. Terraform, an AWS Accomplice Community (APN) Superior Expertise Accomplice and member of the AWS DevOps Competency, is an IaC device just like AWS CloudFormation that means that you can create, replace, and model your AWS infrastructure. Terraform offers pleasant syntax (just like AWS CloudFormation) together with different options like planning (visibility to see the modifications earlier than they really occur), graphing, and the flexibility to create templates to interrupt infrastructure configurations into smaller chunks, which permits higher upkeep and reusability. We use the capabilities and options of Terraform to construct an API-based ingestion course of into AWS. Let’s get began!

On this publish, we showcase easy methods to construct and orchestrate a Scala Spark software utilizing Amazon EMR Serverless, AWS Step Features, and Terraform. On this end-to-end answer, we run a Spark job on EMR Serverless that processes pattern clickstream knowledge in an Amazon Easy Storage Service (Amazon S3) bucket and shops the aggregation ends in Amazon S3.

With EMR Serverless, you don’t need to configure, optimize, safe, or function clusters to run functions. You’ll proceed to get the advantages of Amazon EMR, comparable to open supply compatibility, concurrency, and optimized runtime efficiency for widespread knowledge frameworks. EMR Serverless is appropriate for purchasers who need ease in working functions utilizing open-source frameworks. It provides fast job startup, automated capability administration, and easy value controls.

Answer overview

We offer the Terraform infrastructure definition and the supply code for an AWS Lambda perform utilizing pattern buyer consumer clicks for on-line web site inputs, that are ingested into an Amazon Kinesis Knowledge Firehose supply stream. The answer makes use of Kinesis Knowledge Firehose to transform the incoming knowledge right into a Parquet file (an open-source file format for Hadoop) earlier than pushing it to Amazon S3 utilizing the AWS Glue Knowledge Catalog. The generated output S3 Parquet file logs are then processed by an EMR Serverless course of, which outputs a report detailing combination clickstream statistics in an S3 bucket. The EMR Serverless operation is triggered utilizing Step Features. The pattern structure and code are spun up as proven within the following diagram.

The supplied samples have the supply code for constructing the infrastructure utilizing Terraform for working the Amazon EMR software. Setup scripts are supplied to create the pattern ingestion utilizing Lambda for the incoming software logs. For the same ingestion sample pattern, confer with Provision AWS infrastructure utilizing Terraform (By HashiCorp): an instance of net software logging buyer knowledge.

The next are the high-level steps and AWS providers used on this answer:

- The supplied software code is packaged and constructed utilizing Apache Maven.

- Terraform instructions are used to deploy the infrastructure in AWS.

- The EMR Serverless software offers the choice to submit a Spark job.

- The answer makes use of two Lambda features:

- Ingestion – This perform processes the incoming request and pushes the information into the Kinesis Knowledge Firehose supply stream.

- EMR Begin Job – This perform begins the EMR Serverless software. The EMR job course of converts the ingested consumer click on logs into output in one other S3 bucket.

- Step Features triggers the EMR Begin Job Lambda perform, which submits the applying to EMR Serverless for processing of the ingested log recordsdata.

- The answer makes use of 4 S3 buckets:

- Kinesis Knowledge Firehose supply bucket – Shops the ingested software logs in Parquet file format.

- Loggregator supply bucket – Shops the Scala code and JAR for working the EMR job.

- Loggregator output bucket – Shops the EMR processed output.

- EMR Serverless logs bucket – Shops the EMR course of software logs.

- Pattern invoke instructions (run as a part of the preliminary setup course of) insert the information utilizing the ingestion Lambda perform. The Kinesis Knowledge Firehose supply stream converts the incoming stream right into a Parquet file and shops it in an S3 bucket.

For this answer, we made the next design choices:

- We use Step Features and Lambda on this use case to set off the EMR Serverless software. In a real-world use case, the information processing software may very well be lengthy working and will exceed Lambda’s timeout limits. On this case, you should utilize instruments like Amazon Managed Workflows for Apache Airflow (Amazon MWAA). Amazon MWAA is a managed orchestration service makes it simpler to arrange and function end-to-end knowledge pipelines within the cloud at scale.

- The Lambda code and EMR Serverless log aggregation code are developed utilizing Java and Scala, respectively. You need to use any supported languages in these use circumstances.

- The AWS Command Line Interface (AWS CLI) V2 is required for querying EMR Serverless functions from the command line. You too can view these from the AWS Administration Console. We offer a pattern AWS CLI command to check the answer later on this publish.

Stipulations

To make use of this answer, you could full the next stipulations:

- Set up the AWS CLI. For this publish, we used model 2.7.18. That is required to be able to question the

aws emr-serverlessAWS CLI instructions out of your native machine. Optionally, all of the AWS providers used on this publish may be seen and operated through the console. - Be sure to have Java put in, and JDK/JRE 8 is about within the atmosphere path of your machine. For directions, see the Java Growth Equipment.

- Set up Apache Maven. The Java Lambda features are constructed utilizing mvn packages and are deployed utilizing Terraform into AWS.

- Set up the Scala Construct Device. For this publish, we used model 1.4.7. Be sure to obtain and set up based mostly in your working system wants.

- Arrange Terraform. For steps, see Terraform downloads. We use model 1.2.5 for this publish.

- Have an AWS account.

Configure the answer

To spin up the infrastructure and the applying, full the next steps:

- Clone the next GitHub repository.

The suppliedexec.shshell script builds the Java software JAR (for the Lambda ingestion perform) and the Scala software JAR (for the EMR processing) and deploys the AWS infrastructure that’s wanted for this use case. - Run the next instructions:

To run the instructions individually, set the applying deployment Area and account quantity, as proven within the following instance:

The next is the Maven construct Lambda software JAR and Scala software bundle:

- Deploy the AWS infrastructure utilizing Terraform:

Take a look at the answer

After you construct and deploy the applying, you may insert pattern knowledge for Amazon EMR processing. We use the next code for instance. The exec.sh script has a number of pattern insertions for Lambda. The ingested logs are utilized by the EMR Serverless software job.

The pattern AWS CLI invoke command inserts pattern knowledge for the applying logs:

To validate the deployments, full the next steps:

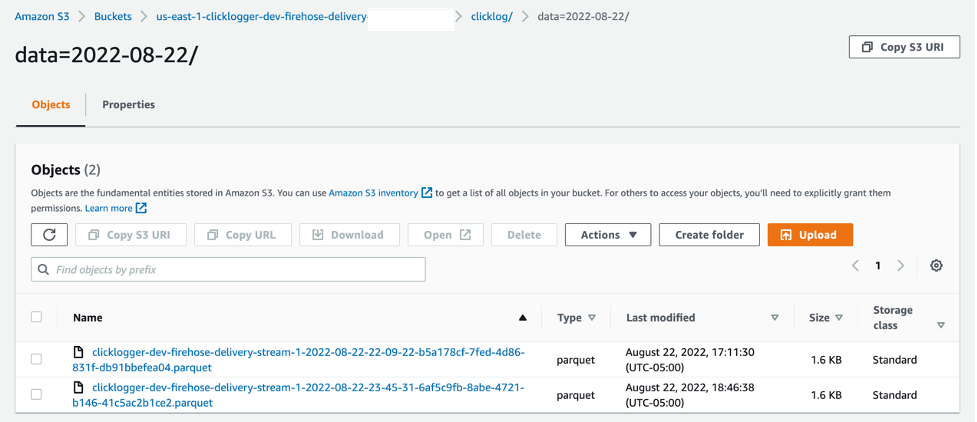

- On the Amazon S3 console, navigate to the bucket created as a part of the infrastructure setup.

- Select the bucket to view the recordsdata.

It’s best to see that knowledge from the ingested stream was transformed right into a Parquet file. - Select the file to view the information.

The next screenshot reveals an instance of our bucket contents.

Now you may run Step Features to validate the EMR Serverless software. - On the Step Features console, open

clicklogger-dev-state-machine.

The state machine reveals the steps to run that set off the Lambda perform and EMR Serverless software, as proven within the following diagram.

- Run the state machine.

- After the state machine runs efficiently, navigate to the

clicklogger-dev-output-bucket on the Amazon S3 console to see the output recordsdata.

- Use the AWS CLI to verify the deployed EMR Serverless software:

- On the Amazon EMR console, select Serverless within the navigation pane.

- Choose

clicklogger-dev-studioand select Handle functions. - The Utility created by the stack will probably be as proven under

clicklogger-dev-loggregator-emr-<Your-Account-Quantity>

Now you may evaluate the EMR Serverless software output.

Now you may evaluate the EMR Serverless software output. - On the Amazon S3 console, open the output bucket (

us-east-1-clicklogger-dev-loggregator-output-).

The EMR Serverless software writes the output based mostly on the date partition, comparable to2022/07/28/response.md.The next code reveals an instance of the file output:

Clear up

The supplied ./cleanup.sh script has the required steps to delete all of the recordsdata from the S3 buckets that had been created as a part of this publish. The terraform destroy command cleans up the AWS infrastructure that you just created earlier. See the next code:

To do the steps manually, you too can delete the sources through the AWS CLI:

Conclusion

On this publish, we constructed, deployed, and ran a knowledge processing Spark job in EMR Serverless that interacts with numerous AWS providers. We walked by way of deploying a Lambda perform packaged with Java utilizing Maven, and a Scala software code for the EMR Serverless software triggered with Step Features with infrastructure as code. You need to use any mixture of relevant programming languages to construct your Lambda features and EMR job software. EMR Serverless may be triggered manually, automated, or orchestrated utilizing AWS providers like Step Features and Amazon MWAA.

We encourage you to check this instance and see for your self how this total software design works inside AWS. Then, it’s simply the matter of changing your particular person code base, packaging it, and letting EMR Serverless deal with the method effectively.

If you happen to implement this instance and run into any points, or have any questions or suggestions about this publish, please depart a remark!

References

In regards to the Authors

Sivasubramanian Ramani (Siva Ramani) is a Sr Cloud Utility Architect at Amazon Net Providers. His experience is in software optimization & modernization, serverless options and utilizing Microsoft software workloads with AWS.

Sivasubramanian Ramani (Siva Ramani) is a Sr Cloud Utility Architect at Amazon Net Providers. His experience is in software optimization & modernization, serverless options and utilizing Microsoft software workloads with AWS.

Naveen Balaraman is a Sr Cloud Utility Architect at Amazon Net Providers. He’s captivated with Containers, serverless Purposes, Architecting Microservices and serving to clients leverage the ability of AWS cloud.

Naveen Balaraman is a Sr Cloud Utility Architect at Amazon Net Providers. He’s captivated with Containers, serverless Purposes, Architecting Microservices and serving to clients leverage the ability of AWS cloud.