Did a human write that, or ChatGPT? It can be hard to tell (perhaps too hard, its creator OpenAI thinks), which is why it’s working on a way to “watermark” AI-generated content.

In a lecture at the University of Austin, computer science professor Scott Aaronson, currently a guest researcher at OpenAI, revealed that OpenAI is developing a tool for “statistically watermarking the outputs of a text [AI system].” Whenever a system (say, ChatGPT) generates text, the tool would embed an “unnoticeable secret signal” indicating where the text came from.

OpenAI engineer Hendrik Kirchner built a working prototype, Aaronson says, and the hope is to build it into future OpenAI-developed systems.

“We want it to be much harder to take [an AI system’s] output and pass it off as if it came from a human,” Aaronson said in his remarks. “This could be helpful for preventing academic plagiarism, obviously, but also, for example, mass generation of propaganda: you know, spamming every blog with seemingly on-topic comments supporting Russia’s invasion of Ukraine without even a building full of trolls in Moscow. Or impersonating someone’s writing style in order to incriminate them.”

Exploiting randomness

Why the need for a watermark? ChatGPT is a strong example. The chatbot developed by OpenAI has taken the internet by storm, showing an aptitude not only for answering challenging questions but also for writing poetry, solving programming puzzles and waxing poetic on any number of philosophical topics.

While ChatGPT is highly amusing, and genuinely useful, the system raises obvious ethical concerns. Like many of the text-generating systems before it, ChatGPT could be used to write high-quality phishing emails and harmful malware, or to cheat at school assignments. And as a question-answering tool, it’s factually inconsistent, a shortcoming that led programming Q&A site Stack Overflow to ban answers originating from ChatGPT until further notice.

To understand the technical underpinnings of OpenAI’s watermarking tool, it’s helpful to know why systems like ChatGPT work as well as they do. These systems understand input and output text as strings of “tokens,” which can be words but also punctuation marks and parts of words. At their cores, the systems are constantly generating a mathematical function called a probability distribution to decide the next token (e.g., word) to output, taking into account all previously outputted tokens.

In the case of OpenAI-hosted systems like ChatGPT, after the distribution is generated, OpenAI’s server does the job of sampling tokens according to the distribution. There’s some randomness in this selection; that’s why the same text prompt can yield a different response.
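The sampling step can be illustrated with a minimal Python sketch. The three-token distribution and the `sample_next_token` helper are hypothetical stand-ins invented for illustration; a real system computes a fresh distribution over a very large vocabulary at every step.

```python
import random

def sample_next_token(probs, rng):
    """Draw one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical distribution for the token after "The cat sat on the".
next_token_probs = {"mat": 0.6, "sofa": 0.3, "roof": 0.1}

# Unseeded generator: repeated runs of the same prompt may differ.
rng = random.Random()
for _ in range(3):
    print(sample_next_token(next_token_probs, rng))
```

Because the draw is random, "mat" is only the most likely continuation, not a guaranteed one. Seeding the generator with a fixed value would make the output repeatable, which hints at how replacing true randomness with a keyed function could leave a detectable trace.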

OpenAI’s watermarking tool acts like a “wrapper” over existing text-generating systems, Aaronson said during the lecture, leveraging a cryptographic function running at the server level to “pseudorandomly” select the next token. In theory, text generated by the system would still look random to you or me, but anyone possessing the “key” to the cryptographic function would be able to uncover a watermark.

“Empirically, a few hundred tokens seem to be enough to get a reasonable signal that yes, this text came from [an AI system]. In principle, you could even take a long text and isolate which parts probably came from [the system] and which parts probably didn’t,” Aaronson said. “[The tool] can do the watermarking using a secret key and it can check for the watermark using the same key.”
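To make the idea of keyed, pseudorandom token selection concrete, here is a toy Python sketch. It is an illustration under stated assumptions, not OpenAI’s implementation: the fixed `TOY_PROBS` distribution, the HMAC-based `keyed_uniform` function, the selection rule (pick the token maximizing log(r)/p) and the scoring formula are all choices made for this example. The selection rule has the property that, if the values r were truly uniform, each token would be picked with exactly its probability p.

```python
import hashlib
import hmac
import math

# Hypothetical toy "model": one fixed next-token distribution (sums to 1).
TOY_PROBS = {
    "the": 0.25, "a": 0.20, "cat": 0.15, "dog": 0.10,
    "sat": 0.10, "ran": 0.10, "on": 0.05, "mat": 0.05,
}

def keyed_uniform(secret, prefix, token):
    """Keyed pseudorandom value strictly between 0 and 1."""
    msg = "\x1f".join(prefix + [token]).encode("utf-8")
    digest = hmac.new(secret, msg, hashlib.sha256).digest()
    n = int.from_bytes(digest[:8], "big") >> 12  # keep 52 bits
    return (n + 0.5) / 2**52

def generate(secret, length):
    """Pick each token by maximizing log(r) / p instead of sampling."""
    tokens = []
    for _ in range(length):
        best = max(
            TOY_PROBS,
            key=lambda t: math.log(keyed_uniform(secret, tokens, t)) / TOY_PROBS[t],
        )
        tokens.append(best)
    return tokens

def detection_score(secret, tokens):
    """Average of -log(1 - r) over the text, recomputed with the key."""
    total = 0.0
    for i, tok in enumerate(tokens):
        r = keyed_uniform(secret, tokens[:i], tok)
        total += -math.log(1.0 - r)
    return total / len(tokens)

text = generate(b"secret-key", 300)
print(detection_score(b"secret-key", text))  # well above 1 with the right key
print(detection_score(b"other-key", text))   # close to 1 with any other key
```

Text that was not produced with the key averages about 1 per token on this score, so a clearly higher average over a few hundred tokens is the "signal". Hashing the full prefix keeps the toy simple, but it is a simplification made for this sketch.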

Key limitations

Watermarking AI-generated text isn’t a new idea. Previous attempts, most of them rules-based, have relied on techniques like synonym substitutions and syntax-specific word changes. But outside of theoretical research published by the German institute CISPA last March, OpenAI’s appears to be one of the first cryptography-based approaches to the problem.

When contacted for comment, Aaronson declined to reveal more about the watermarking prototype, save that he expects to co-author a research paper in the coming months. OpenAI also declined, saying only that watermarking is among several “provenance techniques” it’s exploring to detect outputs generated by AI.

Unaffiliated academics and industry experts, however, shared mixed opinions. They note that the tool is server-side, meaning it wouldn’t necessarily work with all text-generating systems. And they argue that it’d be trivial for adversaries to work around.

“I think it would be fairly easy to get around it by rewording, using synonyms, etc.,” Srini Devadas, a computer science professor at MIT, told TechCrunch via email. “This is a bit of a tug of war.”

Jack Hessel, a research scientist at the Allen Institute for AI, pointed out that it’d be difficult to imperceptibly fingerprint AI-generated text because each token is a discrete choice. Too obvious a fingerprint might result in odd words being chosen that degrade fluency, while too subtle a fingerprint would leave room for doubt when the fingerprint is sought out.

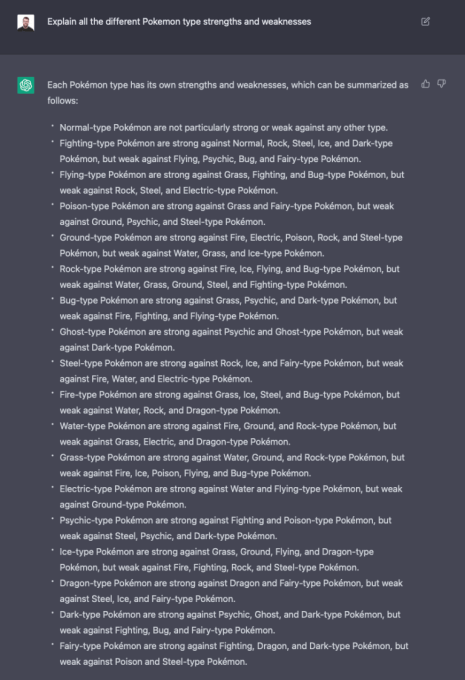

ChatGPT answering a question.

Yoav Shoham, the co-founder and co-CEO of AI21 Labs, an OpenAI rival, doesn’t think that statistical watermarking will be enough to help identify the source of AI-generated text. He calls for a “more comprehensive” approach that includes differential watermarking, in which different parts of text are watermarked differently, and AI systems that more accurately cite the sources of factual text.

This specific watermarking technique also requires placing a lot of trust, and power, in OpenAI, experts noted.

“An ideal fingerprinting would not be discernible by a human reader and enable highly confident detection,” Hessel said via email. “Depending on how it’s set up, it could be that OpenAI themselves might be the only party able to confidently provide that detection because of how the ‘signing’ process works.”

In his lecture, Aaronson acknowledged the scheme would only really work in a world where companies like OpenAI are ahead in scaling up state-of-the-art systems and they all agree to be responsible players. Even if OpenAI were to share the watermarking tool with other text-generating system providers, like Cohere and AI21 Labs, this wouldn’t prevent others from choosing not to use it.

“If [it] becomes a free-for-all, then a lot of the safety measures do become harder, and might even be impossible, at least without government regulation,” Aaronson said. “In a world where anyone could build their own text model that was just as good as [ChatGPT, for example] … what would you do there?”

That’s how it’s played out in the text-to-image domain. Unlike OpenAI, whose DALL-E 2 image-generating system is only available through an API, Stability AI open-sourced its text-to-image tech (called Stable Diffusion). While DALL-E 2 has a number of filters at the API level to prevent problematic images from being generated (plus watermarks on images it generates), the open source Stable Diffusion does not. Bad actors have used it to create deepfaked porn, among other toxicity.

For his part, Aaronson is optimistic. In the lecture, he expressed the belief that, if OpenAI can demonstrate that watermarking works and doesn’t impact the quality of the generated text, it has the potential to become an industry standard.

Not everyone agrees. As Devadas points out, the tool needs a key, meaning it can’t be completely open source, potentially limiting its adoption to organizations that agree to partner with OpenAI. (If the key were to be made public, anyone could deduce the pattern behind the watermarks, defeating their purpose.)

But it might not be so far-fetched. A representative for Quora said the company would be interested in using such a system, and it likely wouldn’t be the only one.

“You could worry that all this stuff about trying to be safe and responsible when scaling AI … as soon as it seriously hurts the bottom lines of Google and Meta and Alibaba and the other major players, a lot of it will go out the window,” Aaronson said. “On the other hand, we’ve seen over the past 30 years that the big Internet companies can agree on certain minimal standards, whether because of fear of getting sued, desire to be seen as a responsible player, or whatever else.”