In the future, we imagine that teams of robots will explore and develop the surface of nearby planets, moons and asteroids: taking samples, building structures, deploying instruments. Hundreds of bright research minds are busy designing such robots. We are interested in another question: how do we give astronauts the tools to operate their robot teams efficiently on the planetary surface, in a way that doesn’t frustrate or exhaust them?

Received wisdom says that more automation is always better. After all, with automation, the job usually gets done faster, and the more tasks (or sub-tasks) robots can do on their own, the lower the workload on the operator. Imagine a robot building a structure or setting up a telescope array, planning and executing tasks by itself, similar to a “factory of the future”, with only sporadic input from an astronaut supervisor orbiting in a spaceship. This is something we tested in the ISS experiment SUPVIS Justin in 2017-18, with astronauts on board the ISS commanding the DLR Robotics and Mechatronics Center’s humanoid robot, Rollin’ Justin, in Supervised Autonomy.

However, the unstructured environment and harsh lighting on planetary surfaces make things difficult for even the best object-detection algorithms. And what happens when things go wrong, or a task needs to be done that was not foreseen by the robot programmers? In a factory on Earth, the supervisor might go down to the shop floor to set things right. That is an expensive and dangerous trip if you are an astronaut!

The next best thing is to operate the robot as an avatar of yourself on the planet surface, seeing what it sees and feeling what it feels. Immersing yourself in the robot’s environment, you can command the robot to do exactly what you want, subject to its physical capabilities.

Space Experiments

In 2019, we tested this in our next ISS experiment, ANALOG-1, with the Interact Rover from ESA’s Human Robot Interaction Lab. This is an all-wheel-drive platform with two robot arms, both equipped with cameras and one fitted with a gripper and force-torque sensor, as well as numerous other sensors.

On a laptop screen on the ISS, the astronaut, Luca Parmitano, saw the views from the robot’s cameras, and could move one camera and drive the platform with a custom-built joystick. The manipulator arm was controlled with the sigma.7 force-feedback device: the astronaut strapped his hand to it, and could move the robot arm and open its gripper by moving and opening his own hand. He could also feel the forces from touching the ground or the rock samples. This was crucial for helping him understand the situation, since the low bandwidth to the ISS limited the quality of the video feed.

There were other challenges. Over such large distances, delays of up to a second are typical, which means that traditional teleoperation with force-feedback might have become unstable. Furthermore, the time delay between the robot making contact with the environment and the astronaut feeling it can lead to dangerous motions which can damage the robot.

To help with this we developed a control method: the Time Domain Passivity Approach for High Delays (TDPA-HD). It monitors the amount of energy that the operator puts in (i.e. force multiplied by velocity, integrated over time), and sends that value along with the velocity command. On the robot side, it measures the force that the robot is exerting, and reduces the velocity so that the robot doesn’t transfer more energy to the environment than the operator put in.

On the human’s side, it reduces the force-feedback to the operator so that no more energy is transferred to the operator than is measured from the environment. This means that the system stays stable, but also that the operator never accidentally commands the robot to exert more force on the environment than they intend to, keeping both operator and robot safe.
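The robot-side energy bookkeeping described above can be illustrated with a deliberately simplified, one-dimensional sketch. This is not the TDPA-HD implementation: the names `EnergyTank`, `operator_energy` and `limit_velocity` are hypothetical, the sign convention (positive force times velocity means energy flowing into the environment) is an assumption, and the handling of communication delay and of the operator-side force reduction is left out entirely.

```python
from dataclasses import dataclass


@dataclass
class EnergyTank:
    """Hypothetical bookkeeping of operator energy versus robot energy."""
    received: float = 0.0  # cumulative operator energy received so far [J]
    spent: float = 0.0     # cumulative energy the robot has delivered [J]


def operator_energy(prev_energy: float, force: float,
                    velocity: float, dt: float) -> float:
    """Operator side: integrate input power (force * velocity) over time.

    Only positive power is counted here, which is a simplifying assumption.
    """
    return prev_energy + max(force * velocity, 0.0) * dt


def limit_velocity(tank: EnergyTank, commanded_velocity: float,
                   measured_force: float, dt: float) -> float:
    """Robot side: scale the velocity so the energy delivered in this
    time step does not exceed what the operator has put in so far."""
    power = measured_force * commanded_velocity
    if power <= 0.0:
        # No energy flows into the environment; pass the command through.
        return commanded_velocity
    budget = max(tank.received - tank.spent, 0.0)
    scale = min(1.0, budget / (power * dt))
    velocity = commanded_velocity * scale
    tank.spent += measured_force * velocity * dt
    return velocity


if __name__ == "__main__":
    # Operator pushes with 2 N at 0.5 m/s for 0.1 s, i.e. 0.1 J of input.
    tank = EnergyTank(received=operator_energy(0.0, 2.0, 0.5, 0.1))
    # Robot pushes with 4 N; the 1 m/s command would cost 0.4 J this step.
    print(limit_velocity(tank, 1.0, 4.0, 0.1))
    # The budget is now used up, so the next command is scaled to zero.
    print(limit_velocity(tank, 1.0, 4.0, 0.1))
```

Scaling the velocity rather than rejecting the command outright keeps the motion smooth: the robot slows down as the energy budget runs out instead of stopping abruptly.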

This was the first time that an astronaut had teleoperated a robot from space while feeling force-feedback in all six degrees of freedom (three rotational, three translational). The astronaut completed all the sampling tasks assigned to him, while we were able to gather valuable data to validate our method and publish it in Science Robotics. We also reported our findings on the astronaut’s experience.

Some things were still lacking. The experiment was conducted in a hangar on an old Dutch air base, which is not really representative of a planet surface.

Also, the astronaut asked if the robot could do more on its own. This was in contrast to SUPVIS Justin, where the astronauts sometimes found the Supervised Autonomy interface limiting and wished for more immersion. What if the operator could choose the level of robot autonomy appropriate to the task?

Scalable Autonomy

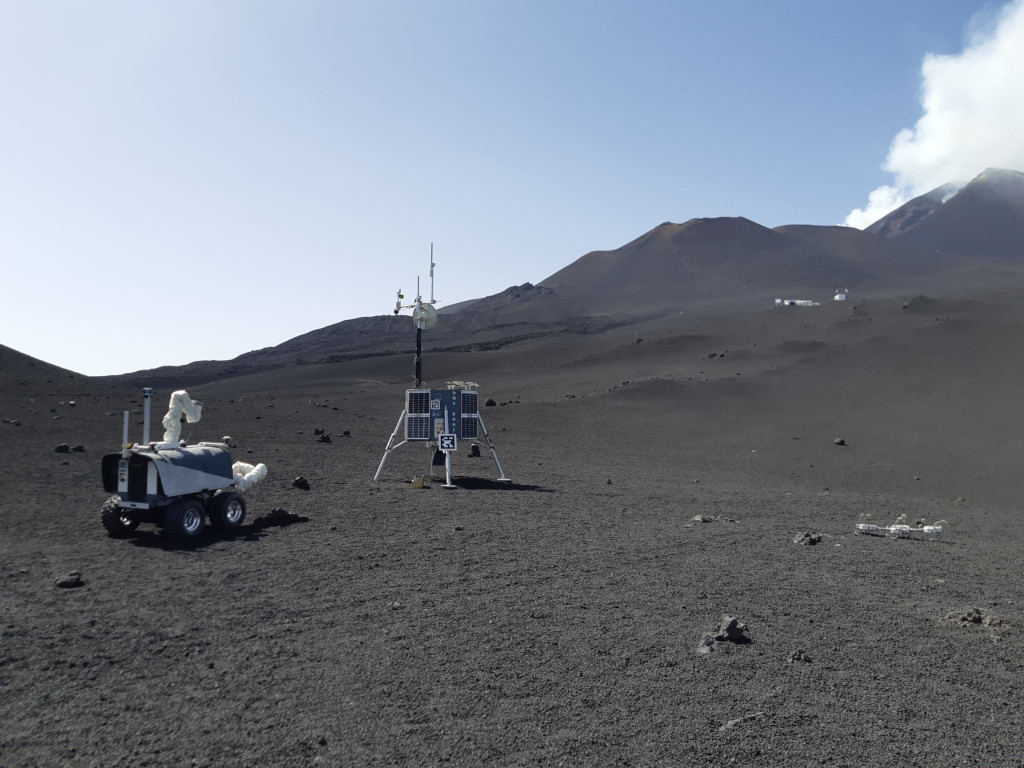

In June and July 2022, we joined the DLR’s ARCHES experiment campaign on Mt. Etna. The robot, on a lava field 2,700 metres above sea level, was controlled by former astronaut Thomas Reiter from the control room in the nearby town of Catania. Looking through the robot’s cameras, it wasn’t a great leap of the imagination to picture yourself on another planet, save for the occasional bumblebee or group of tourists.

This was our first venture into “Scalable Autonomy”: allowing the astronaut to scale the robot’s autonomy up or down, according to the task. In 2019, Luca could only see through the robot’s cameras and drive with a joystick; this time Thomas Reiter had an interactive map, on which he could place markers for the robot to drive to automatically. In 2019, the astronaut could control the robot arm with force feedback; he could now also have rocks detected and collected automatically with help from a Mask R-CNN (region-based convolutional neural network).

We learned a lot from testing our system in a realistic environment. Not least, that the assumption that more automation means a lower astronaut workload is not always true. While the astronaut used the automated rock-picking a lot, he warmed less to the automated navigation, indicating that it was more effort than driving with the joystick. We suspect that many more factors come into play, including how much the astronaut trusts the automated system, how well it works, and the feedback that the astronaut gets from it on screen, not to mention the delay. The longer the delay, the more difficult it is to create an immersive experience (think of online video games with lots of lag) and therefore the more attractive autonomy becomes.

What are the next steps? We want to test a truly scalable-autonomy, multi-robot scenario. We are working towards this in the project Surface Avatar: in a large-scale Mars-analog environment, astronauts on the ISS will command a team of four robots on the ground. After two preliminary tests with astronauts Samantha Cristoforetti and Jessica Watkins in 2022, the first big experiment is planned for 2023.

Here the technical challenges are different. Beyond the formidable engineering challenge of getting four robots to work together with a shared understanding of their world, we also have to try to predict which tasks would be easier for the astronaut at which level of autonomy, when and how she could scale the autonomy up or down, and integrate all of this into one intuitive user interface.

The insights we hope to gain from this would be useful not only for space exploration, but for any operator commanding a team of robots at a distance: for maintenance of solar or wind energy parks, for example, or search and rescue missions. A space experiment of this type and scale will be our most complex ISS telerobotic mission yet, but we are looking forward to the exciting challenge ahead.

tags: c-Space

Aaron Pereira

is a researcher at the German Aerospace Center (DLR) and a guest researcher at ESA’s Human Robot Interaction Lab.

Neal Y. Lii

is the domain head of Space Robotic Assistance, and the co-founding head of the Modular Dexterous (Modex) Robotics Laboratory at the German Aerospace Center (DLR).

Thomas Krueger

is head of the Human Robot Interaction Lab at ESA.