Hashing is a core operation in most online databases, like a library catalog or an e-commerce website. A hash function generates codes that replace data inputs. Since these codes are shorter than the actual data, and usually a fixed length, this makes it easier to find and retrieve the original information.

However, because traditional hash functions generate codes randomly, sometimes two pieces of data can be hashed to the same value. This causes collisions, in which a search for one item points a user to many pieces of data with the same hash value. It then takes much longer to find the right one, resulting in slower searches and reduced performance.

Certain types of hash functions, known as perfect hash functions, are designed to sort data in a way that prevents collisions. But they must be specially constructed for each dataset and take more time to compute than traditional hash functions.

Since hashing is used in so many applications, from database indexing to data compression to cryptography, fast and efficient hash functions are critical. So, researchers from MIT and elsewhere set out to see if they could use machine learning to build better hash functions.

They found that, in certain situations, using learned models instead of traditional hash functions could result in half as many collisions. Learned models are those that have been created by running a machine-learning algorithm on a dataset. Their experiments also showed that learned models were often more computationally efficient than perfect hash functions.

“What we found in this work is that in some situations we can come up with a better tradeoff between the computation of the hash function and the collisions we will face. We can increase the computational time for the hash function a bit, but at the same time we can reduce collisions very significantly in certain situations,” says Ibrahim Sabek, a postdoc in the MIT Data Systems Group of the Computer Science and Artificial Intelligence Laboratory (CSAIL).

Their research, which will be presented at the International Conference on Very Large Databases, demonstrates how a hash function can be designed to significantly speed up searches in a huge database. For instance, their technique could accelerate computational systems that scientists use to store and analyze DNA, amino acid sequences, or other biological information.

Sabek is co-lead author of the paper with electrical engineering and computer science (EECS) graduate student Kapil Vaidya. They are joined by co-authors Dominick Horn, a graduate student at the Technical University of Munich; Andreas Kipf, an MIT postdoc; Michael Mitzenmacher, professor of computer science at the Harvard John A. Paulson School of Engineering and Applied Sciences; and senior author Tim Kraska, associate professor of EECS at MIT and co-director of the Data Systems and AI Lab.

Hashing it out

Given a data input, or key, a traditional hash function generates a random number, or code, that corresponds to the slot where that key will be stored. To use a simple example, if there are 10 keys to be put into 10 slots, the function would generate a random integer between 1 and 10 for each input. It is highly probable that two keys will end up in the same slot, causing collisions.
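The 10-keys-in-10-slots example can be checked with a little arithmetic. The sketch below assumes each key independently picks a slot uniformly at random, which is an idealization of a traditional hash function; the name `prob_no_collision` is made up for illustration.

```python
def prob_no_collision(num_keys: int, num_slots: int) -> float:
    """Chance that every key lands in its own slot when slots are picked uniformly at random."""
    if num_keys > num_slots:
        return 0.0  # pigeonhole: more keys than slots forces a collision
    p = 1.0
    for already_placed in range(num_keys):
        # The next key must avoid every slot that is already occupied.
        p *= (num_slots - already_placed) / num_slots
    return p


p = prob_no_collision(10, 10)
print(f"P(no collision)   = {p:.6f}")  # 10! / 10**10, about 0.000363
print(f"P(some collision) = {1 - p:.6f}")
```

Under that assumption, fewer than 1 in 2,000 random assignments of 10 keys to 10 slots would be collision-free.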

Perfect hash functions provide a collision-free alternative. Researchers give the function some extra knowledge, such as the number of slots the data are to be placed into. Then it can perform additional computations to figure out where to put each key to avoid collisions. However, these added computations make the function harder to create and less efficient.
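To give a feel for why a collision-free function has to be built per dataset, here is a toy sketch. It is not one of the perfect hashing schemes the researchers evaluated: it simply retries random parameters of a standard multiply-add hash until no two keys in a fixed set share a slot. The key values, `PRIME`, and both function names are assumptions chosen for illustration.

```python
import random

PRIME = (1 << 61) - 1  # a Mersenne prime larger than any key used here


def find_perfect_hash(keys, max_attempts=100_000, seed=0):
    """Search for (a, b) so that ((a*k + b) % PRIME) % len(keys) is collision-free."""
    num_slots = len(keys)
    rng = random.Random(seed)
    for attempt in range(1, max_attempts + 1):
        a = rng.randrange(1, PRIME)
        b = rng.randrange(0, PRIME)
        slots = {((a * k + b) % PRIME) % num_slots for k in keys}
        if len(slots) == num_slots:  # every key got its own slot
            return a, b, attempt
    raise RuntimeError("no collision-free parameters found; raise max_attempts")


def perfect_hash(key, a, b, num_slots):
    """Apply the parameters found for this specific key set."""
    return ((a * key + b) % PRIME) % num_slots


keys = [17, 42, 256, 1031, 4099, 65537, 99991, 123457]
a, b, attempts = find_perfect_hash(keys)
print(f"found collision-free parameters after {attempts} attempts")
print(sorted(perfect_hash(k, a, b, len(keys)) for k in keys))  # each slot 0..7 appears once
```

The parameters only work for this exact key set and slot count; changing either means searching again, which is the construction cost the paragraph above describes.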

“We were wondering, if we know more about the data, that it will come from a particular distribution, can we use learned models to build a hash function that can actually reduce collisions?” Vaidya says.

A data distribution shows all possible values in a dataset, and how often each value occurs. The distribution can be used to calculate the probability that a particular value is in a data sample.
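As a small illustration of that definition, the sketch below counts how often each value occurs in a made-up list and turns the counts into probabilities. The cumulative version, the fraction of values at or below a given value, is included because it describes where a value sits within the data; the data and function names are assumptions.

```python
from collections import Counter

data = [1, 2, 2, 3, 3, 3, 4, 4, 4, 4]  # made-up sample

counts = Counter(data)
total = len(data)


def probability(value):
    """Fraction of the data equal to `value`."""
    return counts[value] / total


def cdf(value):
    """Fraction of the data less than or equal to `value`."""
    return sum(c for v, c in counts.items() if v <= value) / total


print(probability(3))  # 0.3
print(cdf(3))          # 0.6
```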

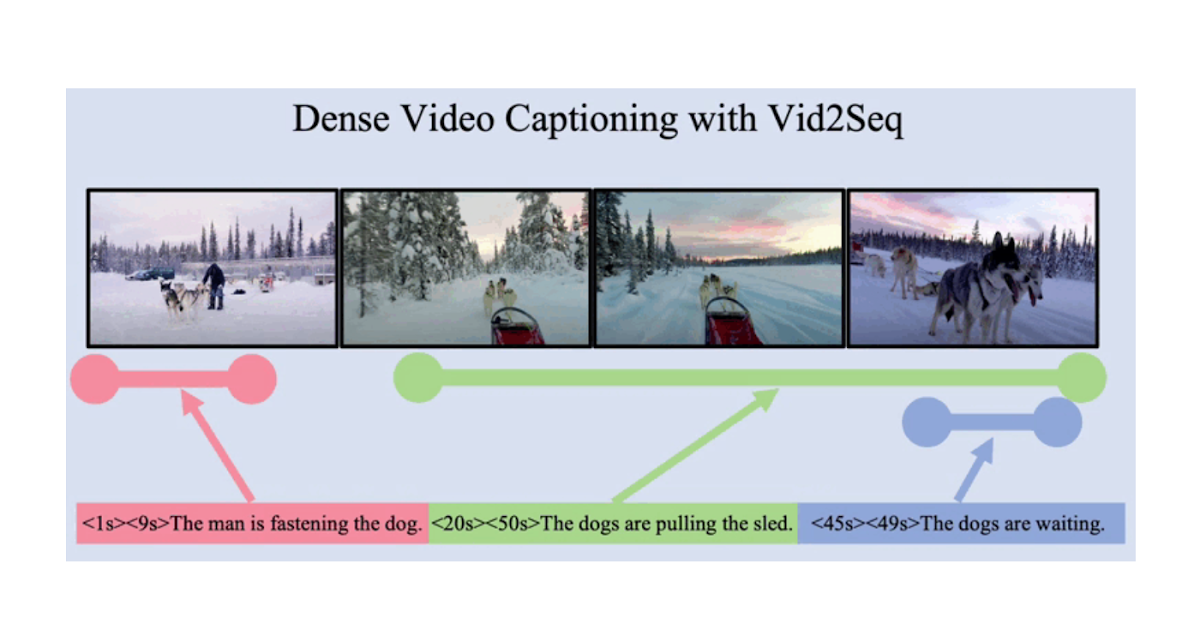

The researchers took a small sample from a dataset and used machine learning to approximate the shape of the data’s distribution, or how the data are spread out. The learned model then uses the approximation to predict the location of a key in the dataset.
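A minimal sketch of that idea, assuming the simplest possible model: a single least-squares line fitted to a random sample, estimating what fraction of the data lies below a given key. Scaling that estimate by the number of slots gives a slot index. The dataset, sample size, and the names `fit_linear_cdf` and `learned_hash` are illustrative assumptions, not the researchers' implementation.

```python
import random


def fit_linear_cdf(sample):
    """Least-squares line mapping a key to its estimated position in [0, 1]."""
    xs = sorted(sample)
    n = len(xs)
    ys = [(i + 0.5) / n for i in range(n)]  # relative rank of each sampled key
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = cov_xy / var_x  # assumes the sample holds at least two distinct keys
    intercept = mean_y - slope * mean_x
    return slope, intercept


def learned_hash(key, model, num_slots):
    """Predict the key's relative position, then scale it to a slot index."""
    slope, intercept = model
    position = min(max(slope * key + intercept, 0.0), 1.0)
    return min(int(position * num_slots), num_slots - 1)


rng = random.Random(0)
keys = list(range(1000, 11000, 10))  # 1,000 evenly spaced keys (assumed data)
sample = rng.sample(keys, 100)       # small sample used to fit the model
model = fit_linear_cdf(sample)
num_slots = len(keys)
slots = [learned_hash(k, model, num_slots) for k in keys]
print(slots[:5], slots[-5:])
```

Unlike a random hash, this mapping preserves order: a larger key never lands in an earlier slot.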

They found that learned models were easier to build and faster to run than perfect hash functions, and that they led to fewer collisions than traditional hash functions if data are distributed in a predictable way. But if the data are not predictably distributed, because gaps between data points vary too widely, using learned models might cause more collisions.
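The effect of gap size can be seen in a toy comparison. In this sketch the learned side is reduced to a single straight line through the smallest and largest key, standing in for a trained model, and BLAKE2b stands in for a traditional hash function. Both datasets are synthetic and all function names are assumptions.

```python
import hashlib
import random
from collections import Counter


def traditional_hash(key, num_slots):
    """Pseudo-random slot from a general-purpose hash (BLAKE2b stands in here)."""
    digest = hashlib.blake2b(str(key).encode(), digest_size=8).digest()
    return int.from_bytes(digest, "big") % num_slots


def linear_model_hash(key, lo, hi, num_slots):
    """Slot from a straight-line estimate of the key's position between lo and hi."""
    position = (key - lo) / (hi - lo)
    return min(int(position * num_slots), num_slots - 1)


def colliding_fraction(slots):
    """Fraction of keys that share their slot with at least one other key."""
    counts = Counter(slots)
    return sum(c for c in counts.values() if c > 1) / len(slots)


def compare(keys):
    """Return (learned, traditional) colliding fractions for one key set."""
    n, lo, hi = len(keys), min(keys), max(keys)
    learned = colliding_fraction([linear_model_hash(k, lo, hi, n) for k in keys])
    traditional = colliding_fraction([traditional_hash(k, n) for k in keys])
    return learned, traditional


rng = random.Random(0)
n = 1000
even_keys = [5000 + 10 * i for i in range(n)]  # constant gap between keys

skewed_keys, key = [], 0
for _ in range(n):
    key += int(rng.paretovariate(1.0)) + 1  # heavy-tailed gaps
    skewed_keys.append(key)

print("even gaps   (learned, traditional):", compare(even_keys))
print("skewed gaps (learned, traditional):", compare(skewed_keys))
```

With a constant gap, the straight line places every key in its own slot. With heavy-tailed gaps, many keys crowd into the same few slots, because one line cannot follow a distribution whose spacing varies that much.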

“We may have a huge number of data inputs, and each one has a different gap between it and the next one, so learning that is quite difficult,” Sabek explains.

Fewer collisions, faster results

When data were predictably distributed, learned models could reduce the ratio of colliding keys in a dataset from 30 percent to 15 percent, compared with traditional hash functions. They were also able to achieve better throughput than perfect hash functions. In the best cases, learned models reduced the runtime by nearly 30 percent.

As they explored the use of learned models for hashing, the researchers also found that throughput was affected most by the number of sub-models. Each learned model is composed of smaller linear models that approximate the data distribution. With more sub-models, the learned model produces a more accurate approximation, but it takes more time.
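The accuracy side of that tradeoff can be sketched with a piecewise-linear model. The version below is an assumption made for illustration, not the researchers' architecture: it splits the sorted keys into equal-count segments and uses one straight line per segment to predict a key's rank. It reports only approximation error, not running time.

```python
from bisect import bisect_right


def build_submodels(sorted_keys, num_submodels):
    """Split the keys into equal-count segments; keep each segment's endpoints."""
    last = len(sorted_keys) - 1
    bounds = [j * last // num_submodels for j in range(num_submodels + 1)]
    return [(sorted_keys[b], b) for b in bounds]  # (key, rank) knots


def predict_rank(key, knots):
    """Pick the segment containing `key`, then interpolate linearly inside it."""
    knot_keys = [k for k, _ in knots]
    seg = min(max(bisect_right(knot_keys, key) - 1, 0), len(knots) - 2)
    (k0, r0), (k1, r1) = knots[seg], knots[seg + 1]
    return r0 + (key - k0) / (k1 - k0) * (r1 - r0)


def max_rank_error(sorted_keys, num_submodels):
    """Largest gap between a key's predicted rank and its true rank."""
    knots = build_submodels(sorted_keys, num_submodels)
    return max(abs(predict_rank(k, knots) - i) for i, k in enumerate(sorted_keys))


keys = [i * i for i in range(1025)]  # gaps grow steadily, so one line fits poorly
for m in (1, 4, 16):
    print(m, "sub-models -> max rank error", round(max_rank_error(keys, m), 1))
```

On this synthetic key set the worst-case error shrinks as sub-models are added, while each lookup also has to find the right segment first, which is where the extra time goes.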

“At a certain threshold of sub-models, you get enough information to build the approximation that you need for the hash function. But after that, it won’t lead to more improvement in collision reduction,” Sabek says.

Building off this analysis, the researchers want to use learned models to design hash functions for other types of data. They also plan to explore learned hashing for databases in which data can be inserted or deleted. When data are updated in this way, the model needs to change accordingly, but changing the model while maintaining accuracy is a difficult problem.

“We want to encourage the community to use machine learning inside more fundamental data structures and operations. Any kind of core data structure presents us with an opportunity to use machine learning to capture data properties and get better performance. There is still a lot we can explore,” Sabek says.

This work was supported, in part, by Google, Intel, Microsoft, the National Science Foundation, the United States Air Force Research Laboratory, and the United States Air Force Artificial Intelligence Accelerator.