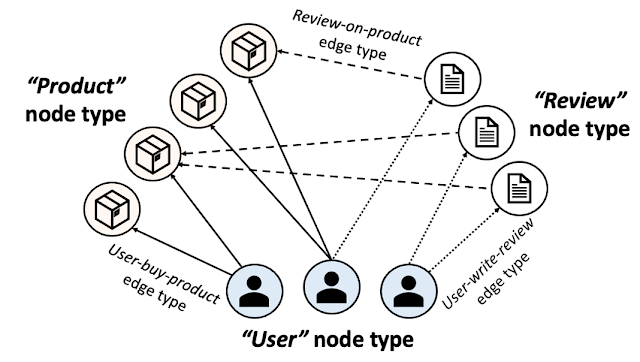

Industrial applications of machine learning are commonly composed of various items that have differing data modalities or feature distributions. Heterogeneous graphs (HGs) offer a unified view of these multimodal data systems by defining multiple types of nodes (for each data type) and edges (for the relation between data items). For instance, e-commerce networks might have [user, product, review] nodes or video platforms might have [channel, user, video, comment] nodes. Heterogeneous graph neural networks (HGNNs) learn node embeddings that summarize each node’s relationships into a vector. However, in real-world HGs, there is often a label imbalance issue between different node types. This means that label-scarce node types cannot exploit HGNNs, which hampers the broader applicability of HGNNs.

In “Zero-shot Transfer Learning within a Heterogeneous Graph via Knowledge Transfer Networks”, presented at NeurIPS 2022, we propose a model called a Knowledge Transfer Network (KTN), which transfers knowledge from label-abundant node types to zero-labeled node types using the rich relational information given in a HG. We describe how we pre-train a HGNN model without the need for fine-tuning. KTNs outperform state-of-the-art transfer learning baselines by up to 140% on zero-shot learning tasks, and can be used to improve many existing HGNN models on these tasks by 24% (or more).

KTNs transform labels from one type of information (squares) through a graph to another type (stars).

What’s a heterogeneous graph?

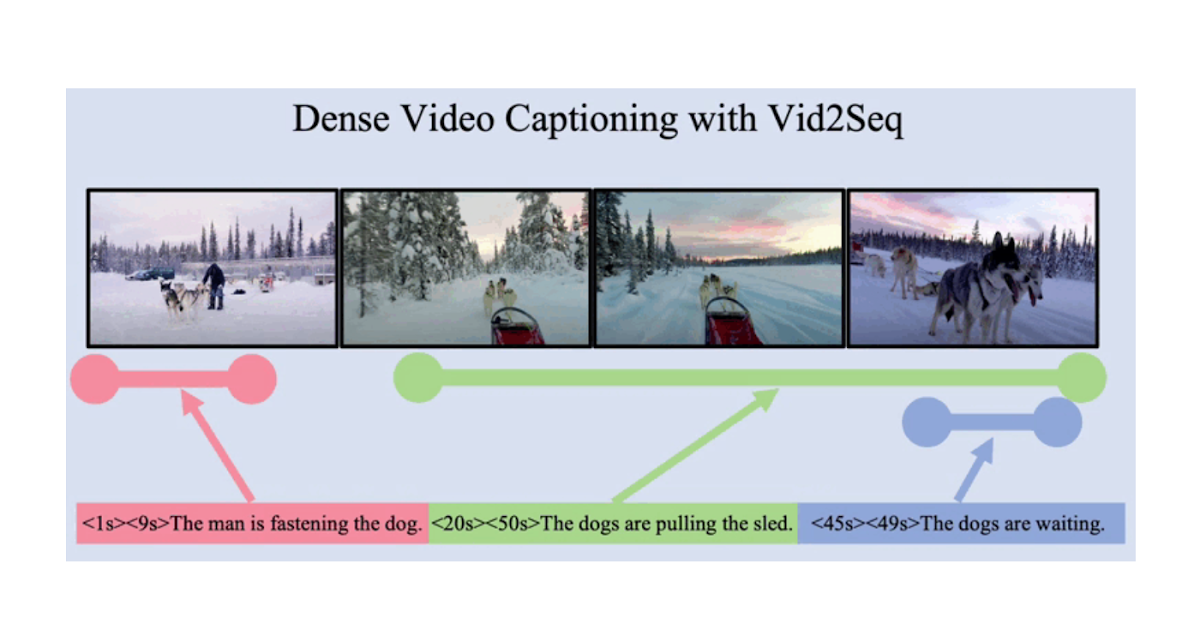

A HG is composed of multiple node and edge types. The figure below shows an e-commerce network presented as a HG. In e-commerce, “users” purchase “products” and write “reviews”. A HG presents this ecosystem using three node types [user, product, review] and three edge types [user-buy-product, user-write-review, review-on-product]. Individual products, users, and reviews are then presented as nodes and their relationships as edges in the HG with the corresponding node and edge types.

E-commerce heterogeneous graph.

In addition to all connectivity information, HGs are commonly given with input node attributes that summarize each node’s information. Input node attributes can have different modalities across different node types. For instance, images of products could be given as input node attributes for the product nodes, while text can be given as input attributes to review nodes. Node labels (e.g., the category of each product or the category that most interests each user) are what we want to predict on each node.
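To make the structure concrete, here is a minimal sketch of the e-commerce HG as a plain Python container. The `HeteroGraph` class, its method names, and the sample attribute values are hypothetical and chosen only for illustration; they are not taken from the paper or from any graph library.

```python
from dataclasses import dataclass, field


@dataclass
class HeteroGraph:
    """Toy heterogeneous graph: typed nodes, typed edges, optional labels."""
    # node_type -> {node_id: input attribute (any modality)}
    nodes: dict = field(default_factory=dict)
    # (src_type, relation, dst_type) -> list of (src_id, dst_id) pairs
    edges: dict = field(default_factory=dict)
    # node_type -> {node_id: label}; a type with no entries is zero-labeled
    labels: dict = field(default_factory=dict)

    def add_node(self, node_type, node_id, attribute, label=None):
        self.nodes.setdefault(node_type, {})[node_id] = attribute
        if label is not None:
            self.labels.setdefault(node_type, {})[node_id] = label

    def add_edge(self, src_type, relation, dst_type, src_id, dst_id):
        key = (src_type, relation, dst_type)
        self.edges.setdefault(key, []).append((src_id, dst_id))

    def label_coverage(self, node_type):
        """Fraction of nodes of this type that carry a label."""
        total = len(self.nodes.get(node_type, {}))
        labeled = len(self.labels.get(node_type, {}))
        return labeled / total if total else 0.0


graph = HeteroGraph()
# Placeholder attributes: an image reference, a feature vector, and raw text.
graph.add_node("product", "p1", attribute="<product image>", label="electronics")
graph.add_node("user", "u1", attribute=[0.2, 0.7])  # no label available
graph.add_node("review", "r1", attribute="Great headphones.")

graph.add_edge("user", "buy", "product", "u1", "p1")
graph.add_edge("user", "write", "review", "u1", "r1")
graph.add_edge("review", "on", "product", "r1", "p1")

product_coverage = graph.label_coverage("product")
user_coverage = graph.label_coverage("user")
```

In this toy graph the product type is fully labeled while the user type has no labels at all, which is the kind of imbalance between node types that the rest of the post addresses.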

HGNNs and label scarcity issues

HGNNs compute node embeddings that summarize each node’s local structures (including the node’s and its neighbors’ information). These node embeddings are used by a classifier to predict each node’s label. To train a HGNN model and a classifier to predict labels for a specific node type, we require a good amount of labels for that type.

A common issue in industrial applications of deep learning is label scarcity, and with their diverse node types, HGNNs are even more likely to face this challenge. For instance, publicly available content node types (e.g., product nodes) are abundantly labeled, whereas labels for user or account nodes may not be available due to privacy restrictions. This means that in most standard training settings, HGNN models can only learn to make good inferences for a few label-abundant node types and usually cannot make any inferences for the remaining node types (given the absence of any labels for them).

Transfer learning on heterogeneous graphs

Zero-shot transfer learning is a technique used to improve the performance of a model on a target domain with no labels by using the knowledge learned by the model from another related source domain with adequately labeled data. To apply transfer learning to solve this label scarcity issue for certain node types in HGs, the target domain would be the zero-labeled node types. Then what would be the source domain? Previous work commonly sets the source domain as the same type of nodes located in a different HG, assuming those nodes are abundantly labeled. This graph-to-graph transfer learning approach pre-trains a HGNN model on the external HG and then runs the model on the original (label-scarce) HG.

However, these approaches are not applicable in many real-world scenarios for three reasons. First, any external HG that could be used in a graph-to-graph transfer learning setting would almost surely be proprietary and thus likely unavailable. Second, even if practitioners could obtain access to an external HG, it is unlikely that the distribution of that source HG would match their target HG well enough to apply transfer learning. Finally, node types suffering from label scarcity are likely to suffer the same issue on other HGs (e.g., privacy issues on user nodes).

Our approach: Transfer learning between node types within a heterogeneous graph

Here, we shed light on a more practical source domain: other node types with abundant labels located on the same HG. Instead of using extra HGs, we transfer knowledge within a single HG (assumed to be fully owned by the practitioners) across different types of nodes. More specifically, we pre-train a HGNN model and a classifier on a label-abundant (source) node type, then reuse the models on the zero-labeled (target) node types located in the same HG without additional fine-tuning. The one requirement is that the source and target node types share the same label set (e.g., in the e-commerce HG, product nodes have a label set describing product categories, and user nodes share the same label set describing their favorite shopping categories).

Why is it challenging?

Unfortunately, we cannot directly reuse the pre-trained HGNN and classifier on the target node type. One crucial characteristic of HGNN architectures is that they are composed of modules specialized to each node type to fully learn the multiplicity of HGs. HGNNs use distinct sets of modules to compute embeddings for each node type. In the figure below, blue- and red-colored modules are used to compute node embeddings for the source and target node types, respectively.

HGNNs are composed of modules specialized to each node type and use distinct sets of modules to compute embeddings of different node types. More details can be found in the paper.

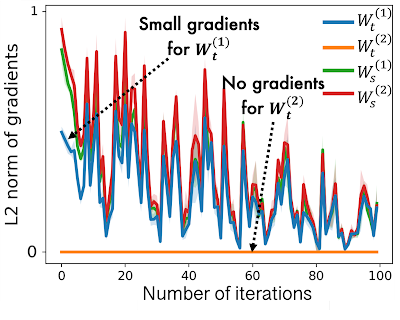

While pre-training HGNNs on the source node type, source-specific modules in the HGNNs are well trained, but target-specific modules are under-trained because only a small amount of gradient flows into them. This is shown below, where we see that the L2 norm of gradients for target node types (i.e., Mtt) is much lower than for source types (i.e., Mss). In this case a HGNN model outputs poor node embeddings for the target node type, which results in poor task performance.

In HGNNs, target type-specific modules receive zero or only a small amount of gradients during pre-training on the source node type, leading to poor performance on the target node type.
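The gradient imbalance can be reproduced on a toy model. The sketch below is an assumption-laden illustration, not the architecture from the paper: it uses a two-layer linear message-passing model with one weight matrix per (edge type, layer), random dense adjacency blocks, a squared-error loss defined on source nodes only, and finite-difference gradients. All names (`params`, `forward`, `grad_norm`, the key convention) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n_s, n_t, d = 5, 4, 3  # source nodes, target nodes, embedding size

# Key "xy" means: messages arriving at type x from type y (s=source, t=target).
A = {
    "ss": rng.random((n_s, n_s)),
    "st": rng.random((n_s, n_t)),
    "ts": rng.random((n_t, n_s)),
    "tt": rng.random((n_t, n_t)),
}
x_s = rng.normal(size=(n_s, d))
x_t = rng.normal(size=(n_t, d))
y_s = rng.normal(size=(n_s, d))  # regression targets exist for source nodes only

# One type-specific weight matrix per (edge type, layer), e.g. "tt2".
params = {f"{e}{l}": 0.5 * rng.normal(size=(d, d)) for e in A for l in (1, 2)}


def forward(p):
    """Two layers of linear, type-specific message passing."""
    h_s, h_t = x_s, x_t
    for l in (1, 2):
        new_s = A["ss"] @ h_s @ p[f"ss{l}"] + A["st"] @ h_t @ p[f"st{l}"]
        new_t = A["ts"] @ h_s @ p[f"ts{l}"] + A["tt"] @ h_t @ p[f"tt{l}"]
        h_s, h_t = new_s, new_t
    return h_s, h_t


def source_loss(p):
    """Loss that only sees the final source-type embeddings."""
    h_s, _ = forward(p)
    return float(np.mean((h_s - y_s) ** 2))


def grad_norm(name, eps=1e-5):
    """L2 norm of a central finite-difference gradient for one module."""
    base = params[name]
    grad = np.zeros_like(base)
    for idx in np.ndindex(base.shape):
        plus = {**params, name: base.copy()}
        minus = {**params, name: base.copy()}
        plus[name][idx] += eps
        minus[name][idx] -= eps
        grad[idx] = (source_loss(plus) - source_loss(minus)) / (2 * eps)
    return float(np.linalg.norm(grad))


norms = {name: grad_norm(name) for name in params}
for name in sorted(norms):
    print(f"{name}: {norms[name]:.4f}")
```

In this toy setup the last-layer modules that only write into target embeddings (`ts2`, `tt2`) never influence the source loss, so their gradient is exactly zero, while their first-layer counterparts are reached only indirectly through the second layer.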

KTN: Trainable cross-type transfer learning for HGNNs

Our work focuses on transforming the (poor) target node embeddings computed by a pre-trained HGNN model to follow the distribution of the source node embeddings. Then the classifier, pre-trained on the source node type, can be reused for the target node type. How can we map the target node embeddings to the source domain? To answer this question, we investigate how HGNNs compute node embeddings to learn the relationship between source and target distributions.

HGNNs aggregate connected node embeddings to augment a target node’s embeddings in each layer. In other words, the node embeddings for both source and target node types are updated using the same input: the previous layer’s node embeddings of any connected node types. This means that they can be represented by each other. We prove this relationship theoretically and find there is a mapping matrix (defined by HGNN parameters) from the target domain to the source domain (more details in Theorem 1 in the paper). Based on this theorem, we introduce an auxiliary neural network, which we refer to as a Knowledge Transfer Network (KTN), that receives the target node embeddings and then transforms them by multiplying them with a (trainable) mapping matrix. We then define a regularizer that is minimized along with the performance loss in the pre-training phase to train the KTN. At test time, we map the target embeddings computed from the pre-trained HGNN to the source domain using the trained KTN for classification.
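The mapping step can be sketched in a few lines of NumPy. Everything beyond "multiply target embeddings by a trainable matrix" is an assumption made for illustration: the regularizer form used here (distance between source embeddings and mapped target embeddings aggregated over a cross-type adjacency), the synthetic data, and the closed-form least-squares fit that stands in for joint gradient-based training. See the paper for the actual definitions.

```python
import numpy as np


def ktn_map(h_target, t_matrix):
    """Map target-type embeddings toward the source embedding space."""
    return h_target @ t_matrix


def ktn_regularizer(h_source, h_target, adj_st, t_matrix):
    """Assumed regularizer: distance between source embeddings and the
    mapped target embeddings aggregated over source-target edges."""
    aggregated = adj_st @ ktn_map(h_target, t_matrix)
    return float(np.linalg.norm(h_source - aggregated))


rng = np.random.default_rng(0)
n_source, n_target, dim = 8, 6, 3

# Hypothetical cross-type adjacency: source node i is linked to
# target nodes i and i + 1 (modulo the number of target nodes).
adj_st = np.zeros((n_source, n_target))
for i in range(n_source):
    adj_st[i, i % n_target] = 1.0
    adj_st[i, (i + 1) % n_target] = 1.0

h_target = rng.normal(size=(n_target, dim))
# Synthetic ground-truth mapping, used only to generate toy source embeddings.
true_map = rng.normal(size=(dim, dim))
h_source = adj_st @ h_target @ true_map

# Before fitting: the identity mapping leaves the two spaces misaligned.
loss_before = ktn_regularizer(h_source, h_target, adj_st, np.eye(dim))

# Stand-in for training: least-squares fit of the mapping matrix.
t_fit, *_ = np.linalg.lstsq(adj_st @ h_target, h_source, rcond=None)
loss_after = ktn_regularizer(h_source, h_target, adj_st, t_fit)

print(f"regularizer before: {loss_before:.4f}, after: {loss_after:.2e}")
```

Once a mapping like `t_fit` is available, `ktn_map(h_target, t_fit)` would be fed to the classifier that was pre-trained on the source node type, which mirrors the test-time procedure described above.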

Experimental results

To examine the effectiveness of KTNs, we ran 18 different zero-shot transfer learning tasks on two public heterogeneous graphs, Open Academic Graph and Pubmed. We compare KTN with eight state-of-the-art transfer learning methods (DAN, JAN, DANN, CDAN, CDAN-E, WDGRL, LP, EP). As shown below, KTN consistently outperforms all baselines on all tasks, beating transfer learning baselines by up to 140% (as measured by Normalized Discounted Cumulative Gain, a ranking metric).

Zero-shot transfer learning on Open Academic Graph (OAG-CS) and Pubmed datasets. The colors represent different categories of transfer learning baselines against which the results are compared. Yellow: Use statistical properties (e.g., mean, variance) of distributions. Green: Use adversarial models to transfer knowledge. Orange: Transfer knowledge directly via graph structure using label propagation.
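For readers unfamiliar with the metric, the following is a minimal sketch of Normalized Discounted Cumulative Gain in its common form (linear gain, log2 position discount, no rank cutoff). Whether the paper uses this exact variant is an assumption; the function names and sample scores are hypothetical.

```python
import math


def dcg(relevances):
    """Discounted cumulative gain of relevances listed in ranked order."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances))


def ndcg(scores, relevances):
    """NDCG of the ranking induced by sorting items by predicted score."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    ranked = [relevances[i] for i in order]
    ideal_dcg = dcg(sorted(relevances, reverse=True))
    return dcg(ranked) / ideal_dcg if ideal_dcg > 0 else 0.0


# Three candidate labels for one node; only the first label is correct.
relevances = [1, 0, 0]
perfect = ndcg([0.9, 0.1, 0.5], relevances)   # correct label ranked first
degraded = ndcg([0.1, 0.9, 0.5], relevances)  # correct label ranked last
print(perfect, degraded)
```

Ranking the correct label first gives a score of 1, and pushing it to third place halves the score because of the log2 discount at that position.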

Most importantly, KTN can be applied to almost all HGNN models that have node and edge type-specific parameters and improve their zero-shot performance on target domains. As shown below, KTN improves accuracy on zero-labeled node types across six different HGNN models (R-GCN, HAN, HGT, MAGNN, MPNN, H-MPNN) by up to 190%.

KTN can be applied to six different HGNN models and improve their zero-shot performance on target domains.

Takeaways

Various ecosystems in industry can be presented as heterogeneous graphs. HGNNs summarize heterogeneous graph information into effective representations. However, label scarcity issues on certain types of nodes prevent the wider application of HGNNs. In this post, we introduced KTN, the first cross-type transfer learning method designed for HGNNs. With KTN, we can fully exploit the richness of heterogeneous graphs via HGNNs regardless of label scarcity. See the paper for more details.

Acknowledgements

This paper is joint work with our co-authors John Palowitch (Google Research), Dustin Zelle (Google Research), Ziniu Hu (Intern, Google Research), and Russ Salakhutdinov (CMU). We thank Tom Small for creating the animated figure in this blog post.