Google Vizier is the de facto system for blackbox optimization over objective functions and hyperparameters across Google, having serviced some of Google's largest research efforts and optimized a wide range of products (e.g., Search, Ads, YouTube). For research, it has not only reduced language model latency for users, designed computer architectures, accelerated hardware, assisted protein discovery, and enhanced robotics, but also provided a reliable backend interface for users to search for neural architectures and evolve reinforcement learning algorithms. To operate at the scale of optimizing thousands of users' critical systems and tuning millions of machine learning models, Google Vizier solved key design challenges in supporting diverse use cases and workflows, while remaining strongly fault-tolerant.

Today we're excited to announce Open Source (OSS) Vizier (with an accompanying systems whitepaper published at the AutoML Conference 2022), a standalone Python package based on Google Vizier. OSS Vizier is designed for two main purposes: (1) managing and optimizing experiments at scale in a reliable and distributed manner for users, and (2) developing and benchmarking algorithms for automated machine learning (AutoML) researchers.

System design

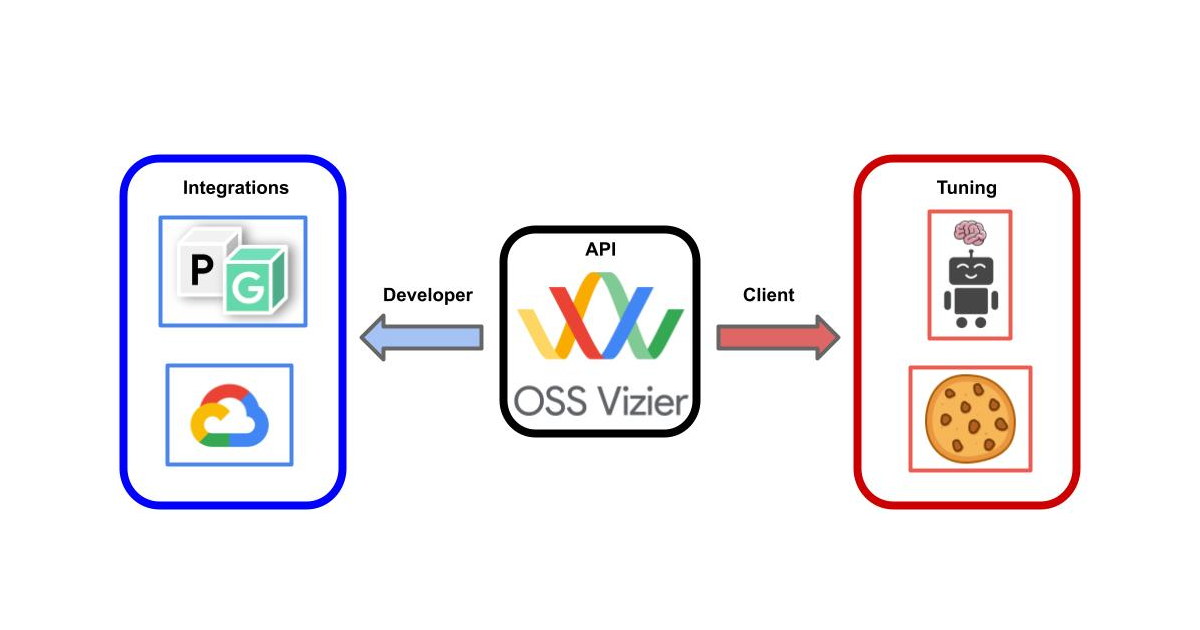

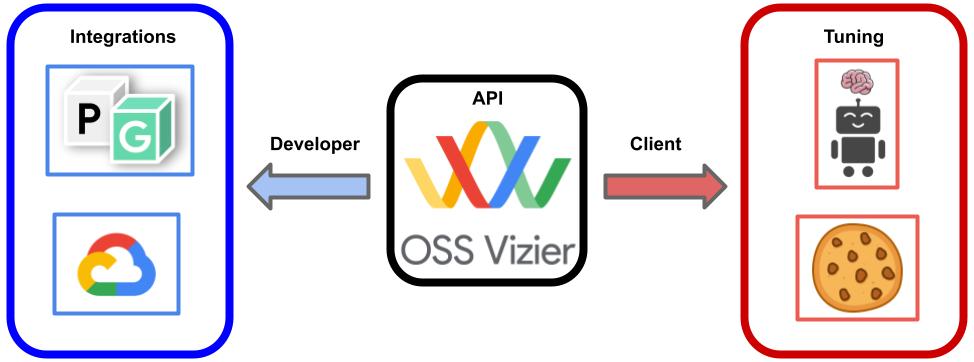

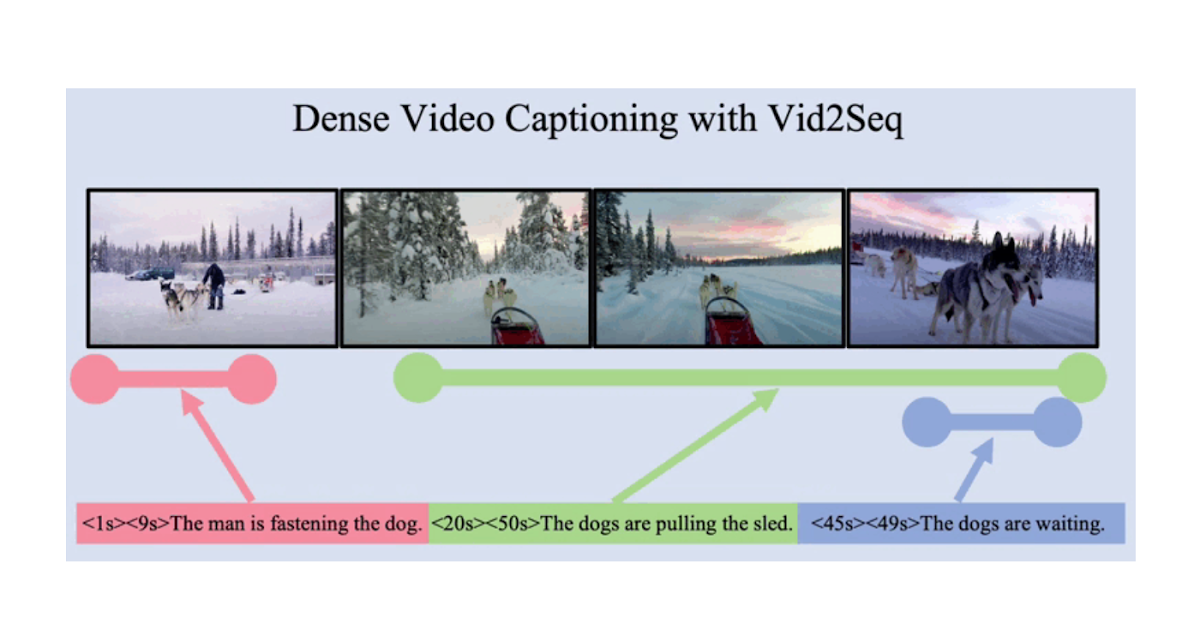

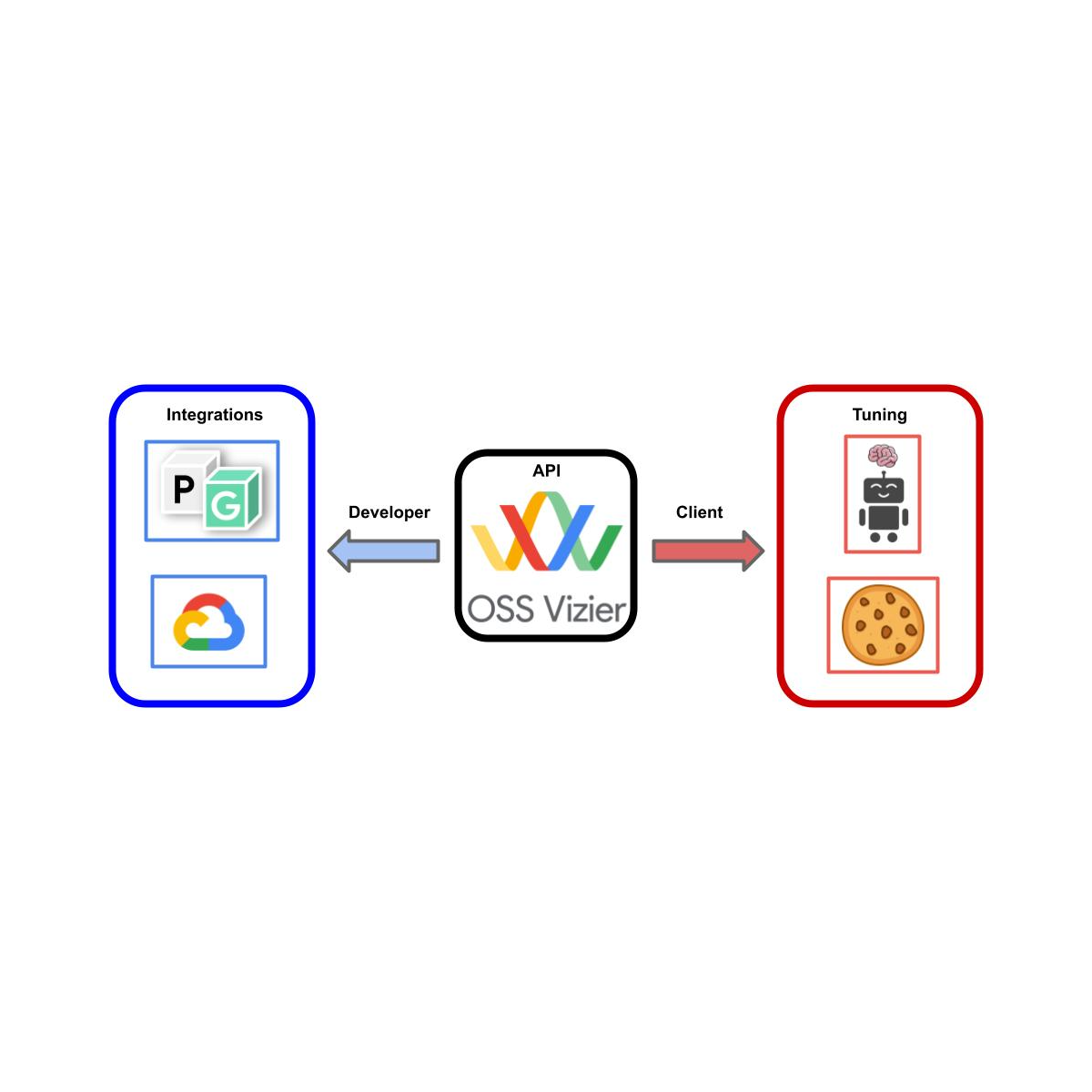

OSS Vizier works by having a server provide services, namely the optimization of blackbox objectives, or functions, to multiple clients. In the main workflow, a client sends a remote procedure call (RPC) and asks for a suggestion (i.e., a proposed input for the client's blackbox function), at which point the service spawns a worker to launch an algorithm (i.e., a Pythia policy) that computes the next suggestions. The suggestions are then evaluated by clients to form their corresponding objective values and measurements, which are sent back to the service. This pipeline is repeated multiple times to form an entire tuning trajectory.
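The suggest, evaluate, and report-back cycle described above can be sketched in a few lines of plain Python. This is a minimal illustration only: `ToyService`, `Trial`, `blackbox`, and `run_study` are hypothetical names invented for this sketch, the "policy" is simple random search, and everything runs in one process rather than over RPC. It is not the OSS Vizier API.

```python
import random
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Trial:
    """One suggestion plus, once evaluated, its objective value."""
    trial_id: int
    parameters: dict
    objective: Optional[float] = None


@dataclass
class ToyService:
    """Hypothetical in-memory stand-in for the server (random-search policy)."""
    low: float
    high: float
    seed: int = 0
    trials: list = field(default_factory=list)

    def __post_init__(self):
        self._rng = random.Random(self.seed)

    def suggest(self, count=1):
        """Propose `count` new inputs for the client's blackbox function."""
        new_trials = []
        for _ in range(count):
            x = self._rng.uniform(self.low, self.high)
            trial = Trial(trial_id=len(self.trials) + 1, parameters={"x": x})
            self.trials.append(trial)
            new_trials.append(trial)
        return new_trials

    def complete(self, trial, objective):
        """Record the measurement the client reports back."""
        trial.objective = objective

    def best(self):
        """Return the completed trial with the highest objective."""
        done = [t for t in self.trials if t.objective is not None]
        return max(done, key=lambda t: t.objective)


def blackbox(x):
    """Client-side objective, maximized at x = 2."""
    return -(x - 2.0) ** 2


def run_study(num_rounds=20, batch=2):
    """Repeat the suggest/evaluate/complete cycle to build a trajectory."""
    service = ToyService(low=0.0, high=5.0)
    for _ in range(num_rounds):
        for trial in service.suggest(count=batch):
            service.complete(trial, blackbox(trial.parameters["x"]))
    return service
```

Calling `run_study().best()` returns the best trial seen so far. In a real deployment the `suggest` and `complete` calls would be RPCs to the server, and the policy would run in a worker on the service side.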

The use of the ubiquitous gRPC library, which is compatible with most programming languages, such as C++ and Rust, allows maximum flexibility and customization: users can write their own custom clients and even algorithms outside of the default Python interface. Since the entire process is saved to an SQL datastore, a smooth recovery is ensured after a crash, and usage patterns can be stored as valuable datasets for research into meta-learning and multitask transfer-learning methods such as the OptFormer and HyperBO.
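To illustrate why persisting every step to an SQL datastore makes recovery straightforward, here is a small sketch using Python's built-in `sqlite3` module. The table layout and the helper names (`open_store`, `add_suggestion`, `complete_trial`, `pending_trials`, `simulate_crash_and_recover`) are assumptions made for this example and do not reflect OSS Vizier's actual schema.

```python
import os
import sqlite3
import tempfile


def open_store(path):
    """Open (or create) the trial table; safe to call again after a crash."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS trials ("
        "id INTEGER PRIMARY KEY, x REAL NOT NULL, objective REAL)"
    )
    conn.commit()
    return conn


def add_suggestion(conn, x):
    """Persist a suggestion before handing it to the client."""
    cur = conn.execute("INSERT INTO trials (x) VALUES (?)", (x,))
    conn.commit()
    return cur.lastrowid


def complete_trial(conn, trial_id, objective):
    """Persist the measurement reported for a trial."""
    conn.execute(
        "UPDATE trials SET objective = ? WHERE id = ?", (objective, trial_id)
    )
    conn.commit()


def pending_trials(conn):
    """Trials that were suggested but never reported back."""
    return conn.execute(
        "SELECT id, x FROM trials WHERE objective IS NULL ORDER BY id"
    ).fetchall()


def simulate_crash_and_recover():
    """Write two trials, 'crash', then reopen and list unfinished work."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "study.db")
        conn = open_store(path)
        first = add_suggestion(conn, 1.5)
        add_suggestion(conn, 3.0)
        complete_trial(conn, first, -0.25)
        conn.close()  # Stand-in for the process dying.

        conn = open_store(path)  # Restart: state is read back from disk.
        pending = pending_trials(conn)
        conn.close()
        return pending
```

After the simulated restart, the second trial is still listed as pending, so it can be handed out again instead of being lost. The same table of completed trials is the kind of record that could later be exported as a dataset.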

Usage

Because OSS Vizier is built as a service, in which clients can send requests to the server at any point in time, it is designed for a broad range of scenarios: the budget of evaluations, or trials, can range from tens to millions, and the evaluation latency can range from seconds to weeks. Evaluations can be done asynchronously (e.g., tuning an ML model) or in synchronous batches (e.g., wet lab settings involving multiple simultaneous experiments). Furthermore, evaluations may fail due to transient errors and be retried, or may fail due to persistent errors (e.g., the evaluation is impossible) and should not be retried.
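The distinction between transient and persistent failures can be made concrete with a short client-side sketch. The exception classes, the status strings, and the helpers `evaluate_with_retries` and `make_flaky_objective` are hypothetical and chosen for illustration; they are not part of OSS Vizier.

```python
class TransientError(Exception):
    """Evaluation failed for a reason that may not recur (e.g., preemption)."""


class InfeasibleError(Exception):
    """Evaluation can never succeed for these parameters."""


def evaluate_with_retries(evaluate, parameters, max_attempts=3):
    """Return (status, payload), retrying only on transient errors."""
    last_error = None
    for _ in range(max_attempts):
        try:
            return "COMPLETED", evaluate(parameters)
        except InfeasibleError as err:
            # Persistent failure: retrying would waste the budget.
            return "INFEASIBLE", str(err)
        except TransientError as err:
            # Transient failure: remember it and try again.
            last_error = err
    return "FAILED", str(last_error)


def make_flaky_objective(failures_before_success):
    """Build an objective that fails transiently a fixed number of times."""
    state = {"remaining": failures_before_success}

    def objective(parameters):
        if parameters["x"] < 0:
            raise InfeasibleError("x must be non-negative")
        if state["remaining"] > 0:
            state["remaining"] -= 1
            raise TransientError("worker preempted")
        return parameters["x"] ** 0.5

    return objective
```

For example, `evaluate_with_retries(make_flaky_objective(2), {"x": 4.0})` succeeds on the third attempt, whereas a negative `x` is reported as infeasible immediately without consuming further attempts.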

This broadly supports a variety of applications, which include hyperparameter tuning of deep learning models and optimizing non-computational objectives, which can be, for example, physical, chemical, biological, mechanical, or even human-evaluated, such as cookie recipes.

Integrations, algorithms, and benchmarks

As Google Vizier is heavily integrated with many of Google's internal frameworks and products, OSS Vizier will naturally be heavily integrated with many of Google's open source and external frameworks. Most prominently, OSS Vizier will serve as a distributed backend for PyGlove to allow large-scale evolutionary searches over combinatorial primitives such as neural architectures and reinforcement learning algorithms. Furthermore, OSS Vizier shares the same client-based API with Vertex Vizier, allowing users to quickly swap between open-source and production-quality services.

For AutoML researchers, OSS Vizier is also equipped with a useful collection of algorithms and benchmarks (i.e., objective functions) unified under common APIs for assessing the strengths and weaknesses of proposed methods. Most notably, via TensorFlow Probability, researchers can now use the JAX-based Gaussian Process Bandit algorithm, based on the default algorithm in Google Vizier that tunes internal users' objectives.

Resources and future direction

We provide links to the codebase, documentation, and systems whitepaper. We plan to allow user contributions, especially in the form of algorithms and benchmarks, and to further integrate with the open-source AutoML ecosystem. Going forward, we hope to see OSS Vizier become a core tool for expanding research and development in blackbox optimization and hyperparameter tuning.

Acknowledgements

OSS Vizier was developed by members of the Google Vizier team in collaboration with the TensorFlow Probability team: Setareh Ariafar, Lior Belenki, Emily Fertig, Daniel Golovin, Tzu-Kuo Huang, Greg Kochanski, Chansoo Lee, Sagi Perel, Adrian Reyes, Xingyou (Richard) Song, and Richard Zhang.

In addition, we thank Srinivas Vasudevan, Jacob Burnim, Brian Patton, Ben Lee, Christopher Suter, and Rif A. Saurous for further TensorFlow Probability integrations, Daiyi Peng and Yifeng Lu for PyGlove integrations, Hao Li for Vertex/Cloud integrations, Yingjie Miao for AutoRL integrations, Tom Hennigan, Varun Godbole, Pavel Sountsov, Alexey Volkov, Mihir Paradkar, Richard Belleville, Bu Su Kim, Vytenis Sakenas, Yujin Tang, Yingtao Tian, and Yutian Chen for open source and infrastructure help, and George Dahl, Aleksandra Faust, Claire Cui, and Zoubin Ghahramani for discussions.

Lastly, we thank Tom Small for designing the animation for this post.