What is Web Scraping and Why is it Used?

Data is a universal need for solving business and research problems. Questionnaires, surveys, interviews, and forms are all data collection methods; however, they don't quite tap into the biggest data resource available. The Internet is a huge reservoir of data on every plausible subject. Unfortunately, most websites do not offer the option to save and retain the data that can be seen on their web pages. Web scraping solves this problem and enables users to scrape large volumes of the data they need.

Web scraping is the automated gathering of content and data from a website or any other resource available on the internet. Unlike screen scraping, web scraping extracts the HTML code underneath the webpage. Users can then process the HTML code of the webpage to extract data and carry out data cleaning, manipulation, and analysis.

Exhaustive amounts of this data can even be stored in a database for large-scale data analysis projects. The prominence of and need for data analysis, along with the amount of raw data that can be generated using web scrapers, has led to the development of tailor-made Python packages that make web scraping easy as pie.

Applications of Web Scraping

- Sentiment analysis: While most websites used for sentiment analysis, such as social media websites, have APIs that allow users to access data, this is not always enough. In order to obtain real-time data on news, conversations, research, and trends, it is often more suitable to web scrape the data.

- Market Research: eCommerce sellers can track products and pricing across multiple platforms to conduct market research on consumer sentiment and competitor pricing. This allows for very efficient monitoring of competitors and price comparisons to maintain a clear view of the market.

- Technological Research: Driverless cars, face recognition, and recommendation engines all require data. Web scraping often provides valuable information from reliable websites and is one of the most convenient and widely used data collection methods for these purposes.

- Machine Learning: While sentiment analysis is a popular machine learning application, it is only one of many. One thing all machine learning algorithms have in common, however, is the large amount of data required to train them. Machine learning fuels research, technological advancement, and overall progress across all fields of learning and innovation. In turn, web scraping can fuel data collection for these algorithms, provided the scraped sources are accurate and reliable.

Understanding the Role of Selenium and Python in Scraping

Python has libraries for almost any purpose a user can think of, including libraries for tasks such as web scraping. Selenium comprises several different open-source projects used to carry out browser automation. It supports bindings for several popular programming languages, including the language we will be using in this article: Python.

Initially, Selenium was developed and used primarily for cross-browser testing; however, over time more creative use cases, such as web scraping with Selenium and Python, have been found.

Selenium uses the WebDriver protocol to automate processes on various popular browsers such as Firefox, Chrome, and Safari. This automation can be carried out locally (for purposes such as testing a web page) or remotely (for purposes such as web scraping).

Example: Web Scraping the Title and all Instances of a Keyword from a Specified URL

The general process followed when performing web scraping is:

- Use the WebDriver for the browser being used to get a specific URL.

- Perform automation to obtain the information required.

- Download the required content from the webpage returned.

- Perform data parsing and manipulation on the content.

- Reformat, if needed, and store the data for further analysis.
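The general process above can be sketched as a small pipeline of plain functions. This is an illustration only and does not reproduce the article's code. To keep it runnable without a browser, the Selenium step is passed in as a hypothetical `fetch_html` callable (with Selenium it would call `driver.get(url)` and return `driver.page_source`). Python's standard-library `html.parser` stands in for BeautifulSoup. The names `TitleAndTextParser` and `scrape` are illustrative.

```python
from __future__ import annotations

from html.parser import HTMLParser
from typing import Callable


class TitleAndTextParser(HTMLParser):
    """Collects the <title> text and the text of every <p> element."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.paragraphs: list[str] = []
        self._current: str | None = None  # tag whose text is being collected
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("title", "p"):
            self._current = tag
            self._buffer = []

    def handle_data(self, data):
        # Text inside nested tags such as <b> or <a> is kept as well
        if self._current is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            text = "".join(self._buffer).strip()
            if tag == "title":
                self.title = text
            else:
                self.paragraphs.append(text)
            self._current = None


def scrape(url: str, fetch_html: Callable[[str], str]) -> dict:
    # Get the URL and download the page content (Selenium's job in the article)
    html = fetch_html(url)
    # Parse the HTML and pull out the pieces of interest
    parser = TitleAndTextParser()
    parser.feed(html)
    parser.close()
    # Reshape into a record that is easy to store and analyse later
    return {"url": url, "title": parser.title, "paragraphs": parser.paragraphs}
```

Injecting the fetcher keeps the parsing and storage steps testable on saved HTML. With a live driver, the fetcher would be a short function that calls `driver.get(url)` and returns `driver.page_source`.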

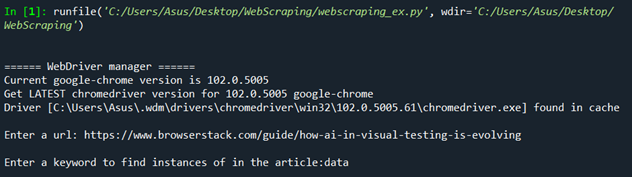

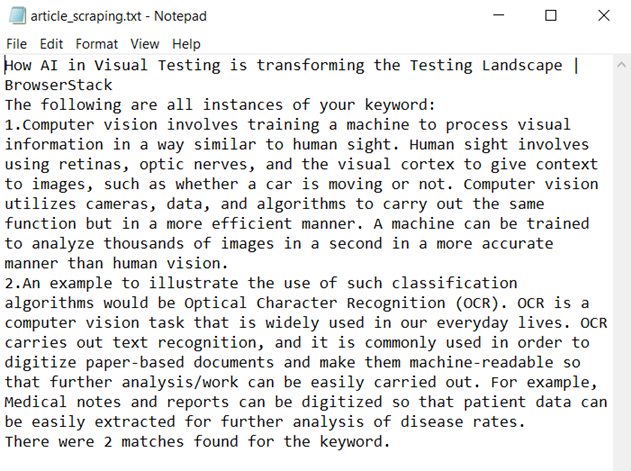

In this example, user input is taken for the URL of an article. Selenium is used along with BeautifulSoup to scrape the page and then carry out data manipulation to obtain the title of the article and all instances of a user-supplied keyword found in it. Following this, the number of instances of the keyword is counted, and all this text data is saved in a text file called article_scraping.txt.

How to perform Web Scraping using Selenium and Python

Prerequisites:

- Set up a Python environment.

- Install Selenium v4. If you have conda or Anaconda set up, the pip package installer is the most convenient way to install Selenium. Simply run this command (in the Anaconda Prompt, or directly in a Linux terminal):

```shell
pip install selenium
```

- Download the latest WebDriver for the browser you wish to use, or install webdriver_manager by running the command below. Also install BeautifulSoup:

```shell
pip install webdriver_manager
pip install beautifulsoup4
```

Step 1: Import the required packages.

```python
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC
from bs4 import BeautifulSoup
import codecs
import re
from webdriver_manager.chrome import ChromeDriverManager
```

Selenium is required in order to carry out web scraping and automate the Chrome browser we'll be using. Selenium uses the WebDriver protocol, so the webdriver manager is imported to obtain the ChromeDriver compatible with the version of the browser being used. BeautifulSoup is needed as an HTML parser, to parse the HTML content we scrape. re is imported in order to use regex to match our keyword. codecs is used to write to a text file.

Step 2: Obtain the version of ChromeDriver compatible with the browser being used.

```python
# Download (or reuse) a matching ChromeDriver and start Chrome with it
driver = webdriver.Chrome(service=Service(ChromeDriverManager().install()))
```

Step 3: Take user input to obtain the URL of the website to be scraped, and web scrape the page.

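```python
val = input("Enter a url: ")
wait = WebDriverWait(driver, 10)
driver.get(val)

# Wait up to 10 seconds for the browser to report the requested URL,
# then read it back (after the wait, not before) to confirm it
wait.until(EC.url_to_be(val))
get_url = driver.current_url

if get_url == val:
    page_source = driver.page_source
```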

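The driver is used to get this URL, and an explicit wait (up to 10 seconds) is used to let the page load and reach the requested address. Then a check is done using the driver's current_url property to ensure that the correct URL is being accessed. Note that url_to_be requires an exact match, so a page that redirects (for example, by appending a trailing slash) will cause the wait to time out.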
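Conceptually, `WebDriverWait(driver, 10).until(...)` evaluates a condition repeatedly until it succeeds or ten seconds pass. Its default polling interval is 0.5 seconds. The pure-Python `wait_until` below is a hypothetical, simplified model of that loop and is not Selenium's implementation. The real class also ignores certain exceptions while polling, which this sketch leaves out.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def wait_until(condition: Callable[[], T], timeout: float = 10.0,
               poll_frequency: float = 0.5) -> T:
    """Call `condition` until it returns a truthy value, then return that value.

    Raises TimeoutError if `timeout` seconds pass without success.
    """
    end = time.monotonic() + timeout
    while True:
        value = condition()
        if value:
            return value
        if time.monotonic() >= end:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll_frequency)  # pause between checks instead of spinning
```

With a live driver, the condition for this step could be `lambda: driver.current_url == val`, which is roughly what `EC.url_to_be(val)` checks.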

Step 4: Use BeautifulSoup to parse the HTML content obtained.

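```python
soup = BeautifulSoup(page_source, features="html.parser")

keyword = input("Enter a keyword to find instances of in the article: ")

# Collect every text node inside <body> that contains the keyword;
# re.escape treats the input as literal text rather than a regex pattern
matches = soup.body.find_all(string=re.compile(re.escape(keyword)))
len_match = len(matches)
title = soup.title.text
```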

The HTML content scraped with Selenium is parsed and made into a soup object. Following this, user input is taken for a keyword to search for in the article's body. The keyword for this example is "data". The body tag in the soup object is searched with a regex for all instances of the word "data". Note that find_all(string=...) returns each text node that contains the keyword, so len_match counts matching text nodes rather than individual occurrences, and the match is case-sensitive. Finally, the text in the title tag is extracted from the soup object.
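Two details of this step are easy to miss. A text node containing the keyword twice counts once. A keyword such as `C++` would be misread as a regular expression unless it is escaped. The hypothetical helper below works on plain strings, so it runs without a browser. The items in `matches`, or all body text from `soup.body.find_all(string=True)`, could be passed to it. It reports both the number of matching nodes and the total number of occurrences.

```python
from __future__ import annotations

import re


def keyword_stats(text_nodes: list[str], keyword: str, flags: int = 0) -> tuple[int, int]:
    """Return (number of nodes containing keyword, total keyword occurrences)."""
    # re.escape makes characters such as "+" or "." match literally,
    # so user input cannot be misread as a regular expression
    pattern = re.compile(re.escape(keyword), flags)
    nodes_with_match = sum(1 for node in text_nodes if pattern.search(node))
    occurrences = sum(len(pattern.findall(node)) for node in text_nodes)
    return nodes_with_match, occurrences
```

Passing `re.IGNORECASE` as `flags` would also count "Data" at the start of a sentence.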

Step 5: Store the data collected in a text file.

```python
# Open (or create) the output file in append mode with an explicit encoding
file = codecs.open('article_scraping.txt', 'a+', encoding='utf-8')
file.write(title + "\n")
file.write("The following are all instances of your keyword:\n")
count = 1
for i in matches:
    file.write(str(count) + "." + i + "\n")
    count += 1
file.write("There were " + str(len_match) + " matches found for the keyword.\n")
file.close()
driver.quit()
```

Use codecs to open a text file titled article_scraping.txt and write the title of the article into the file, followed by a numbered list of all instances of the keyword within the article. Finally, append the number of matches found for the keyword in the article. Close the file and quit the driver.

Output:

Text File Output:

The title of the article, the two instances of the keyword, and the number of matches found can be seen in this text file.
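The text file is easy to read. However, for the further analysis mentioned in the general process, a structured format is simpler to reload. The sketch below is an alternative that is not part of the original example. It appends one JSON object per scraped page, following the JSON Lines convention. The helpers `save_results` and `load_results` and the `.jsonl` file name are hypothetical.

```python
from __future__ import annotations

import json
from pathlib import Path


def save_results(path: Path, url: str, title: str, matches: list[str]) -> None:
    """Append one JSON object per scraped page (JSON Lines style)."""
    record = {
        "url": url,
        "title": title,
        "matches": [str(m) for m in matches],  # str() turns bs4 strings into plain str
        "match_count": len(matches),
    }
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")


def load_results(path: Path) -> list[dict]:
    """Read every stored record back, skipping blank lines."""
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Because each run appends a single line, results from many pages can accumulate in one file and be loaded later in a single call.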

How to use tags to efficiently collect data from web-scraped HTML pages:

```python
# List the name of every tag in the document, then the text each tag contains
print([tag.name for tag in soup.find_all()])
print([tag.text for tag in soup.find_all()])
```

The above code snippet can be used to print all the tags found in the soup object and all the text within those tags. This can help when debugging code or locating errors and issues.
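On a real page, printing every tag produces a very long list. A tally of tag names is often a quicker way to see which tags hold the content. With BeautifulSoup this could be `Counter(tag.name for tag in soup.find_all())`. The sketch below does the same with the standard-library parser so that it runs without bs4. `TagCounter` and `tag_summary` are hypothetical names.

```python
from __future__ import annotations

from collections import Counter
from html.parser import HTMLParser


class TagCounter(HTMLParser):
    """Counts how many times each start tag appears in an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.counts: Counter[str] = Counter()

    def handle_starttag(self, tag, attrs):
        # Self-closing tags such as <br/> are routed here as well
        self.counts[tag] += 1


def tag_summary(html: str) -> Counter[str]:
    parser = TagCounter()
    parser.feed(html)
    parser.close()
    return parser.counts
```

Calling `tag_summary(page_source).most_common(5)` would list the five most frequent tags on the scraped page.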

Other Features of Selenium with Python

You can use some of Selenium's built-in features to carry out further actions, or to automate this process for multiple web pages. The following are some of the most convenient features Selenium offers for efficient browser automation and web scraping with Python:

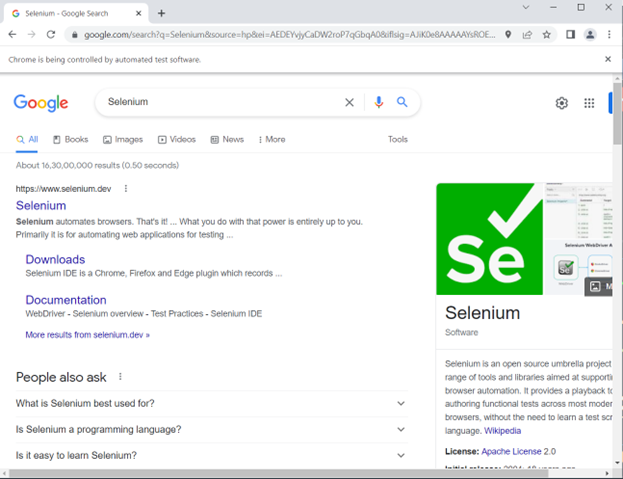

- Filling out forms or carrying out searches

Example of Google search automation using Selenium with Python.

```python
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from webdriver_manager.chrome import ChromeDriverManager
from selenium.webdriver.common.keys import Keys
from selenium.webdriver.common.by import By

driver = webdriver.Chrome(service=Service(ChromeDriverManager().install()))

driver.get("https://www.google.com/")
# Locate the search box by its name attribute, type a query, and submit it
search = driver.find_element(by=By.NAME, value="q")
search.send_keys("Selenium")
search.send_keys(Keys.ENTER)
```

First, the driver loads google.com and finds the search bar using the name locator. It then types "Selenium" into the search bar and presses Enter.

Output:

- Maximizing the window

```python
driver.maximize_window()
```

- Taking Screenshots

```python
driver.save_screenshot('article.png')
```

- Using locators to find elements

Let's say we don't want to get the entire page source and instead only want to web scrape a select few elements. This can be done by using locators in Selenium.

These are some of the locators available for use with Selenium:

- Name

- ID

- Class Name

- Tag Name

- CSS Selector

- XPath
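The ID, Name and Class Name locators are shorthands, and each can be written as an equivalent CSS selector. Recent Selenium 4 Python releases perform a similar rewrite internally before sending `find_element` to the browser driver. The hypothetical helper below spells out the correspondence and is not Selenium code. It assumes the string values of Selenium's `By` constants: `By.ID == "id"`, `By.NAME == "name"`, `By.CLASS_NAME == "class name"`, `By.TAG_NAME == "tag name"` and `By.CSS_SELECTOR == "css selector"`.

```python
def locator_to_css(strategy: str, value: str) -> str:
    """Express a simple Selenium locator as an equivalent CSS selector.

    `strategy` uses the same strings as Selenium's By constants.
    """
    if strategy == "id":
        return f'[id="{value}"]'
    if strategy == "name":
        return f'[name="{value}"]'
    if strategy == "class name":
        return f".{value}"
    if strategy in ("tag name", "css selector"):
        return value  # a bare tag name is already a valid CSS selector
    # XPath and link-text locators have no general one-to-one CSS form
    raise ValueError(f"no simple CSS equivalent for {strategy!r}")
```

In the locator example that follows, `driver.find_element(By.ID, "toc0")` therefore targets the same element as `driver.find_element(By.CSS_SELECTOR, '[id="toc0"]')`.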

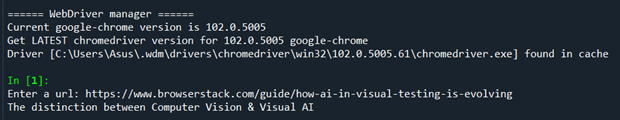

Example of scraping using locators:

```python
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.common.by import By
from webdriver_manager.chrome import ChromeDriverManager

driver = webdriver.Chrome(service=Service(ChromeDriverManager().install()))

val = input("Enter a url: ")

wait = WebDriverWait(driver, 10)
driver.get(val)
wait.until(EC.url_to_be(val))
get_url = driver.current_url

if get_url == val:
    # Retrieve only the element whose id attribute is "toc0" and print its text
    header = driver.find_element(By.ID, "toc0")
    print(header.text)
```

This example's input is the same article as the one in our web scraping example. Once the webpage has loaded, the element we want is retrieved directly via its ID, which can be found by using Inspect Element in the browser.

Output:

The title of the first section is retrieved by using its ID, "toc0", and printed.

- Scrolling

```python
# Run JavaScript in the page to jump to the bottom of the document
driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
```

This scrolls to the bottom of the page and is often helpful for websites that have infinite scrolling.
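On an infinite-scrolling page, a single scroll usually loads only one more batch of content. A common pattern is to keep scrolling until the page height stops changing. The function below is a sketch of that pattern and is not a Selenium API. `scroll_until_stable` and its `pause` and `max_rounds` parameters are hypothetical. The one-second default pause is an assumption that real sites may need to tune.

```python
import time


def scroll_until_stable(driver, pause: float = 1.0, max_rounds: int = 20) -> int:
    """Scroll to the bottom repeatedly until the page height stops growing.

    Returns the number of scrolls performed. `driver` only needs an
    `execute_script` method, so a Selenium WebDriver can be passed in.
    """
    last_height = driver.execute_script("return document.body.scrollHeight")
    for rounds in range(1, max_rounds + 1):
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        time.sleep(pause)  # give the site time to load the next batch
        new_height = driver.execute_script("return document.body.scrollHeight")
        if new_height == last_height:
            return rounds  # nothing new loaded, so the page is fully scrolled
        last_height = new_height
    return max_rounds  # stop anyway on pages that never end
```

The `max_rounds` cap keeps the loop from running forever on feeds that keep loading new content.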

Conclusion

This guide explained the process of web scraping, parsing, and storing the data collected. It also explored web scraping specific elements using locators in Python with Selenium. Furthermore, it provided guidance on how to automate a web page so that the desired data can be retrieved. The information provided should help you carry out reliable data collection and perform insightful data manipulation for further downstream data analysis.

It is recommended to run Selenium tests on a real device cloud for more accurate results, since it takes real user conditions into account while running tests. With BrowserStack Automate, you can access 3000+ real device-browser combinations and test your web application thoroughly for a seamless and consistent user experience.

The post How to perform Web Scraping using Selenium and Python appeared first on Datafloq.